A Quality Score Rant Plus 3 Suggestions For The Engines To Improve It

Far too much has been written about Quality Score already and I’m loath to add to the pile, but I have some ideas that might help us all.

The exact recipes for Quality Score on Google and Bing are unknown, and it is that uncertainty that creates so much room for mischief and blather. Uncertainty causes some smart people to rack their brains trying to figure out what’s in the black box.

Other folks in the scoundrel category seize the opportunity to present themselves as the QS Master who knows the secrets that can lead to riches. At least one such pretender claimed that instead of managing bids he preferred to manage through QS manipulation. Ay, caramba!

The name itself allows some misguided folks the opportunity to create garbage ‘diagnostic’ tools that identify ‘opportunities’ to ‘optimize’ one’s account by identifying ‘poor quality’ ads.

Let’s clarify a few things.

According to Google, Quality Score is calculated from:

- The historical clickthrough rate (CTR) of the keyword and the matched ad on the Google domain; note that CTR on non-Google sites (such as AOL.com) only ever impacts Quality Score on our search partners – not on Google

- Your account history, which is measured by the CTR of all the ads and keywords in your account

- The historical CTR of the display URLs in the ad group

- The quality of your landing page

- The relevance of the keyword to the ads in its ad group

- The relevance of the keyword and the matched ad to the search query

- Your account’s performance in the geographical region where the ad will be shown

- Other relevance factors

CTR

We can essentially combine the first three bullets with the ‘relevance’ and geography bullets to get: Predicted Click-Through Rate. This is the most important piece of the puzzle.

When you look at the math, Google maximizes its revenue per SERP impression when it considers only bid and CTR as factors. Therefore, any component of QS that diminishes the role of CTR in this equation loses them money, at least in the short run.

The engines aren’t going to swing too far away from CTR as the ultimate measure of quality, because doing so costs them money.
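To make that arithmetic concrete, here is a minimal Python sketch. It assumes a deliberately simplified model that is not Google's actual auction: a single ad slot, and an advertiser who pays the full bid on each click. The ad names, bids, and CTRs are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Ad:
    name: str
    bid: float            # maximum cost per click in dollars (hypothetical)
    predicted_ctr: float  # predicted clicks per impression (hypothetical)


def expected_revenue(ad: Ad) -> float:
    """Expected engine revenue per impression under the simplified model."""
    return ad.bid * ad.predicted_ctr


def pick_by_bid(ads):
    """Show the ad with the highest bid, ignoring CTR."""
    return max(ads, key=lambda ad: ad.bid)


def pick_by_bid_times_ctr(ads):
    """Show the ad with the highest bid x predicted CTR."""
    return max(ads, key=expected_revenue)


ads = [
    Ad("high_bid_low_ctr", bid=2.00, predicted_ctr=0.01),
    Ad("low_bid_high_ctr", bid=1.00, predicted_ctr=0.05),
]

for ad in ads:
    print(f"{ad.name}: ${expected_revenue(ad):.3f} per impression")
print("ranked by bid alone:", pick_by_bid(ads).name)
print("ranked by bid x CTR:", pick_by_bid_times_ctr(ads).name)
```

In this toy case the lower bidder earns the engine more per impression because it gets clicked five times as often, which is why any factor that displaces CTR in the ranking costs money in the short run.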

Landing Page

If the landing page provides a lousy experience the user will have a bad impression of not just that site, but the experience of clicking on ads as well. Short term, this doesn’t impact the engines, but in the long run it certainly could.

What landing page provides a lousy user experience? One that is slow, one that is unrelated to the search term, one that is chock full of ads.

If you run a legitimate business and the ads link to the right pages on your site, and the pages load reasonably fast, you’re home free. Moving pixels around on the page isn’t going to lead you to QS Nirvana.

That’s all there is to it.

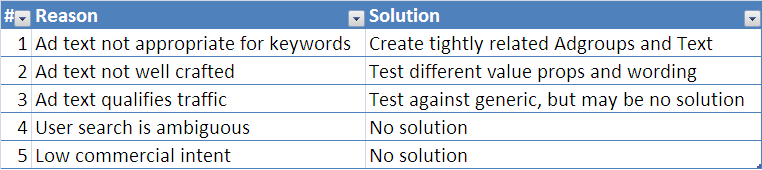

So if it is really all about anticipated CTR, why might my CTR be low?

It’s important to create tight ad groups and write good copy. It is well worth the time to do this right.

But here’s the thing: when people see a low QS they often mistakenly assume the reason is number 1 or 2, when in fact it’s often 3, 4, or 5.

People who don’t understand this waste countless hours trying to find the magic grouping and magic wording to get them 10s. In many instances, there isn’t anything you can or should do to “fix” the “problem”.

Qualifying Ad Copy

Let’s say your business sells high-end jewelry. The mass market jewelers will tout discounts and “Ruby earrings starting at $15…” Your earrings start at $300. You’ll have a higher quality score if you tout discount percentages and offers, but doing so will torpedo your conversion rate.

It’s likely better to qualify the traffic with “Starting at $300” to weed out the discount hunters, but doing so will hurt CTR and therefore QS. Testing will reveal which makes the most sense. As I’ve argued previously, this game favors the mass marketers over the high-end merchants.

User Search Is Ambiguous

Someone searches for “Yamaha”. You sell Yamaha keyboards, but you don’t sell motorcycles, or boats, or stereo equipment. In all likelihood, unless you’re Yamaha corporation, your QS is going to be awful, because only some fraction of the people typing that query are looking for each of those categories. A merchant selling in any single category will therefore have a poor CTR, and a poor QS along with it.
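A rough back-of-the-envelope sketch in Python shows how quickly ambiguity caps CTR. The intent shares and the 10% click rate among interested searchers are invented for illustration, and the model assumes that searchers looking for a different category never click your ad.

```python
def expected_ctr(intent_share: float, ctr_when_relevant: float) -> float:
    """CTR across all searchers when only `intent_share` of them want your category."""
    return intent_share * ctr_when_relevant


# Hypothetical split of what people typing "Yamaha" are looking for.
intent_shares = {
    "motorcycles": 0.40,
    "stereo equipment": 0.25,
    "keyboards": 0.20,
    "boats": 0.15,
}

# Hypothetical CTR among searchers who do want the advertised category.
CTR_WHEN_RELEVANT = 0.10

ctr_by_category = {
    category: expected_ctr(share, CTR_WHEN_RELEVANT)
    for category, share in intent_shares.items()
}

for category, ctr in ctr_by_category.items():
    print(f"{category}: {ctr:.1%}")
```

Under these assumptions even flawless keyboard copy tops out at a fifth of the CTR it would earn on an unambiguous query, and no amount of rewording changes the intent split.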

Low Commercial Intent

Someone searches for “Central Park”. You own a hotel near Central Park, but your QS on that keyword stinks. In all likelihood, this is a function of the fact that most of the people typing that phrase aren’t looking for hotels, trips, restaurants or anything else related to commerce.

Maybe they’re looking for a map, or directions, or history, but the CTR will be lousy because too few people typing that search have an interest in any specific ad.

The fact that the quality score is low doesn’t mean the ad text is sub-optimal and it doesn’t mean you can’t profitably advertise on that keyword!

People worry far too much that having low QS keywords in the account or ad group will hurt the QS of other keywords. The engines have a huge interest in preventing that from happening.

Anticipating CTR accurately is a fundamentally important activity for them tied directly to their bottom lines. They’re going to get this right because it matters to them, and they have a ton of really smart people working on how to get it right.

Again, poor wording and poor grouping absolutely can be problems and should be addressed, but too much time is spent applying medicine to keywords that aren’t sick.

3 Requests For The Engines That Would Help Us All

1. The Reset Button

We’ve inherited accounts that have been poorly managed historically and have to fight an uphill battle initially to overcome poor QS history. Setting up a new account is somewhat of a hassle and doesn’t address the piece of the problem tied to the advertiser’s display URL.

It would be great if there were an “erase history” option that could give the new management team an opportunity to right the ship more quickly. Historical data is often a good predictor of the future, but in cases where new management has taken over, the history can be a lousy predictor. Getting the CTR predictions right benefits the engines and the high quality managers and advertisers.

The challenge is that bad actors will want to hit that button every day to start over, so I propose that each advertiser can only hit that button once a year. Maybe only the engines can hit that button based on a verified and reasonable request.

2. End the promotional event penalty

Some advertisers have promotional events that they want to tout in the ad copy, but we find that changing copy frequently incurs a penalty. The engines have no history to go on for the new keyword-copy combination, so they erase the good history and start you back at square one.

By the time the short-term promotion is over, the engines may not have figured out yet that the new copy actually boosts CTR. This is a tougher engineering nut to crack. We can’t reasonably ask the engines to always assume a change is for the better because in a transition from control copy -> promotional copy -> control copy, the second transition likely hurts CTR.

Perhaps copy blocks can be deemed promotional or control. The engines could then track the average CTR impact of transitions from one to the other and make better assumptions about the CTR of a new change based on that history. Those who try to game the system will have data trends that indicate that there is no historic difference between the two, so no help.
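As a rough illustration of that idea, the Python sketch below averages the relative CTR change seen in an advertiser's past transitions and uses it to seed the prediction for the next one. The record layout, the function names, and the sample numbers are all hypothetical; nothing here reflects how the engines actually model CTR.

```python
from collections import defaultdict
from statistics import mean


def average_lift(transitions):
    """Average relative CTR change for each (from_kind, to_kind) transition."""
    lifts = defaultdict(list)
    for from_kind, to_kind, ctr_before, ctr_after in transitions:
        if ctr_before > 0:  # skip records with no usable baseline
            lifts[(from_kind, to_kind)].append(ctr_after / ctr_before - 1.0)
    return {kinds: mean(values) for kinds, values in lifts.items()}


def predict_ctr(current_ctr, from_kind, to_kind, lift_table):
    """Seed the new copy's CTR from history; assume no change if there is none."""
    return current_ctr * (1.0 + lift_table.get((from_kind, to_kind), 0.0))


# Hypothetical history: (from_kind, to_kind, CTR before, CTR after)
history = [
    ("control", "promotional", 0.040, 0.050),
    ("promotional", "control", 0.050, 0.040),
    ("control", "promotional", 0.020, 0.025),
]

lift_table = average_lift(history)
seeded = predict_ctr(0.040, "control", "promotional", lift_table)
print(f"seeded CTR for new promotional copy: {seeded:.3f}")
```

An advertiser who labels everything “promotional” to game the system would show an average lift near zero, so the seeded prediction would simply equal the current CTR and the label would buy nothing.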

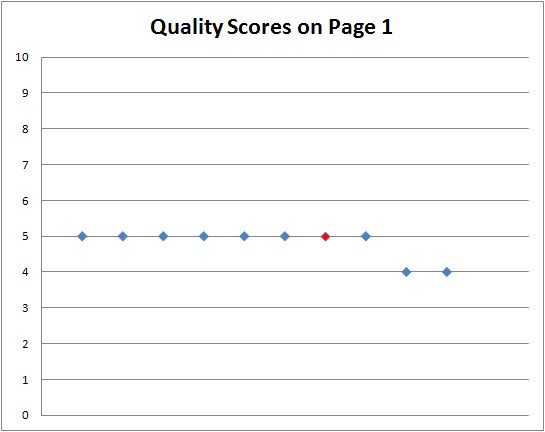

3. Give us a scatter plot

In a big program, it’s tough to tell whether CTR and QS are low because of the copy (#1, 2, or 3 above), or because of the user intent. One good way to know would be to reveal a scatter plot of the QS for all the ads in the top 10 positions.

Without identifying which QS went with which advertiser, we could still get a sense of whether we’ve done a poor job of matching keyword to copy, or whether no company scores well on this particular Keyword.

For example, the plot below would tell us that different copy could help QS. It may not be wise for us to ‘fix’ this copy if it qualifies traffic, but at least we’d know it’s an issue.

This plot would tell us that no advertisers have a great score on this keyword and we’re likely wasting time trying to ‘fix’ a problem that doesn’t exist.

Some of us geeks would prefer a numerical value like standard deviation rather than graphical representation, but perhaps both would be ideal.
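For the numerically inclined, here is a minimal Python sketch of what such a summary might look like. The anonymized score lists, the function name, and the two-point gap threshold are assumptions made for illustration; no engine exposes this data today.

```python
from statistics import mean, pstdev


def summarize_qs(scores, own_score, gap=2):
    """Summarize anonymized Quality Scores for the ads showing on one keyword.

    `gap` is a hypothetical threshold: if the best score beats ours by at
    least this much, someone's copy is doing better and ours may be improvable.
    """
    best = max(scores)
    return {
        "mean": mean(scores),
        "stdev": pstdev(scores),
        "best": best,
        "copy_may_help": best - own_score >= gap,
    }


# Hypothetical keyword where some advertisers score very well.
wide_spread = [3, 4, 4, 5, 7, 8, 8, 9, 9, 10]
# Hypothetical keyword where nobody scores well.
uniformly_low = [3, 3, 4, 4, 4, 4, 5, 5, 5, 5]

print(summarize_qs(wide_spread, own_score=4))
print(summarize_qs(uniformly_low, own_score=4))
```

A wide spread with high scores at the top suggests the copy or grouping is worth a look, while a tight cluster of low scores suggests the keyword itself is the limiting factor.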

We all benefit from working towards better performing programs. These changes will help advertisers and engines alike, by getting better predictions of CTR and helping advertisers identify where to put their finite management resources.

Opinions expressed in this article are those of the guest author and not necessarily Search Engine Land.