Disturbing Michelle Obama Image Makes A Case For Facial Recognition In Google’s New Image Search

Do you remember that disturbing, Photoshopped image of First Lady Michelle Obama that made big news a couple of years ago? It’s hard to find now in Google Image Search unless you know exactly what to type into the search box. Type “michelle obama” and you probably won’t ever see it. (More on that at the end.)

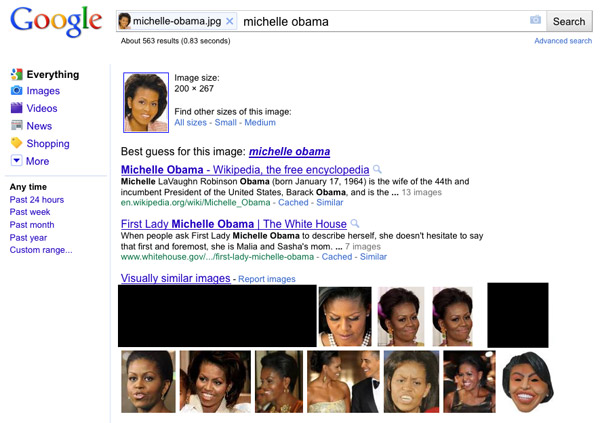

But if you upload the right photo (or wrong photo, as the case may be) of Michelle Obama into Google’s new Search By Image tool, that disturbing image shows up front and center among Google’s search results.

(Note: We’ve blocked the offending image so as to not give its creators the pleasure of more exposure.)

What’s happening here? A Google spokesperson talked to us about this specific search result, and we learned a couple of things:

1. As Google first mentioned at Tuesday’s “Inside Search” event, the company isn’t using facial recognition in the Search By Image tool.

2. The Search By Image algorithm is looking at other things in the uploaded photo — the general shapes and proportions of what’s shown in the image, the colors, the outlines of Obama’s hair and clothing, and so forth.

It makes sense: Because the creators of the offensive image only changed her facial features from the image that I uploaded, and because Google isn’t using facial recognition, the Search By Image algorithm is actually making what I’d call the correct match. Without facial recognition, the disturbing image is essentially the same as the real image that I uploaded.
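To make that reasoning concrete, here is a toy sketch in Python. It is not Google’s algorithm, which the company hasn’t detailed beyond the description above; the function names, the 16x16 grayscale “portraits,” and the grid-averaging approach are all assumptions made up for illustration. The idea is simply that a comparison based on overall layout and tone, with no face-specific check, will score two images as near-identical when only the face region differs.

```python
# Toy illustration only: NOT Google's Search By Image algorithm.
# All names and values here are hypothetical.

def make_portrait(face_value):
    """Build a 16x16 grayscale toy 'portrait'; only the head brightness varies."""
    image = [[200] * 16 for _ in range(16)]   # light background
    for row in range(8, 16):                  # dark "clothing" block
        for col in range(4, 12):
            image[row][col] = 50
    for row in range(2, 8):                   # "head" region
        for col in range(6, 10):
            image[row][col] = face_value
    return image


def grid_signature(image, cells=4):
    """Average brightness in each cell of a cells x cells grid."""
    size = len(image) // cells
    signature = []
    for cell_row in range(cells):
        for cell_col in range(cells):
            total = 0
            for row in range(cell_row * size, (cell_row + 1) * size):
                for col in range(cell_col * size, (cell_col + 1) * size):
                    total += image[row][col]
            signature.append(total / (size * size))
    return signature


def similarity(image_a, image_b):
    """Return a score up to 1.0 (identical layout); lower means less alike."""
    sig_a = grid_signature(image_a)
    sig_b = grid_signature(image_b)
    mean_diff = sum(abs(a - b) for a, b in zip(sig_a, sig_b)) / len(sig_a)
    return 1 - mean_diff / 255


original = make_portrait(face_value=120)
altered = make_portrait(face_value=90)        # same image, different "face"
unrelated = [[230] * 16 for _ in range(8)] + [[30] * 16 for _ in range(8)]

print("original vs. altered:  ", round(similarity(original, altered), 3))
print("original vs. unrelated:", round(similarity(original, unrelated), 3))
```

In this sketch the altered portrait scores far closer to the original than the unrelated image does, because nothing in the comparison gives the face any special weight. A real system would presumably use much richer features, but the same blind spot would apply.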

Will Search History Be Repeated?

Back in 2009, Google initially removed the offending image because it was hosted on a site that was serving malware, which is against Google’s terms of service. But the image showed up on other, “clean” sites and quickly made its way back into the image search results. That prompted Google to buy ads against the search term “michelle obama” to explain to users why search results are sometimes offensive.

Speaking about the Search By Image case above, Google wouldn’t speculate on whether it would run similar ads now. The spokesperson said that Google views these as two very different cases: In 2009, an offensive image was being shown for a very broad text query (“michelle obama”). In this case, an offensive image is being shown in response to a very specific, user-uploaded image, one that is closely associated with the offensive version.

In fact, the offensive image matches the original in every way but one: the First Lady’s face. And without using facial recognition, there’s no way for Google to distinguish between the two.

One Final Note

Back to that first paragraph: If you’re wondering why the offensive image doesn’t show up anymore on searches for “michelle obama,” Google says it’s because of algorithmic improvements, not any specific filtering on her name. The spokesperson said that the company’s internal metrics show that they’re doing a much better job of identifying the authoritativeness of individual images — and the offensive image is not authoritative for Michelle Obama’s name.

P.S. See “Should Rick Santorum’s ‘Google Problem’ Be Fixed?” for a look at how this case compares to Rick Santorum’s “Google Problem.”
