Ask the SMXpert – Optimizing for voice search & virtual assistants

SMXpert Upasna Gautam continues the Q&A from SMX Advanced and discusses a number of topics including creating the right content, using homonyms, stressed words and quality metrics when optimizing for voice search.

Today’s Q&A is from the Optimizing for Voice Search & Virtual Assistants session with Upasna Gautam from Ziff Davis.

Question: How much of an impact will homonyms, accents, and stressed words have in voice search?

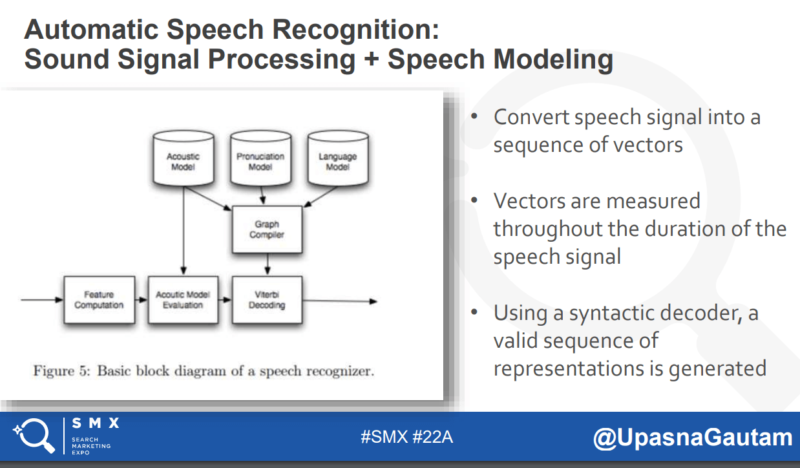

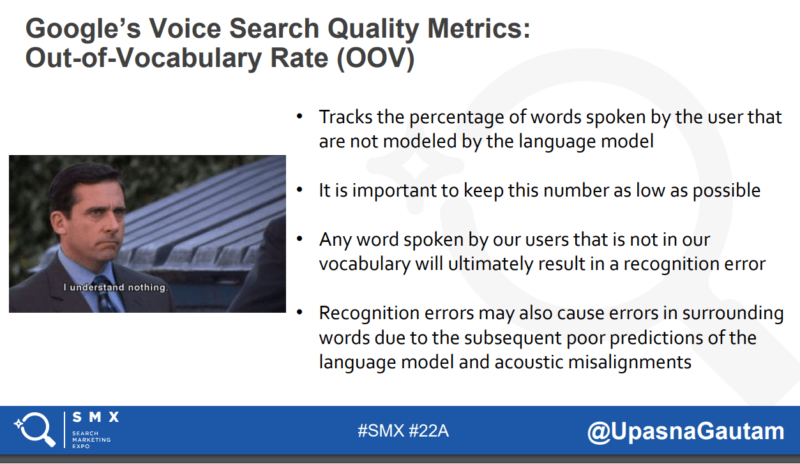

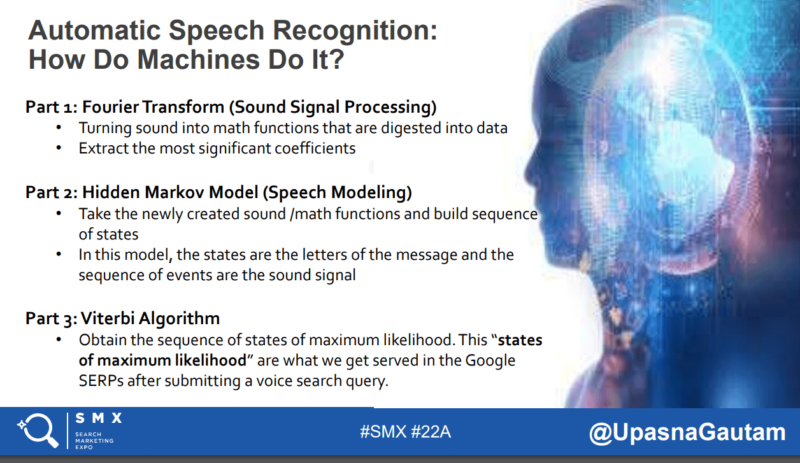

Upasna: The automatic speech recognition capabilities of the voice search system have become intelligent enough to understand accents, dialects, and stressed words, as well as decipher context of homonyms.

Google Assistant Group Product Manager Brad Abrams recently discussed this in the Voicebot Podcast, as he highlights (17:00 mark) how accents do pose problems within a country with regional variations, but that localization involves a lot more than just accents.

This challenge can be addressed in two parts: automated speech recognition (ASR) and natural language understanding (NLU). Speech and accent recognition fall under the ASR segment, while understanding intent, slang, grammar variants and colloquial expressions all need NLU.

When Google added 30 new language varieties last year, they worked with native speakers to collect speech samples by asking them to read common phrases in their own accents and dialects. This process trained their machine learning models to understand the sounds and words of the new languages and improve the accuracy of the system when exposed to more sound samples over time. Neural translation has worked a lot better than the old phrase-based system because it now translates full sentences at a time, instead of fragments of a sentence.

From Google:

To incorporate 30 new language varieties, we worked with native speakers to collect speech samples, asking them to read common phrases. This process trained our machine learning models to understand the sounds and words of the new languages and to improve their accuracy when exposed to more examples over time.

By using this broader context, it can figure out the most relevant translation, which is then rearranged and adjusted to be more like a human speaking with proper grammar. Google search has already existed and functioned in all of those languages for such a long time, which has provided a powerful source of intelligent data to build voice search capabilities that are able to understand user queries and serve relevant answers.

Google speech recognition now supports 119 languages at impressive accuracy rates.

Question: What about Siri? Should we use the same rules as Google voice search?

Upasna: I don’t like the rigidity of the word “rules” when we’re speaking about such a dynamic landscape, so let’s say “best practices.”

Yes, the same best practices can and should be applied, because like Google voice search, we understand how Siri works by understanding how ASR works. Apple already has a lot of ASR models in production, which support 21 languages in 36 countries (perhaps even more now).

Apple has also been working on refining their ASR language models over the past several years and has caught up despite getting a late start in the game.

Question: When creating content for voice search, does it make sense to have a whole page of questions and answers, or is it better to integrate a question/answer into each content piece?

Upasna: The best practice would be to create a clear information architecture within your FAQ section. Create a top-level FAQ page, then group similar questions together within a sub-page to create topical authority and provide long-form answers. Understanding and answering hyper-specific questions is key for voice search, especially for purchase-driven queries.

For example, a voice search user is much more likely to search for “what’s the best waterproof fitness tracker of 2018 that can sync with my iPhone” or “best waterproof fitness tracker for surfing” than just “best fitness tracker.”

In just the last four weeks, my team and I have noticed drastic changes in the search engine results pages (SERPs) for these queries, where the hyper-specific query being searched is providing results in the form of product carousels within the featured snippet and a knowledge graph panel pulling in a specific, single product to answer the question.

The more precisely we can answer these specific questions, the better we can serve the user and gain organic visibility. If you’re not using it already, I recommend you tap into the SEMrush Keyword Magic Tool’s “question” filter.

Question: How do you foresee the adoption of voice search in other countries?

Upasna: Google’s goal is to make the web more inclusive, which means bringing down as many language barriers as possible. I think this has already directly impacted the rate at which voice search is adopted in other countries, and will continue to do so.

The rate of adoption in India is a great example of this progression. According to Rajan Anandan, Google Vice President and Managing Director, South East Asia and India, as of December 2017, 28 percent of search queries in India are conducted by voice and Hindi voice search queries are growing by over 400 percent.

As I mentioned earlier, last year, Google launched voice search capability for 30 new languages, nine of which were Indian languages. The Indian subcontinent itself has 22 official/major languages, 13 different scripts, and over 720 dialects. We can only imagine the challenge of bringing something as complex as voice search to this country, but it is happening.

A speaker of regional Indian languages like Punjabi or Tamil used to have difficulty finding accurate and relevant content in their native languages, but last year, Google brought its new Neural Machine Translation technology to translations between English and nine widely used Indian languages (Hindi, Bengali, Punjabi, Marathi, Tamil, Telugu, Gujarati, Malayalam, and Kannada) – languages that span the entire country.

We know it’s easier to learn a language when we already understand a related language (as is the case with Hindi Punjabi, or Hindi and Gujarati, for example), and Google also discovered their neural technology speaks each language better when it learns several at a time. Because Hindi is the national language and spoken across the country, Google has a lot more sample data for Hindi than its regional relatives Marathi and Bengali. Google has realized that when the languages are trained all together, the translations for all improve more than if each one was trained individually.

We see this in practice with Chrome’s built-in translate functionality. More than 150 million web pages are translated by Chrome users through the magic of machine translations with one click or tap every single day.

With these advances in language accuracy and translation in India, Google statistics now reveal that rural areas are catching up quickly with the metropolitan areas when it comes to internet usage in India as consumers are searching in their preferred languages more than ever before. As more and more people in India discover the internet and its relevant and useful applications, it’s fast weaving into the fabric of everyday life in urban and rural areas alike.

I believe that we’ll continue to see this adoption pattern unfold into other countries as Google continues to feed more language data into and train its Neural Machine Translation system.

Question: Do you think e-commerce is ready for voice search? Where would you start?

Upasna: I think it’s just a step away from being ready. The SERP itself has long been ready, as we’ve seen Google preparing for and integrating e-commerce pages more prominently for years. Especially over the past five years, we’ve seen the entire SERP evolve into a dynamic, purchase-driven environment, with the integration of product carousels, featured snippets with product rankings, research carousels, and of course, the shopping carousel.

I say it’s just a step away from being ready because of the recent erratic behavior of e-commerce SERPs it’s clear that Google is still experimenting. This is the best time to optimize at the product level, detailed content, technical specs, optimized product images, user reviews and ratings, and of course, semantic markup!

Upasna: Here are my #SMXInsights:

Want to see more insights and answers from our SMXperts? Check out our growing library of Ask an SMXpert articles!

Opinions expressed in this article are those of the guest author and not necessarily Search Engine Land. Staff authors are listed here.

Related stories

New on Search Engine Land