Is CTR A Ranking Factor In Organic Results?

Contributor Bartosz Góralewicz shares the results of an experiment he conducted, which suggests that click-through rate (CTR) from search is not a ranking factor.

TL;DR: Contrary to popular belief, click-through rate is not a ranking factor. Even massive organic traffic won’t affect your website’s organic positions.

What Is Click-Through Rate?

Before going any further, let me briefly explain the concept of click-through rate (CTR).

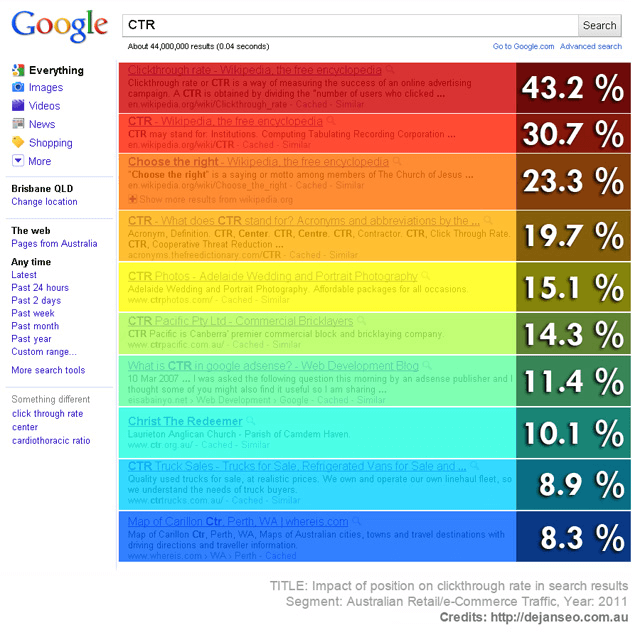

Click-through rate measures the number of people who click a link against the total number of people who had the opportunity to do so. So if a link to your website appears as a listing on a search engine results page (SERP), and 20% of people who view that SERP click your link, then your CTR in that case is 20%.
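
As a quick illustration of the definition above, here is a minimal Python sketch. The function name `click_through_rate` and the sample counts are assumptions chosen for illustration; they are not figures from the experiment.

```python
def click_through_rate(clicks: int, impressions: int) -> float:
    """Return CTR as a percentage: clicks divided by impressions."""
    if impressions <= 0:
        raise ValueError("impressions must be positive")
    return 100.0 * clicks / impressions


# Hypothetical example: 1,000 people see the listing and 200 click it.
ctr = click_through_rate(clicks=200, impressions=1000)
print(f"CTR: {ctr:.1f}%")  # CTR: 20.0%
```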

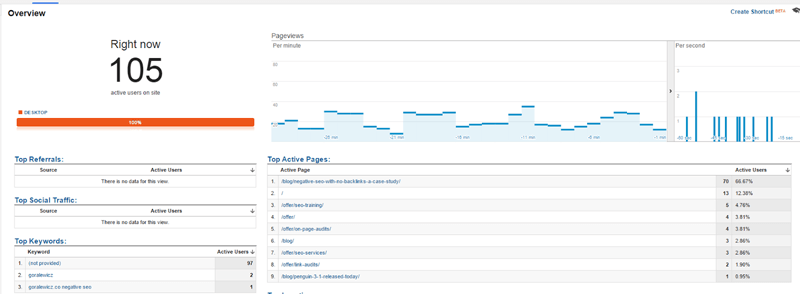

Everyone knows that ranking #1 is much better than ranking #10. Why? Because each position in the search results attracts a different click-through rate. As the accompanying image shows, the first slot in the search results earns a considerably higher click-through rate than the fifth slot and beyond.

Image credit: DEJAN Marketing

The image above was created in 2011, so of course these percentages may not be fully applicable today. However, many studies have sought to measure click-through rates based on search engine rankings over the years, and all have ultimately concluded that higher placements in search engine listings correlate with higher click-through rates.

Why did I pick CTR as the topic of my experiment? Recently, there has been a lot of buzz about CTR. Unfortunately, there are no SEO experiments or case studies from 2015 showing the influence of CTR, as an isolated ranking factor, on rankings. (I will explain this in more detail later in the article.)

Allow me to examine a few possible explanations for the popularity of CTR over the past year.

Click-Through Rate Has Become A Popular Metric

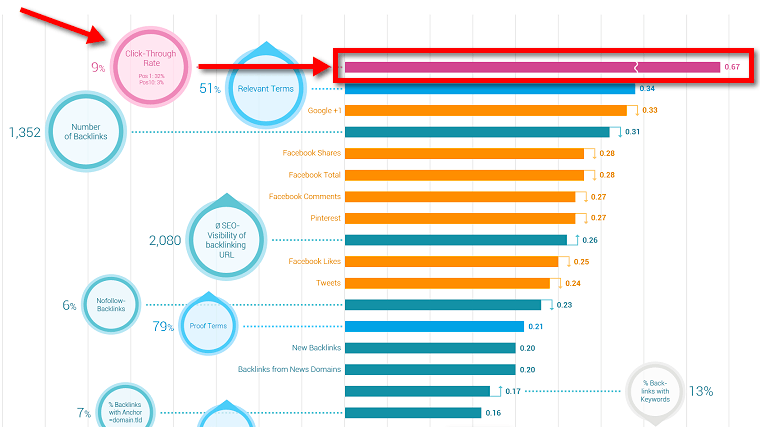

Click-through rate has always been considered an important SEO metric — however, since 2014, it has become very popular due to its placement in Searchmetrics’ SEO Rank Correlations And Ranking Factors 2014.

Image Source: Searchmetrics

Searchmetrics explicitly stated that their analysis was “used to describe differences between certain properties of URLs ranking from position 1 to 30 (without implying any causal relation between property and ranking).” In other words, some of the factors above can correlate with high rankings, but they are not necessarily ranking factors.

Since then, click-through rate has often been mentioned in blog posts, at conferences, and even on Whiteboard Friday as an important ranking factor.

There have been a number of case studies showing that you can influence your rankings through artificial CTR boosting, too. I recently shared a case study on negative SEO without backlinks, in which I managed to decrease the ranking of a page on my website using only click bots. One of the most popular case studies was this one by Rand Fishkin, in which he boosted the rankings of a page on his site using crowdsourced clicks.

In 2015, it all changed. It seems that CTR does not directly influence rankings anymore. This is what I’ve managed to find evidence for in my research, and I want to present my findings in this article.

Idea Behind The Experiment

In a way, this experiment was a huge fail: I’d wanted to see how much I could improve a website’s rankings by increasing its click-through rate.

My hypothesis was that if click-through rate is indeed a ranking factor, then manipulating it should influence a website’s position in Google rankings. In other words, Higher CTR = Higher position in Google.

The Goal Of The Experiment

I wanted to focus on isolating CTR from all the other ranking factors to measure its impact on search engine rankings.

While the results of Rand’s experiment (and others like it) are interesting, many other ranking signals could have influenced them: Chrome data, plugin data, social media, backlinks, and so on. Those experiments therefore didn’t measure CTR in isolation.

I wanted to exclude the “human factor” from the CTR measured in these types of crowdsourced experiments. My goal was to create an SEO experiment measuring the CTR influence that would not be biased by other metrics.

Setting Up The Experiment

What could be the ultimate test of CTR’s impact on rankings? Sending traffic to a domain that would look like a viral trend (based on TV ads, a new product launch, etc.). If CTR is really an important ranking factor, then obviously, this test should result in a major increase in organic rankings.

Finding The Right Content

My aforementioned case study on negative SEO attracted quite a few backlinks. Still, I’d never managed to reach position #1 for the keyword “negative SEO.” The page has historically ranked in the #2 to #4 range. I figured that pushing it to #1 for this keyword would be strong proof of CTR’s influence on rankings.

(Of course, I added some other keywords to make it more precise and clear, but I will explain that in detail later in this article.)

Finding The Right Moment To Start

I waited until new links stopped coming, and I figured that was the best time to start the experiment. The experiment ran through the second and third weeks of March 2015.

Setup & Technical Benchmarks

Google is very precise at spotting fake traffic. As you can imagine, this is a part of their algorithm that is as important as any other. It’s necessary to protect their most valuable asset: AdWords.

I had a pretty good setup of CTR bots, proxies, proxy scrapers, tools and servers after my previous tests, and they were only gathering dust (and billing my PayPal monthly). I already had the chance to test this bot setup with my negative SEO experiment. Still, around 60–70% of my visits were filtered out by Google Analytics and/or Google Search Console.

I decided this had to change if I wanted to take my experiment to the next level. I was seeking to influence Google’s traffic data, such that it would impact Google Trends and the Google Keyword Planner. I would need to achieve the following goals for my setup in order for my experiment to be valid:

- A 60–70% success rate (which was my fail rate before) in having my traffic recorded by Google Analytics and Google Search Console (traffic sent vs. traffic recorded)

- Ensuring the same traffic wouldn’t be filtered out by Google AdWords

- Using a different IP for each visit

- Having all the traffic come from Google.com
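
The first goal above compares traffic sent with traffic recorded. A minimal sketch of that comparison follows; the function names and the sample counts are hypothetical, and the 60% threshold is simply the lower bound of the range stated above.

```python
def recorded_rate(visits_sent: int, visits_recorded: int) -> float:
    """Share of sent visits that show up in analytics, as a percentage."""
    if visits_sent <= 0:
        raise ValueError("visits_sent must be positive")
    return 100.0 * visits_recorded / visits_sent


def meets_target(rate: float, target_low: float = 60.0) -> bool:
    """True when the recorded share reaches the lower bound of the goal."""
    return rate >= target_low


# Hypothetical counts, not data from the experiment.
rate = recorded_rate(visits_sent=1000, visits_recorded=650)
print(f"{rate:.0f}% recorded, target met: {meets_target(rate)}")
# 65% recorded, target met: True
```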

It wasn’t easy, but after a few weeks of fine-tuning my setup, I succeeded and was super-excited to move forward. In theory, all I had to do right then was send all this fake Google organic traffic to my website and wait.

Executing The Experiment

After setting everything up, I started to send the bots to my own domain, https://goralewicz.co. The structure of each visit was as follows:

- Open browser.

- Go to Google.com.

- Enter the query and click on “Google Search”.

- Look for my domain on the search results page. If it is not found, go to the second page, and so on, up to the 12th page.

- Click on the result.

- Stay on the website for ~2–4 minutes while going through random pages.
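
The lookup step in the list above (scan each results page for the domain, stopping after the 12th page) can be sketched as a pure in-memory function. This is only an illustration of the page-by-page logic under assumed inputs: `find_result`, the `pages` structure, and the sample URLs are hypothetical, and the code makes no network requests and is not the tooling used in the experiment.

```python
from typing import Optional, Sequence
from urllib.parse import urlparse


def find_result(pages: Sequence[Sequence[str]], domain: str,
                max_pages: int = 12) -> Optional[tuple[int, int]]:
    """Return (page_number, position_on_page), both 1-based, or None."""
    for page_number, results in enumerate(pages[:max_pages], start=1):
        for position, url in enumerate(results, start=1):
            host = urlparse(url).hostname or ""
            # Match the bare domain or any of its subdomains.
            if host == domain or host.endswith("." + domain):
                return page_number, position
    return None


# Hypothetical result pages, each a list of result URLs.
pages = [
    ["https://example.com/a", "https://example.org/b"],
    ["https://example.net/c", "https://goralewicz.co/blog/"],
]
print(find_result(pages, "goralewicz.co"))  # (2, 2)
```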

The keywords I tested were:

- Goralewicz

- Goralewicz.co

- Goralewicz.co negative SEO

- Negative SEO

- International SEO consultant

- SEO consultant

- SEO training

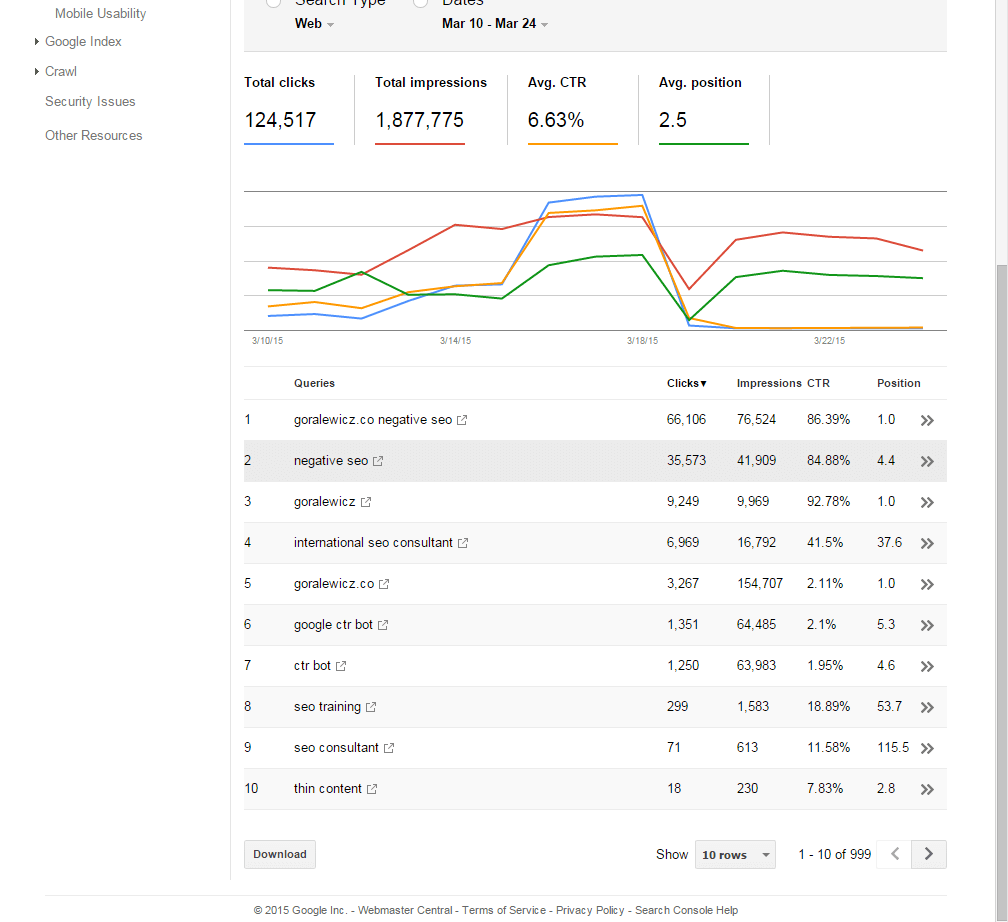

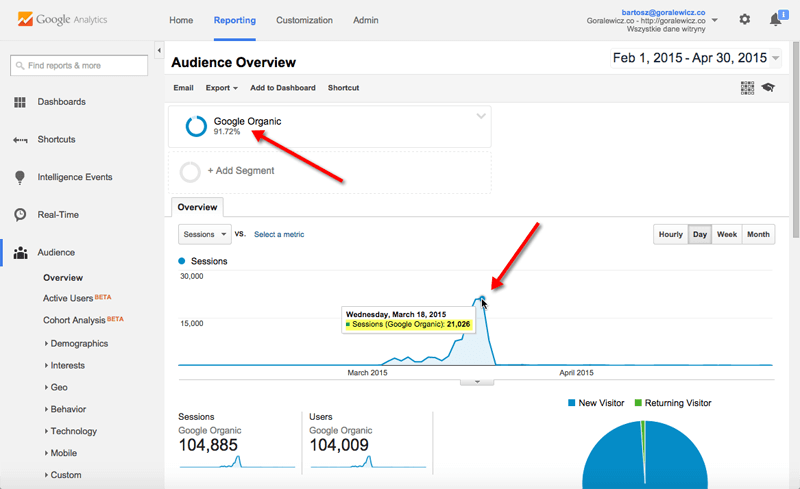

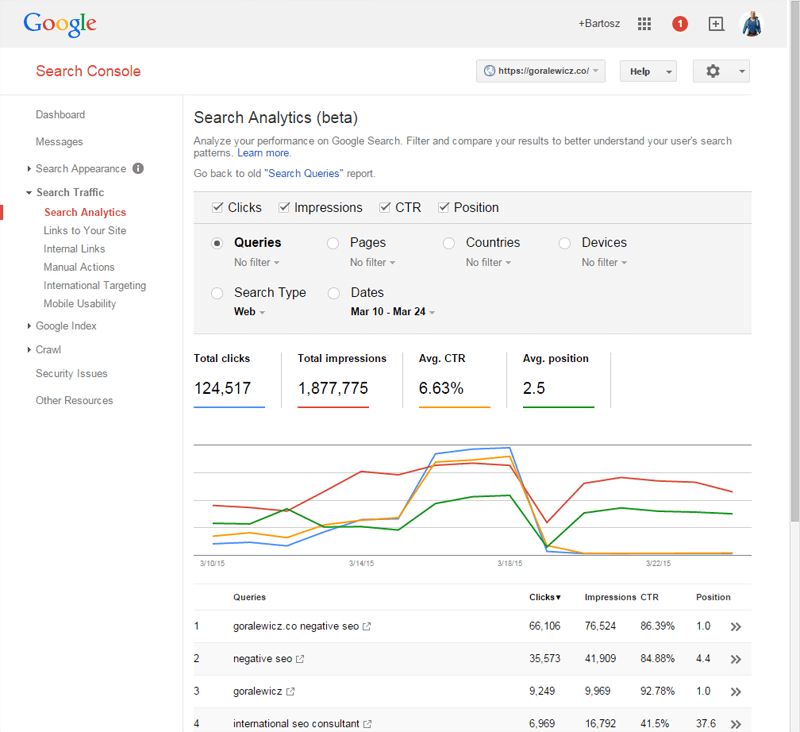

Here’s an overview of the clicks and impressions for these keywords, as recorded by Google Search Console:

I won’t go over every single keyword targeted, but let me review the results of the most important one: “negative SEO.” (To see data for all keywords, check out the full results on my blog.)

Negative SEO

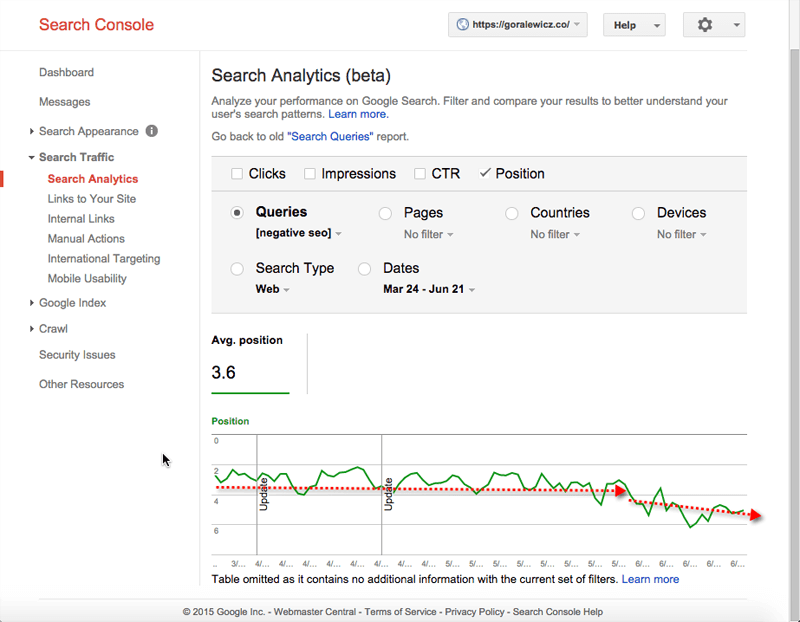

As mentioned before, my blog post had been ranking between #2 and #4 in Google for the keyword “negative SEO” for several months following its publication on December 4, 2014.

The following sections detail how my experiment affected:

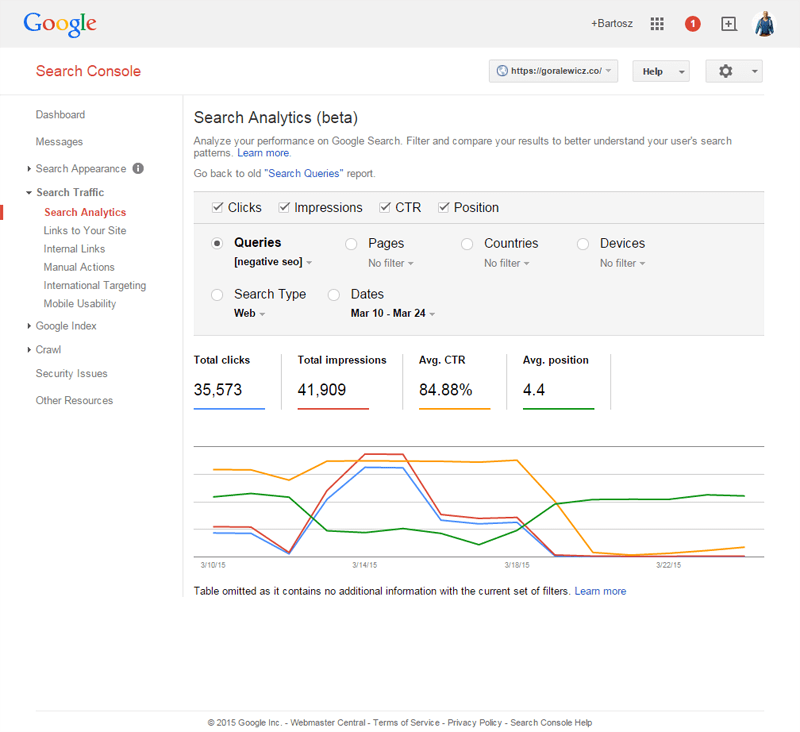

- Clicks, impressions, click-through rate and average position of my website for the term “negative SEO” (per Google Search Console)

- Google Trends for “negative SEO”

- Monthly keyword data for “negative SEO” in the Google AdWords Keyword Planner

- My website’s search engine rankings for “negative SEO” (per SEMrush)

Google Search Console

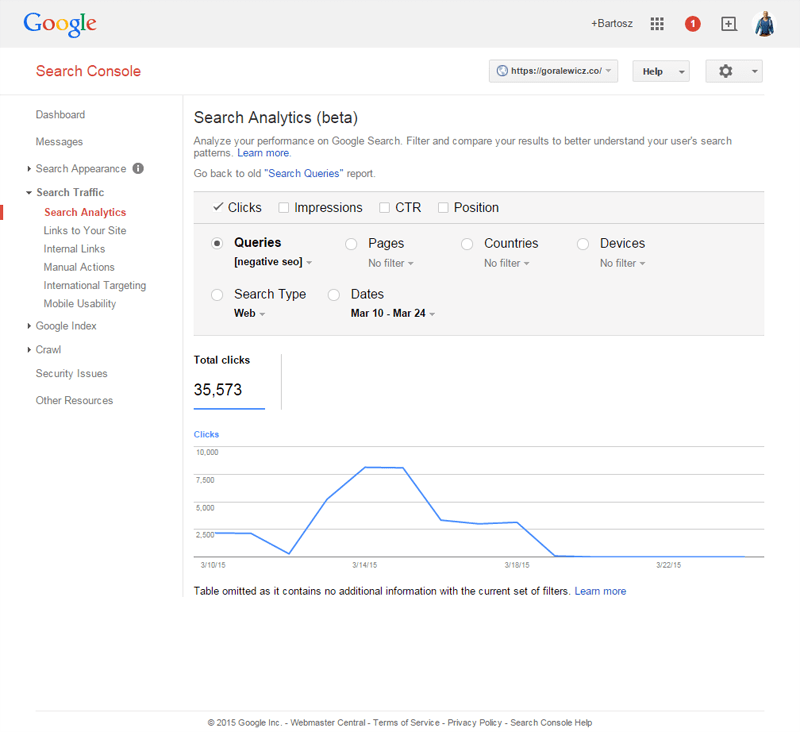

Here are the clicks recorded for this keyword in Google Search Console:

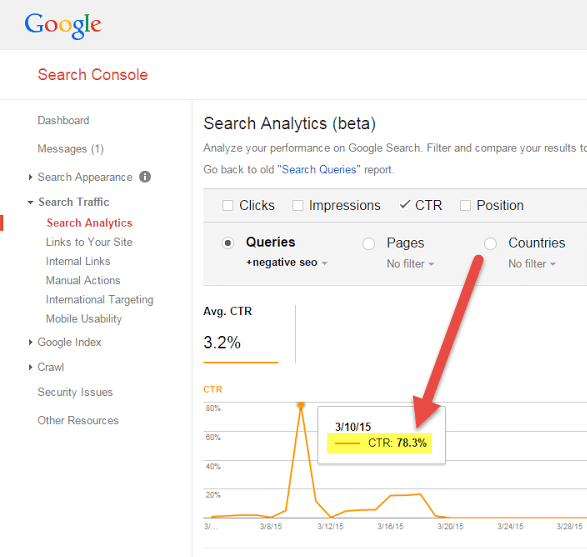

Here is the click-through rate (CTR):

Here is my average position in search results for this keyword:

And here is the combined view:

Google Trends

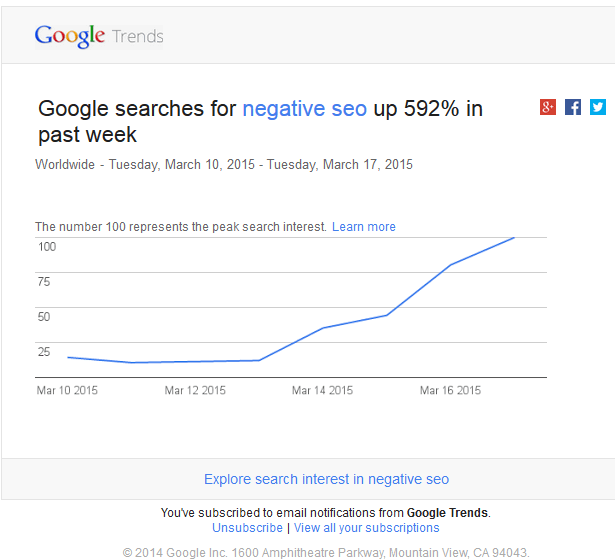

It all started with the email from Google:

In March 2015, “negative SEO” had become a Google Trend.

This helped me to verify that my clicks were not being filtered out by Google.

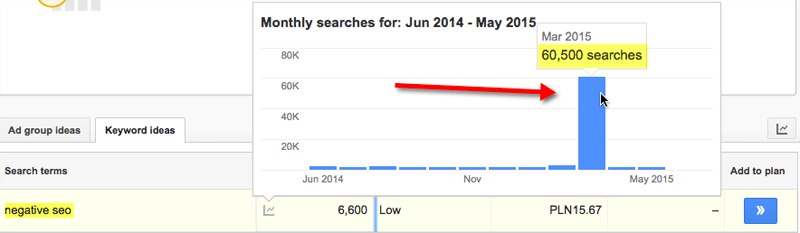

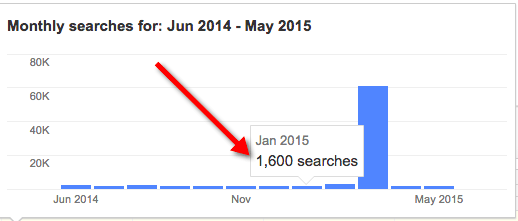

Google AdWords Keyword Planner

To verify further that my clicks were being recorded and not filtered out as spam, I checked the Google AdWords Keyword Planner.

As you can see, the spike in traffic for March 2015 is huge.

Compared with an average of 1,600 searches monthly, I managed to increase traffic for this keyword by 3781%.
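
To put that percentage in absolute terms, here is a small worked calculation. It assumes the 3781% figure is a relative increase over the 1,600 monthly average; the resulting volume is implied by that assumption and is not a number reported in the Keyword Planner data above. If the figure instead meant 3781% of the baseline, the implied volume would be 60,496. The function names are illustrative.

```python
def volume_after_increase(baseline: float, increase_pct: float) -> float:
    """Apply a relative increase: baseline * (100 + pct) / 100."""
    return baseline * (100 + increase_pct) / 100


def percent_increase(baseline: float, new_value: float) -> float:
    """Relative change from baseline to new_value, as a percentage."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return 100 * (new_value - baseline) / baseline


# 1,600 average monthly searches, increased by 3781% (both from the text).
implied = volume_after_increase(1600, 3781)
print(f"Implied March volume: {implied:,.0f}")  # Implied March volume: 62,096
print(f"Check: {percent_increase(1600, implied):.0f}%")  # Check: 3781%
```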

Rankings Before The Experiment

As measured by SEMrush, here are my rankings before the experiment…

…and here are my rankings at the conclusion of the experiment.

Results

After the experiment, my search rankings remained flat for two months, only to start plummeting around June 1, 2015.

Reaching The Goals

As you can imagine based on the introduction to this article, there were no ranking changes. All the other goals of this experiment were fully met, though.

- I managed to create super-heavy organic traffic that wasn’t filtered by the Google algorithm.

- Traffic reached up to 21,000 Google organic visits per day.

- I managed to increase my CTR.

- I created a (fake) Google Trend.

- I managed to affect the very core of Google’s traffic data, as reported by the Google AdWords Keyword Planner.

- I completely failed to increase my rankings for any of the keywords selected for this experiment.

Summary

More and more data seem to support this experiment’s conclusion that click-through rate does not impact search engine rankings. In fact, at SMX Advanced, Gary Illyes from Google confirmed that “Google uses clicks made in the search results in two different ways — for evaluation and for experimentation — but not for ranking.”

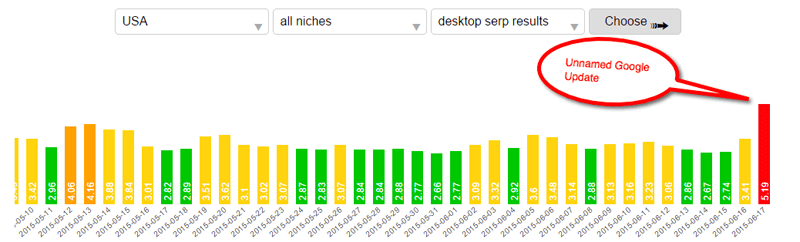

If you think about it, it clearly fits into Google’s policy of “fixing” ranking factors that are easy to manipulate. This also goes hand-in-hand with recent heavy SERP fluctuations that still remain unnamed.

Source: serp.watch

I believe it is a good move and the only move that Google could have made. With the spike in CTR software and services that either help websites rank or attack competitors, CTR had become too much of a vulnerability for Google.

I hope this SEO experiment will help you in understanding CTR and its influence on rankings a little bit better. Hopefully, it will also help all webmasters and SEOs in making better choices.

Interested in seeing the full results for all keywords tested in this experiment? They are available on my blog.

Opinions expressed in this article are those of the guest author and not necessarily Search Engine Land.
