Google Running Feedback Experiment That’s Similar To Human Quality Rater Test

Google has long asked searchers to provide feedback on the quality of its search results, and often runs a number of tests aimed at encouraging such feedback. The latest such experiment, which seems to have been live for at least a month or so, is a bit different because it asks searchers for feedback only […]

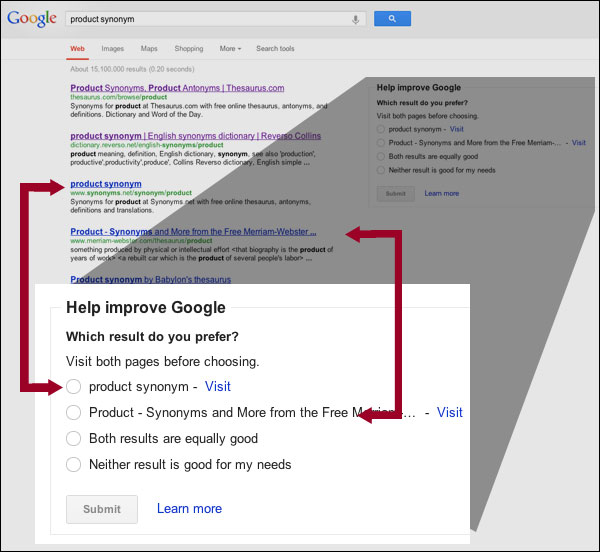

Eli Schwartz recently shared with us a screenshot after doing a search for [product synonym]. As the screenshot shows, Google is using the open space on the right of the results page to ask “Which result do you prefer?”

What’s most interesting is that Google isn’t asking for general feedback on the search results page as a whole; it’s specifically pulling out two of the results — and not the top two. In this case, Google is specifying the third and fourth links and asking the searcher to “visit both pages before choosing.”

That’s similar to the “side-by-side” tasks that Google’s army of human search quality raters often perform — a type of task that you can learn more about in these articles:

- Interview With A Google Search Quality Rater

- Google Search Quality Raters Instructions Gain New “Page Quality” Guidelines

In that second article, you’ll find a screenshot of a “basic” side-by-side task that also asks the evaluator to view two specific pages and choose the better result.

The Street recently saw this same feedback form, and pointed out that the “Learn more” link at the bottom leads to a Google help page that says user feedback “will not directly influence the ranking of any single page,” and explains how the data is used in conjunction with its “professional search evaluators”:

In a typical year, we experiment with tens of thousands of possible changes. These changes, whether minor or major, are tested extensively by professional search evaluators, who look at results and give us feedback, and “live traffic experiments” where we turn on a change for a portion of users. Testing helps us whittle down our list of changes to about 500 improvements per year.

Earlier this summer, Google was running a similar, but less specific, feedback test that asked searchers “How Satisfied Are You With These Results?” That survey asked for feedback on the full search results page.

Opinions expressed in this article are those of the guest author and not necessarily Search Engine Land. Staff authors are listed here.
