Patent 2 of 2: How Google learns to guide purchasing decisions

In a follow-up to his last article, columnist Dave Davies explores a Google patent on guided purchasing, which describes how smartphone behaviors and user data could be used to predict and direct purchase behavior.

In my last article, I explored a patent which focused on how Google learns to influence and control users. This patent described a system to adjust and correct users when the system determines that said user is making a mistake. In this system, we saw Google influence users and suggest alternatives when it deemed a user was acting in a way that would prevent them from performing a desired action in the future.

In this article, we’re going to build on this with an analysis of a second patent, “Guided Purchasing Via Smartphone,” which was granted on March 16, 2017. From there, I’ll discuss how these two patents work together to predict significant changes ahead — and some amazing opportunities for paid search marketers.

As always, I want to remind readers that filing or being granted a patent does not necessarily mean that Google will be implementing all or even any of the techniques and technologies discussed. However, as I mentioned in the last article, we can already see elements of these two patents in use, and that’s a good indication that Google is moving in this direction.

So, let’s begin with our analysis of the patent, “Guided Purchasing via Smartphone.”

Abstract

In the abstract, we find the core idea being patented: a system that is built to understand a user’s intent to purchase a product based on that user’s smartphone behavior. The idea is that when purchasing a given product, there is an expected sequence of tasks that must be completed, and the system can figure out where a user is in that sequence, based on their behavior. The patent further describes notifying a user and guiding them to the next step in the sequence towards the completion of the purchase.

Technical Field

Technical Field (Section 1) notes that the smaller user interface (presumably compared to a PC) and the more fragmented engagements we have on smartphones are the driving forces of the patent. Essentially, these two issues are what need to be addressed to improve purchasing on smartphones.

Background

The challenge outlined in the patent is caused by a shift from the predictable online sessions of the desktop environment being “supplemented, if not replaced, by fragmented interactions using smaller interfaces existing on smartphones and smaller tablet computing devices.”

In other words, widespread smartphone adoption has changed online behavior, as users have shifted from single long sessions into what Google refers to as “micro moments.” From checking the time to chatting with friends, use of a smartphone — and even a smartphone transaction — may take place in small fragments of time over multiple sessions.

Summary

The summary has multiple key sections that I’ll be looking at one by one:

Section 5

Section 5 again describes the concept of predicting a smartphone user’s intent to purchase a product, based on an expected sequence of events that tends to lead to its purchase. The system could learn to determine the user’s current position in this sequence and notify him or her of the next step. We’ll get further into how this works (and exactly what it means) as well as why PPC managers can get ready to start salivating.

Section 7

In Section 7, we read about advertising bids based on where the user is in their journey towards a purchase — that is, how far along the expected purchasing path are they, where are they specifically and what is the next expected step? This idea is exciting unto itself (basing ad targeting on where a user is in a sequence of tasks expected to end with a conversion), but I’m going to ask you to stick with me here — while this is an interesting idea, we’re just getting warmed up!

Detailed description of the example embodiments

Section 19

While Section 19 is mainly a repeat of what we read in the technical field section, there’s one thing that’s very important to catch. The patent is about guided purchasing via smartphone; however, there is zero mention of organic. Section 19 makes it clear that the purpose of the guiding is to drive ad opportunities, not organic search clicks.

Section 31

Previously, we’ve read that the user’s intent to purchase a product was determined from a series of actions they had taken. In Section 31, we see that these actions aren’t simply related to queries in search but could also include their interests on social media pages:

In some embodiments of the present technology, a user’s intent can be determined in other fashions, for example … by the guided purchasing server … querying the user, via the user’s smartphone …, after observing the user’s interest in social media pages featuring a product[.]

Presumably, this could be applied to additional smartphone actions as well, such as text messages; however, text isn’t mentioned in Section 31.

Section 32

This section mentions the use of machine learning and provides a better idea of how this system works. A system is developed by Google to analyze the sequence of tasks taken prior to making a purchase of a specific item across the population as a whole. This data is then used to develop an optimal order that these tasks should be completed in, and the user is then guided down this path.

We’ll read about how exactly that happens shortly. What’s important here is that the data is collected, and an optimal path is determined for the user to make a purchase. The path is optimized and may potentially aid the user in making better decisions for their needs.
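To make the idea a bit more concrete, here is a minimal sketch of deriving a "typical" task order from what many purchasers did. Everything in it is an assumption for illustration: the task names, the sample data and the use of a simple frequency count as a stand-in for whatever machine learning the patent has in mind.

```python
from collections import Counter

# Hypothetical task sequences observed before camera purchases (made-up data).
observed_sequences = [
    ("set budget", "choose type", "choose features", "choose merchant"),
    ("set budget", "choose type", "choose features", "choose merchant"),
    ("choose type", "set budget", "choose features", "choose merchant"),
]


def most_common_sequence(sequences):
    """Return the task order seen most often across purchasers.

    A frequency count is only a stand-in here; the patent does not
    specify how the optimal order is actually learned.
    """
    counts = Counter(sequences)
    sequence, _ = counts.most_common(1)[0]
    return list(sequence)


recommended = most_common_sequence(observed_sequences)
print(recommended)
```

A real system would presumably weigh conversion outcomes rather than raw frequency, but the shape of the input (many observed paths) and the output (one recommended path) would be similar.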

Section 36

One of the tasks in the sequence outlined by Google may be a user establishing a budget for the product they intend to purchase. In that event, the other tasks associated with the purchasing process may be adjusted:

The purchase budget can then be used to determine one task sequence, from among a plurality of task sequences based on the user’s response to the budget query.

Presumably, this would be to avoid asking the user questions when one or more answers may result only in products that exceed the user’s budget.
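As a rough sketch of what "one task sequence, from among a plurality" could look like, the snippet below picks a sequence based on the user's stated budget. The budget ceilings and task lists are hypothetical; the patent only says that the budget response drives the choice.

```python
# Hypothetical task sequences keyed by budget ceiling (all values are assumptions).
TASK_SEQUENCES = [
    (300, ["choose type", "choose merchant"]),
    (1000, ["choose type", "choose features", "choose merchant"]),
    (float("inf"), ["choose type", "choose features",
                    "review accessories", "choose merchant"]),
]


def sequence_for_budget(budget):
    """Return the first task sequence whose budget ceiling covers the budget."""
    if budget < 0:
        raise ValueError("budget must be non-negative")
    for ceiling, tasks in TASK_SEQUENCES:
        if budget <= ceiling:
            return tasks


print(sequence_for_budget(500))
```

In this sketch, a user with a small budget is never asked about accessories, which matches the idea of not raising options the user can't afford.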

There are a couple of interesting things here. The first is that Google is definitely taking the “bird in hand” approach. If the user doesn’t have a budget of $500 for a camera, let’s not show it to her because then she might decide to save up and not click on a paid ad at all (I’m guessing at this, but it seems likely to me).

As we saw with the previous patent we looked at, this may also be to reduce stress on the user. Asking questions or showing people options they can’t have is stressful and can be disheartening. Establishing the budget to avoid not just displaying products that aren’t applicable but crafting the experience so the user never encounters something they can’t have would make the process more enjoyable.

An additional angle I have to consider here as well, also related to the previous patent we looked at, is the notion of making recommendations and adjustments without the user’s knowledge. I would not be surprised if the technique were extended to monitor past purchase behavior to determine a likely budget shopping pattern and go from there.

Section 37

This section adds to the system the ability to recognize when there is a deadline for the intended purchase, determining which tasks need to be completed in what timelines for the deadline to be met, and notifying the user at these various stages.

One can think of applications for this (such as a sale), but what I find interesting here, too, is the combining of the deadline idea in this patent with the “correcting potential errors” discussed in the last article.

For example, consider the scenario of needing to purchase some new shoes for a wedding. A user may start looking months in advance, but if he doesn’t take steps towards making a purchase as the day approaches, it is logical that the systems would combine to help him avoid error (i.e., failing to buy the shoes). This could take the form of smartphone notifications alerting the user that to receive the item in time, he’ll need to proceed down the conversion funnel.
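The deadline logic can be sketched by working backwards from the event date. The wedding date, the task names and the number of days each task needs are all assumptions I've made up for illustration; the patent does not give figures.

```python
from datetime import date, timedelta


def notification_schedule(deadline, tasks):
    """Work backwards from the deadline.

    tasks is an ordered list of (task_name, days_needed). The result lists,
    in task order, the latest date each task should be started.
    """
    schedule = []
    due = deadline
    for name, days_needed in reversed(tasks):
        start_by = due - timedelta(days=days_needed)
        schedule.append((name, start_by))
        due = start_by  # the previous task must finish before this one starts
    schedule.reverse()
    return schedule


wedding = date(2017, 6, 10)  # hypothetical deadline
shoe_tasks = [
    ("choose shoe style", 3),  # hypothetical durations in days
    ("choose merchant", 1),
    ("order and ship", 5),
]
schedule = notification_schedule(wedding, shoe_tasks)
for name, start_by in schedule:
    print(f"Notify user to start '{name}' by {start_by}")
```

Each "start by" date is a natural trigger for the kind of smartphone notification described above.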

Now it gets exciting

I promised earlier that PPC managers could start salivating. Well here’s where that promise plays out.

Section 44

This section uses a camera as an example of a product that a user intends to buy. In this hypothetical scenario, the system “determines that the user has yet to complete the ‘choose camera features’ task, the ‘review camera accessories’ task, and the ‘choose a merchant’ tasks,” which are all part of the sequence of actions leading to a camera purchase.

The data that needs collecting then is the features the user wants, so the system will prompt the user to “choose camera features.” Rather than simply displaying all digital cameras, Google wants to act as a filter.
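Finding the user's place in the sequence is simple to sketch. The last three task names below come from the patent's camera example; the first one, and the idea of tracking completed tasks in a set, are my own assumptions.

```python
CAMERA_TASKS = [
    "choose camera type",         # hypothetical earlier step
    "choose camera features",     # named in the patent example
    "review camera accessories",  # named in the patent example
    "choose a merchant",          # named in the patent example
]


def next_task(sequence, completed):
    """Return the first task in the sequence that is not yet completed."""
    for task in sequence:
        if task not in completed:
            return task
    return None  # every task is done


print(next_task(CAMERA_TASKS, {"choose camera type"}))
```

With only the camera type chosen, the sketch returns "choose camera features," which is the prompt the patent describes.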

Section 45

In Section 45, we continue the hypothetical camera example and see that two cameras (Mod1 and Mod2) are selected as appropriate for the user based on the feature set (A) that he or she is looking for. On top of this, the system has also selected merchants (MerchX and MerchY) who carry the Mod1 and Mod2 cameras with feature set A.

IMPORTANT: It’s important to know, before moving forward, that the referenced “feature set A” may represent a single feature or a grouping of features. For example, it may represent the single feature “50 inch” for a product “white board” where the only filterable feature is size. On the other hand, in my own quest for a motherboard, a feature set may include a variety of factors such as socket type, CPU support, chipset, onboard video, max memory, memory support and more. A variety of feature sets would exist for a product like a motherboard on Google’s end, and this information would be used to determine the product displayed, the merchants selected and — here’s where it gets interesting — the bid amount charged. (More on this shortly, as this is probably the most exciting aspect of this patent.)

Section 46

This section outlines how the merchant’s sales portal will work in conjunction with Google’s own systems to help the user complete the purchase. Worth noting is that, in this series of events, the user does not leave Google — the e-commerce system simply works with (or perhaps through) Google to complete the transaction.

Section 48

The one or more computing devices can select one or more advertising bids based on the smartphone user’s intent to purchase the advertised product, the determined sequence of tasks for purchasing the advertised product, and the smartphone user’s task state …

It gets kind of interesting here as we see discussion of the bids being based on the user’s perceived intent (as determined by their position in the sequence of tasks completed towards making a purchase). So to be clear, implied in this section is that bids will be determined based on what one is willing to pay at various stages in the purchasing process.

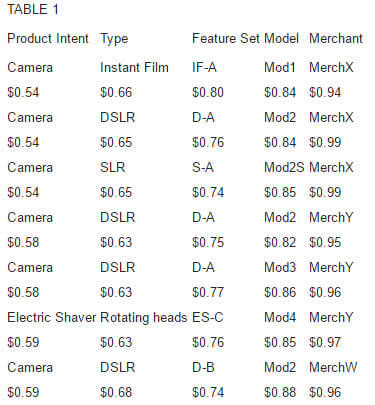

Before we go any further, I’m going to include a table right from the patent, which will apply to the next few sections:

The table above represents bids across three different advertisers (MerchX, MerchY and MerchW). For each new piece of data Google collects along a user’s path to purchase — desired product, product type, product features, model and so on — the user’s intent becomes better defined, and advertisers are able to bid different amounts at each new stage in the sequence.

Section 52

For example, let’s say the only data available to the system is simply that the user intends to purchase a camera (see the “Product Intent” column in Table 1 above). Similar to current bid strategies, the system selects the highest bids based on that information. The system therefore displays one ad from MerchW and one ad from MerchY, as they are the two highest bids for the only relevant piece of information we have (“Camera”) at $0.59 and $0.58, respectively.

The system itself then provides additional information to aid the user in understanding different camera types (see the “Type” column in Table 1), perhaps giving organic some of the non-commercial traffic at least. In this example, the camera types include Instant Film, DSLR and SLR. From there, the user refines their purchase intent by selecting a camera type.

Section 53

Having now selected a camera type of “DSLR,” the user is then prompted to select features (“Feature Set” in Table 1). Google will guide the user through a series of questions and provide information to enable the user to make their decisions on the features that are important to them.

During this stage of the process (i.e., while features are being selected), the user will now see ads from MerchX ($0.65) and MerchW ($0.68), as they are matching the current criteria (DSLR cameras) and have the highest bids for the combined Product Intent and Type.

By the end of the stage outlined in Section 53, the user will have selected the full scope of their required features, which brings us to…

Section 54

In the next step, the user is now selecting which model of camera he or she would like from those available that meet the criteria established (camera type, budget, features and so on). You can see in Table 1 that there are a variety of feature sets represented. Each of these sets is meant to represent certain criteria in the camera (such as display size, weight and zoom capabilities).

In this case, the user’s desired features are represented by Feature Set D-A. As such, one ad each from MerchY ($0.77) and MerchX ($0.76) will be displayed, as they are the highest bidding merchants with cameras matching the desired Product Intent, Type and Feature Set.

Digging deeper, Google recognizes that there are two models of DSLR camera that match the desired feature set, Mod2 and Mod3, each of which has specific bids associated with it. In Section 54, the system carries the clarification stage further, providing the user with links to information on these two camera models so the user can choose specifically which one they would like (Mod2 in this example).

Section 55

With the specific camera now selected, the user is given the choice of merchants to purchase from. Based on the previous decisions made by the user, the system now looks for the merchants who carry the Mod2 DSLR camera with feature set D-A and asks the user to select from those available. During this stage, the system only displays a single advertisement, which is that from MerchX, as they are the highest bidder on Mod2 DSLR cameras with feature set D-A.

However, the user is also presented with links containing information on different merchants that sell the correct product (MerchX and MerchY) so that he or she can select the merchant they prefer.
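The stage-by-stage ad selection walked through in Sections 52 to 54 can be sketched as a lookup keyed by what the system knows about the user so far. The bid amounts are the ones quoted above; the rest of Table 1 is not reproduced here, and the model-level stage is left out because its bid amounts aren't quoted in this article. The data structure and function name are my own assumptions.

```python
# Bids keyed by the user's known state. Only amounts quoted in the article
# are included; the remaining cells of Table 1 are intentionally omitted.
BIDS = {
    ("Camera",): {"MerchW": 0.59, "MerchY": 0.58},
    ("Camera", "DSLR"): {"MerchX": 0.65, "MerchW": 0.68},
    ("Camera", "DSLR", "D-A"): {"MerchY": 0.77, "MerchX": 0.76},
}


def select_ads(user_state, slots=2):
    """Return up to `slots` (merchant, bid) pairs, highest bid first,
    for the stage that exactly matches the user's known state."""
    stage_bids = BIDS.get(tuple(user_state), {})
    ranked = sorted(stage_bids.items(), key=lambda item: item[1], reverse=True)
    return ranked[:slots]


print(select_ads(["Camera"]))
print(select_ads(["Camera", "DSLR"]))
print(select_ads(["Camera", "DSLR", "D-A"]))
```

The point of the sketch is that the same user triggers a different pair of advertisers at each stage, simply because the lookup key gets more specific as the user answers more questions.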

Section 57

Here, we see post-purchase advertising now being displayed based on previous purchase behavior. In this example, the advertisement being sent to the user is for a nearby photography school due to their purchase of the camera previously. This is interesting enough, but Section 58 really adds some punch.

Section 58

Section 58 starts off pretty dry, simply noting that advertisers can be charged based on user engagement with an ad and the frequency at which it is displayed. Fairly predictable, but what’s interesting is the part that reads as follows:

[A]dvertisers can bid to add tasks to a determined task sequence, and the guided purchasing server … can conduct an auction awarding added tasks to one or more advertisers.

What this means is that the task sequence towards a purchase will be made available to advertisers, and advertisers will have the opportunity to bid to add tasks to the sequence. Some application of this would be fairly straightforward, such as adding in a task related to the weight of the camera if that was not part of the initial sequence (useful if you sell a particularly light camera). But it could also be extended and used to disrupt the natural leaders in a space if a new product is available with unique features.

Let’s imagine for a second you have just manufactured the first camera to connect to a holodeck (yes — I’m that kind of nerd). Adding the question, “Would you like your camera to connect to a holodeck?” into the sequence would dramatically change the feature sets applicable and basically give you the sale. This is one of the more interesting aspects of this patent.
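Here is one way the "bid to add a task" idea might look in code. To be clear, the patent does not describe the auction mechanics, so the highest-bid-wins rule, the advertiser names, the bid amounts and the insertion point are all assumptions on my part.

```python
def award_added_tasks(task_bids, slots=1):
    """Pick the winning added tasks by highest bid.

    task_bids is a list of (advertiser, task_name, bid) tuples.
    """
    ranked = sorted(task_bids, key=lambda entry: entry[2], reverse=True)
    return ranked[:slots]


def insert_tasks(sequence, winners, before="choose a merchant"):
    """Return a new sequence with the winning tasks placed before `before`."""
    index = sequence.index(before)
    added = [task for _, task, _ in winners]
    return sequence[:index] + added + sequence[index:]


base_sequence = [
    "choose camera features",
    "review camera accessories",
    "choose a merchant",
]
task_bids = [  # hypothetical advertisers and bids
    ("HoloCam Inc.", "choose holodeck connectivity", 1.20),
    ("LightCam Co.", "choose camera weight", 0.90),
]
winners = award_added_tasks(task_bids, slots=1)
new_sequence = insert_tasks(base_sequence, winners)
print(new_sequence)
```

In this sketch, the holodeck question wins the single available slot and is asked just before the user chooses a merchant, which is where it would do the most to narrow the field.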

So, what does this tell us about paid search?

This patent is full of ideas that I would view as highly likely to be implemented, and while the patent was written to focus on smartphones, the growth in voice-first devices and the push to get Google Assistant into more phones can carry the idea even further into a world where the questions and answers guide the user down a purchase path without any visual display at all.

I mentioned above that we’ll be looking at how the two patents discussed in this series tie together, but before we do that, let’s take a moment to quickly summarize what the system outlined in this patent does for the paid search manager:

- It provides an environment where a user is guided down a purchase path through a series of short, fragmented interactions (“micro-moments”) that occur while the standard smartphone user is on their device.

- It provides an environment where ads can be triggered based on social media engagement and other data associated with the device, such as location (Did the user enter a specific type of store?), interactions with apps or previous purchases.

- It provides an environment where machine learning and/or AI is used to create likely paths and task sequences associated with a conversion and assign a likelihood to a user’s behavior given where they are in this sequence.

- It provides PPC managers the ability to bid and advertise differently at different stages in the buying cycle. A different ad and bid can be used early in the buying cycle than is used further along when the consumer has gotten more specific in their needs and budget.

- It provides PPC managers with the ability to inject tasks into the conversion path sequence that may serve to offer better information/ads or provide a scenario where a unique feature may restrict some of the other advertisers.

In my opinion, the biggest features discussed involve the ability to customize ads and bids on specific features and position in the conversion path, and the ability to bid to add tasks to the sequence that may help adjust the conversion path in your favor.

How do the two patents relate?

At the beginning of the first article in this two-part series, I promised that the two patents would tie together. Some of the overlap has already been specified above. For example, we’ve discussed the deadlines referenced in the patent we’ve been covering today and how they may be used to help users avoid errors, as was covered in the last article.

But the ties run deeper. If you’ve read both articles, you’ve suffered through thousands of words — so rather than give a technical analysis of how the systems tie together, I will instead illustrate it using a basic, hypothetical interaction between a user and their smartphone (indicating which patent is being utilized where). I will be giving a voice-based example, as it’s more colorful; however, one can easily imagine the same interaction taking place via notifications and manual input.

User: OK Google, navigate to Billy’s Dry Cleaning.

Google: Will this be to have your suit cleaned for the wedding you’re flying to tomorrow afternoon? (Error correcting patent)

User: Yes.

Google: Billy’s Dry Cleaning is closed tomorrow, and you would not be able to pick it up. Would you like me to find a dry cleaner close by that is open tomorrow and has next-day pickup? (Error correcting patent)

User: Yes.

Google: Willy’s Dry Cleaning is one block away and is open tomorrow and has next-day pickup. Would you like me to set your navigation there? (Both patents: error correcting in offering suggestions to meet future event needs and the guided purchasing patent in providing an advertisement for product “dry cleaning” with the feature set “open Sunday” and “next-day service.”)

[The user drops off dry cleaning and gets back in car.]

User: OK Google, where can I get a laptop?

Google: Is the laptop a wedding gift? (Error correcting patent)

User: No, it’s for me.

Google: How much would you like to spend on your laptop? (Guided purchasing patent)

User: Under $1,000.

Google: Is this for work or for pleasure? (Guided purchasing patent)

User: Work.

Google: Do you need it to have Microsoft Office? (Guided purchasing patent)

User: Yes.

Google: Do you know the specification you would like it to have or would you prefer to answer some questions about your use? (Guided purchasing)

User: I’ll give the specifications.

Google: OK. Let’s begin with the processor. Would you prefer AMD or Intel? (Guided purchasing)

User: Intel.

[We’ll skip the rest of that series of questions and jump to the last in that set; however, each of these relates to the guided purchasing patent.]

Google: Do you need this by a specific date? (Guided purchasing patent)

User: I would like to get it today to have for my trip.

Google: If you would like to pick it up locally, there are two stores near you that carry the Acer XYZ Notebook, which meets or exceeds all your requirements. You can also order it from Acme Computers Online for next-day delivery to your hotel for $24 less, including the shipping to your hotel. Would you like to pick it up or order it to the hotel? (Guided purchasing with elements from the error correction patent)

User: I’ll pick it up.

Google: Both MerchX and MerchY have that item in stock. MerchX lists the Acer XYZ at $10 less but is 20 minutes farther away. Which merchant would you like to visit? (Guided purchasing with elements of the error correction patent)

User: MerchY.

Google: Setting navigation to MerchY. (Error correction)

As you can see, the patents could easily intertwine, and this is just one simple example. We could also add suggestions to stop for gas on the way to MerchY to avoid having to do so on the way to the airport tomorrow, or include suggestions to set alerts and reminders to prevent the user from missing the flight.

The future is now

I personally view the error correction as occurring presently to certain degrees and on the inevitable list moving forward. It’s a natural evolution in technology, and I don’t believe there’s any debate as to whether the systems described in this patent will impact our daily lives. This brings us to the guided purchasing patent. As mentioned previously, while the patent itself is written for smartphones, I personally view the processes and systems as even more relevant in a voice-first search environment (which includes voice-based interactions with smartphones), and in this area it’s again an inevitable step in the evolution of search and personal assistants.

The only portions of the patents that aren’t inevitable but which I still consider to be highly likely are the sections of the guided purchasing patent that involve adding tasks into the Google-established sequence involved in a purchase. It’s an opportunity to make money for Google that (properly controlled) could provide a solid value for the consumer, so a win on both counts.

As advertisers, one of the areas we need to start thinking about and readying ourselves for is a time when we can bid and craft ads for specific points in a conversion path and when specific conditions are true. You won’t just need to bid on a term like “acer xyz”; you can bid on it only if it’s a recommended product at the end of an established purchase path and when the laptop features sought by a user match those of the device. You won’t be showing up for people just looking up specs or people whose needs aren’t met by the laptop.

If these patents are any indication, it’s going to be a very interesting next couple of years, and if you’re involved in AdWords management, buckle up. It looks like it might start getting even more interesting in the very near future given the pace at which things are moving.

Opinions expressed in this article are those of the guest author and not necessarily Search Engine Land. Staff authors are listed here.
