Review counts matter more to local business revenue than star ratings, according to study

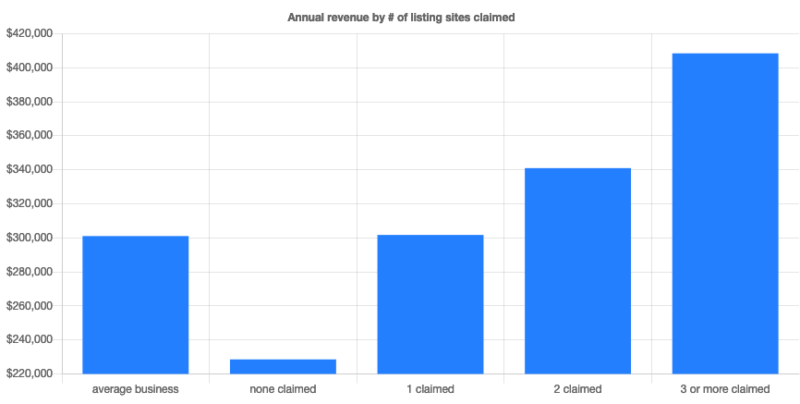

Womply also found businesses claiming their listings on multiple sites generated 58% more revenue than the average.

Reviews matter; that’s well established. What’s not quite as clear is the relationship between reviews, star ratings and revenue, although there have been several academic studies on this. Now, small business SaaS provider Womply has released a large-scale study showing a strong connection between reputation management and revenue across multiple industries.

More than 200K businesses examined. In performing its analysis, Womply looked at reviews and transactions data “for more than 200,000 U.S. small businesses in every state and across dozens of industries, including restaurants, salons, auto shops, medical and dental offices, retailers, and more.” The key difference between this study and others on reviews is local business transaction data. Womply was able to connect review and presence management best practices with revenue outcomes.

In brief, the study found:

- Businesses claiming their listings on multiple sites earn 58% more revenue

- Businesses that respond to reviews average 35% more revenue

- Businesses with ratings of 3.5 to 4.5 stars earn more than those with higher and lower ratings

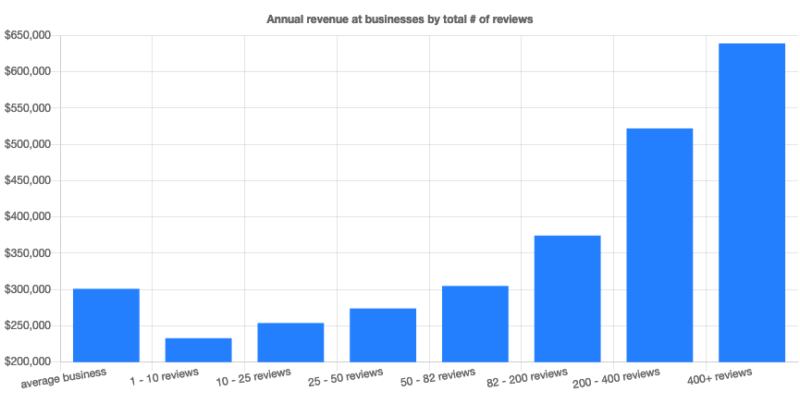

- Businesses with more reviews (than the average) across sites generate 54% more revenue

Claim and respond. Businesses that didn’t claim their listings averaged $72,000 less in annual revenue, according to Womply. Claiming listings on key sites like Google My Business enables consumers to find and engage with businesses more readily. This isn’t news.

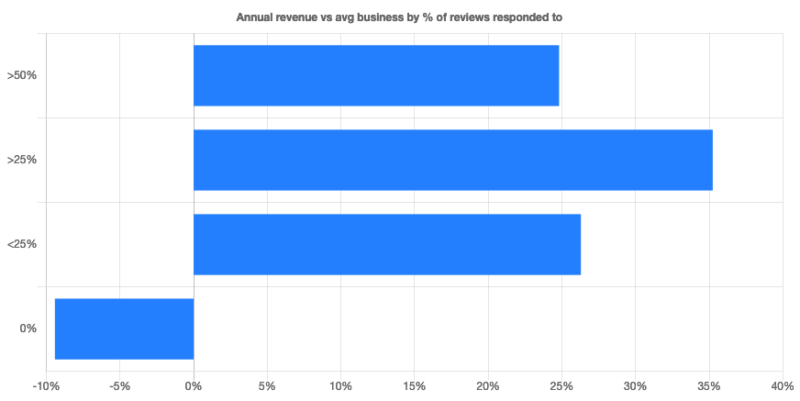

Another “no-surprise” finding is that consumers appear more inclined to buy from businesses that respond to online reviews. This may be because they assume those responding to reviews offer better service. According to the study, 75% of businesses don’t respond to their online reviews. But those that do earn considerably more revenue.

An interesting caveat here appears to be one of diminishing returns. Businesses responding to more than half of their reviews weren’t earning more than those responding to between 25% and 50%. The study doesn’t segment by positive or negative review responses. There may be more nuanced findings here yet to be unpacked.

The optimal ratings range. Womply also discovered an optimal star-rating range. Businesses obviously don’t have control over this. But the company found that businesses in the 3.5 to 4.5 star range had higher average revenue than those below or above, including businesses with 5-star ratings.

Womply offers two reasons to potentially explain the under-performance of 5-star businesses compared with those in the optimal range:

- Five-star businesses tend to have fewer reviews

- Consumers may be more skeptical of 5-star businesses (assuming manipulation)

Review counts trump star ratings. The study also discovered that review counts were more strongly correlated with revenue performance than average star ratings. The company said, “Businesses with more than the average number of reviews bring in 82% more in annual revenue than businesses with review counts below the average.” I suspect, however, that below a minimum star-rating threshold this observation would no longer hold up.

Why we should care. Here’s where one injects the familiar maxim, “correlation doesn’t equal causation.” Businesses that already “get it” are going to outperform those that don’t, in part because they’re probably better run. And these businesses are more likely to pursue and execute local SEO tactics effectively: claiming and populating their profiles on key sites (e.g., GMB, Yelp), responding to reviews, and running a program that generates a steady stream of reviews in an ethical way.

One thing not revealed here is whether these businesses worked with agencies or third-party providers and, if so, what percentage did. Regardless, and despite the fact that much of this is already known in the community, the revenue analysis validates the real-world impact of these best practices.

Opinions expressed in this article are those of the guest author and not necessarily Search Engine Land.
