How Changes To The Way Google Handles Subdomains Impact SEO


At Pubcon last week, Matt Cutts mentioned a change in the way Google handles subdomains. To better understand this change and what this means for search marketers, let’s revisit common site structure choices, how they’re handled by Google, and how that impacts SEO.

Although URLs may take many forms, the overall structure can be distilled into one of three basic types: within a single domain (pages from the root of the domain as well as within subfolders), subdomains, and separate domains.

Generally, a site begins with a single domain. You decide to sell widgets online, so you open a site about them at this domain:

widgets.com

Within that site, you have pages about various types of widgets, like this:

widgets.com/blue.html

widgets.com/red.html

widgets.com/green.html

Indented Results: Host Crowding in Action

Your widget site does well on Google and the home page ranks in the top ten results for a search on “widgets.” You find that your page on blue widgets is quite popular as well, and it’s indented below the listing for the site’s home page. This behavior is called “host crowding,” and Google uses it to ensure diversity in search results. (Google also refers to these as indented results.)

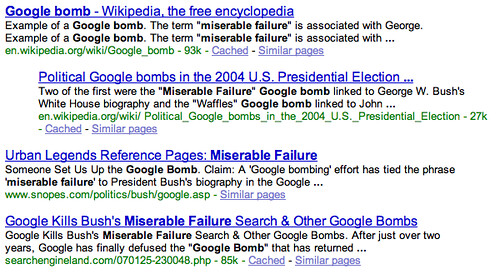

You can see this in action below with the one en.wikipedia.org page indented below the other.

Search marketers would like to see as many results from their pages as possible, but searchers would like to see choices from a variety of domains. Google’s priority is relevant results for the searcher, so when they find multiple pages from one domain that would ordinarily rank highly for the query, they take the top two pages, cluster them together (one indented below the other), and show only those in a set of 10 results.

(There have been a few glitches with this, such as that the second listing isn’t indented when the first includes sitelinks. Also, this clustering happens for each set of 10 results, so although you will see a diverse result set on the first page, you may see a lot of the same types of results once you click to the second page.)
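The clustering described above can be sketched roughly as follows. This is only an illustration of the behavior as described in this article, not Google’s actual implementation: the function names `hostname` and `host_crowd`, the `page_size` and `per_host` parameters, and the choice to push extra listings to the next page are all assumptions made for the example.

```python
from urllib.parse import urlsplit


def hostname(url):
    """Return the host part of a URL, with or without a scheme."""
    return urlsplit(url if "//" in url else "//" + url).hostname


def host_crowd(ranked_urls, page_size=10, per_host=2):
    """Split a ranked list into result pages, showing at most `per_host`
    listings per host on each page (hypothetical model of host crowding).

    Each page is a list of (url, indented) tuples. Additional listings
    from a host are placed directly below its first one and marked indented.
    """
    remaining = list(ranked_urls)
    pages = []
    while remaining:
        page, counts, leftover = [], {}, []
        for i, url in enumerate(remaining):
            if len(page) == page_size:
                # Page is full: everything else waits for a later page.
                leftover.extend(remaining[i:])
                break
            host = hostname(url)
            seen = counts.get(host, 0)
            if seen >= per_host:
                # Over the per-host limit for this page (assumed to carry over).
                leftover.append(url)
                continue
            counts[host] = seen + 1
            if seen == 0:
                page.append((url, False))
            else:
                # Cluster directly beneath the host's earlier listing(s).
                first = next(j for j, (u, _) in enumerate(page)
                             if hostname(u) == host)
                page.insert(first + seen, (url, True))
        pages.append(page)
        remaining = leftover
    return pages


ranked = [
    "widgets.com",
    "other.com/a",
    "widgets.com/blue.html",
    "widgets.com/red.html",
    "third.com",
]
pages = host_crowd(ranked)
```

With this input, the blue widgets page is clustered and indented under the home page on the first page of results, while the red widgets page is held back for the second page, which mirrors the per-page clustering noted above.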

Dominating Results with Domains and Subdomains

Say you decide to start a blog about widgets to share news and information about the widget industry to augment your original e-commerce widget site. How do you structure the blog? Do you place it as part of your original site, or do you place it on a subdomain or separate domain? Three common choices are:

- Existing site: widgets.com/blog/index.html

- Subdomain: blog.widgets.com

- Separate domain: widgetsblog.com
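To make the distinction between the three choices concrete, here is a minimal sketch that labels a URL relative to a main domain by comparing hostnames. The `classify` function and its labels are hypothetical, and the comparison is deliberately naive: it treats www.widgets.com as a subdomain and knows nothing about who actually owns a domain.

```python
from urllib.parse import urlsplit


def hostname(url):
    """Return the host part of a URL, with or without a scheme."""
    return urlsplit(url if "//" in url else "//" + url).hostname


def classify(url, main_domain="widgets.com"):
    """Label a URL as part of the existing site, a subdomain, or a separate domain."""
    host = hostname(url)
    if host == main_domain:
        return "existing site"
    if host.endswith("." + main_domain):
        return "subdomain"
    return "separate domain"


for candidate in ("widgets.com/blog/index.html",
                  "blog.widgets.com",
                  "widgetsblog.com"):
    print(candidate, "->", classify(candidate))
```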

In the past, some search marketers may have avoided the first option, keeping the blog within the existing site, because it offered no opportunity to get more than two listings on the same page. Historically, putting the blog on either a subdomain or a separate domain meant it would be treated as a completely different site, allowing the marketer to potentially pick up a third or even a fourth listing on the page.

Now that’s changing. Google is no longer treating subdomains (blog.widgets.com versus widgets.com) independently, instead attaching some association between them. The ranking algorithms have been tweaked so that pages from multiple subdomains have a much higher relevance bar to clear in order to be shown.

This doesn’t mean that the “two page limit” now applies to a domain and all of its subdomains combined. It’s simply a bit harder than it used to be for multiple subdomains to rank in a set of 10 results. If multiple subdomains are highly relevant for a query, it’s still possible for all of them to rank well.

Matt Cutts talks about this a bit more in his blog and in this interview with me and Mike McDonald on WebProNews. For instance, for navigational queries such as “IBM,” it’s likely that the searcher is looking for pages from the IBM web site. In fact, even with this new change, the first two results are from ibm.com (likely limited to two by host crowding), and three other results in the top ten are from ibm.com subdomains.

Subdomains Lose Their Magic?

Have subdomains lost their ability to help sites increase their representation in a set of search results? That remains to be seen. Subdomains are tricky for search engines because in some cases (for instance, video.webpronews.com and blog.webpronews.com), subdomains are used as important subsections of a main domain (webpronews.com) that’s owned by the same company. In other cases (for instance, lsjumb.blogspot.com and postsecret.blogspot.com), subdomains are websites controlled by completely separate webmasters. They may share the same root domain (blogspot.com), but the blogs themselves are unrelated to each other.

Perhaps someday the search engines will be smart enough to recognize when subdomains are completely separate sites and treat them accordingly, but it’s a tough problem today.

Shift to Separate Domains?

With the restrictions imposed on displaying multiple pages from a single domain or subdomains, it seems like it might make more sense for webmasters to create separate domains for each category. For instance, if you want to rank for “Viagra” (not that anyone I know would), why not create viagra.com, viagravideos.com, and viagrablog.com, so you have more opportunities to rank?

Nice try, but it doesn’t really work that way. For one thing, you’ll run into an issue with PageRank. If you have viagra.com, viagra.com/videos, and viagra.com/blog, then the two subareas of the main domain may get some PageRank boost from links to the viagra.com home page (and over at Yahoo, they’ve said in the past that the “trust” for a particular domain will flow down to other pages in the domain, regardless of linking). But with three separate sites, you need to work three times as hard at getting links. And when link building, it’s hard enough to get a single link, much less three.

In the end, the lost PageRank likely washes out any potential gains in overcoming host crowding and this new subdomain change. Separate domains do make sense for completely different subjects, particularly when the content is different enough that link and traffic building efforts would be aimed at different audiences.

What About Folders & Subdirectories?

As all this has come up, people have been wondering about “folders” or “subdirectories” of a web site. Site owners often like to organize all the pages of a particular type within a subsection of a main domain. For example, in the widget site, all the pages about consumer widgets might be in the consumer area (/consumer), while industrial widgets go in another area (/industrial), with URLs looking like this:

widgets.com/consumer/blue.html

widgets.com/consumer/red.html

widgets.com/consumer/green.html

widgets.com/industrial/big.html

widgets.com/industrial/bigger.html

widgets.com/industrial/biggest.html

Despite this organization, Google still sees pages within folders or subdirectories as part of the main domain. That means they are subject to the host crowding rules covered above. Generally speaking, Google treats widgets.com/blue.html and widgets.com/consumer/blue.html exactly the same way, so the choice about which organization to use depends entirely on what’s easiest for you and your users. Using folders tends to make the site easier for you to manage and easier for visitors to understand.

However, be careful about creating too deep a directory structure. PageRank is thought to flow from the home page into deeper directories, and bots crawl from the home page down. Depending on a number of factors (including the PageRank of the site and how frequently the site is updated), bots may only crawl so deeply into a site’s structure. There’s no hard limit on how deep a site’s directory structure should go, but you likely can’t go wrong with one or two levels.
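If you want a quick check of how deep your own URLs go, a small script along these lines can help. It is only a sketch: `folder_depth` is a hypothetical helper, it assumes the last path segment is a file unless the path ends in a slash, and the two-level threshold simply reflects the rule of thumb above rather than any documented limit.

```python
from urllib.parse import urlsplit


def folder_depth(url):
    """Count the folder levels between the domain and the page itself."""
    path = urlsplit(url if "//" in url else "//" + url).path
    segments = [s for s in path.split("/") if s]
    if path.endswith("/"):
        # A trailing slash means every segment is a folder.
        return len(segments)
    # Otherwise assume the final segment is the page file.
    return max(len(segments) - 1, 0)


urls = [
    "widgets.com/blue.html",
    "widgets.com/consumer/blue.html",
    "widgets.com/a/b/c/page.html",  # made-up deep URL for illustration
]

# Flag anything deeper than the "one or two levels" rule of thumb.
too_deep = [u for u in urls if folder_depth(u) > 2]
print(too_deep)
```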

So, What’s A Webmaster To Do?

For each query that you care most about, make sure that you have one or two pages that you most want to rank for those terms, and focus on optimizing those with keywords, anchor text, and links. Know that not every page on your site will rank for every query, so decide which are the most relevant.

If you have completely separate topics, consider creating separate domains. In most cases, you’ll want to stick with a single domain, but there are times when multiple domains make sense. I’m thinking of starting a social media blog, and I’ll likely start it as its own domain rather than within my existing domain or as a subdomain of it.

Use subdomains when you have very disparate content that you feel searchers would find relevant. For instance, videos are very different from articles, so it may make sense to separate those into subdomains. If you have a travel site, it may make sense to use subdomains to categorize cities. Someone searching for a particular resort may want to see the various locations where that resort operates.

In particular, look at the use of a single domain versus subdomains versus separate domains from a business perspective. What makes the most sense for your users? If you have completely different business units, they may appear more credible as individual subdomains.

As for going with separate domains, that’s another leap that might not be necessary. Matt says the change in how subdomains are treated hasn’t been that huge. It’s been live for several weeks, and no one’s noticed. This change doesn’t prevent multiple subdomains from ranking for a query. It simply makes doing so a bit harder, in order to ensure a relevant, quality result for the searcher.

Since the change has been in place for a while, take a look at your search traffic from the previous few weeks to see if there have been any reductions. It’s likely most sites haven’t experienced traffic dips. If yours has, check whether having fewer subdomain pages ranking is the reason. If it is, the best thing to do is probably to ensure that the pages that do rank have compelling snippets in the search results.

Contributing authors are invited to create content for Search Engine Land and are chosen for their expertise and contribution to the search community. Our contributors work under the oversight of the editorial staff and contributions are checked for quality and relevance to our readers. The opinions they express are their own.
