Bing Now Supports Google’s Crawlable AJAX Standard?

In 2009, Google proposed a standard for crawlable AJAX. A few months later, that standard went live, and while I thought it was great that Google was providing options to content owners to ensure their sites were indexed well, I noted at the time that “this method doesn’t work for search engines other than Google. So if you care about getting this content indexed by Bing and Yahoo!, you’ll want to explore other methods.” It’s possible that’s now changed.

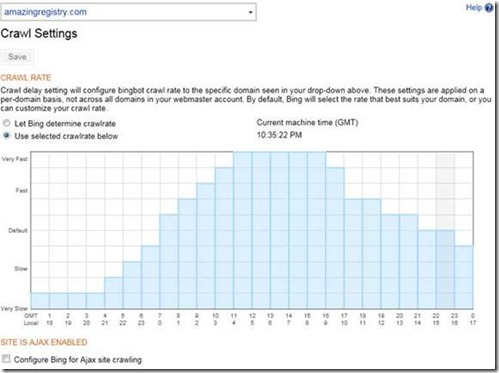

A few weeks ago, as part of a set of updates, Bing Webmaster Center launched crawl settings, which enable site owners to set the crawl rate of BingBot by time of day. (You get to this option by selecting Crawl > Crawl Settings.) You can see in the screenshot provided in their blog post that a checkbox below the crawl rate grid has the heading “Site is AJAX Enabled” and is labeled “Configure Bing for Ajax site crawling”. The only mention in the blog post was that the crawl settings “feature enables dedicated pre-set crawl settings for AJAX websites.” I’m not sure what either of these things was intended to mean. The help documentation isn’t much better, saying “If your website is built in AJAX, you can select the check box labeled Configure Bing for Ajax site crawling to alert us to handle the website properly.”

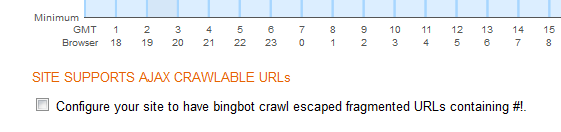

Now, the heading has changed to “Site Supports AJAX Crawlable URLs” and the wording for the checkbox has changed to “Configure your site to have bingbot crawl escaped fragmented URLs containing #!”.

It appears as though this means Bing will crawl #! URLs according to the Google standard. The help information hasn’t been updated, so it’s hard to say for sure.
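For anyone who hasn’t worked with the scheme, here is a minimal sketch in Python of the URL mapping as I understand it from Google’s published proposal: a crawler that sees a #! URL requests an equivalent URL carrying the fragment in an `_escaped_fragment_` query parameter, and the server answers that request with an HTML snapshot. The function names are my own, chosen for illustration, and I can’t confirm that Bing applies exactly the same escaping rules.

```python
def escape_fragment(fragment: str) -> str:
    """Percent-encode the bytes that, as I read Google's scheme, must be escaped:
    control characters and space (0x00-0x20), '#', '%', '&', '+', and 0x7F-0xFF."""
    out = []
    for byte in fragment.encode("utf-8"):
        if byte <= 0x20 or byte in (0x23, 0x25, 0x26, 0x2B) or byte >= 0x7F:
            out.append(f"%{byte:02X}")
        else:
            out.append(chr(byte))
    return "".join(out)


def to_escaped_fragment_url(url: str) -> str:
    """Rewrite a #! URL into the form a crawler would request (illustrative helper)."""
    base, hashbang, fragment = url.partition("#!")
    if not hashbang:
        return url  # no #! in the URL, so there is nothing to rewrite
    # Append to an existing query string if there is one, otherwise start one.
    joiner = "&" if "?" in base else "?"
    return f"{base}{joiner}_escaped_fragment_={escape_fragment(fragment)}"


print(to_escaped_fragment_url("http://example.com/page#!key=value"))
```

Under these assumptions, `http://example.com/page#!key=value` becomes `http://example.com/page?_escaped_fragment_=key=value`, and the site has to be configured to return meaningful HTML for that second URL.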

If Bing is crawling #! AJAX URLs, then implementing AJAX URLs in this way should result in those pages being indexed in Google, Bing, and Yahoo. This implementation requires substantial configuration, as I noted in my earlier article, and there are many other reasons why progressive enhancement-based options may be better choices. But if all that was keeping you from #! URLs was the lack of Bing (and thus Yahoo) support, then that obstacle may be gone. I’ll see what I can find out from Microsoft and report back.

Related:

Google May Be Crawling AJAX Now – How To Best Take Advantage Of It

Google Offers a Proposal To Make AJAX Crawlable

It’s Official: Google’s Proposal For Crawling AJAX URLs is Live

Google I/O: New Advances in the Searchability of JavaScript and Flash, But Is It Enough?

Opinions expressed in this article are those of the guest author and not necessarily Search Engine Land. Staff authors are listed here.
