Liveblogging Google’s Web Search “Evolution” Event

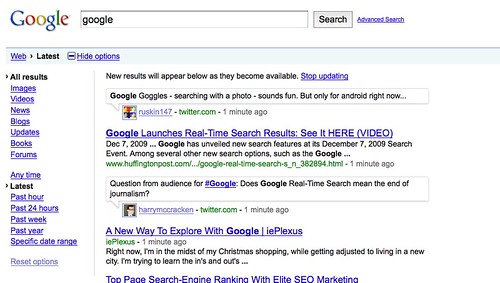

Live from the Computer History Museum in Mountain View, I’ll be liveblogging what Google has dubbed “an inside look at the evolution of Google search.” As it turns out, it’s the unveiling of Google’s new Real Time Search, shown above, as well as search by voice and search by picture improvements for mobile.

Real time search is slowly coming in and will roll out over the next few days. If you don’t see it, try this link. Google’s official post is here. Below is the liveblogging as it unfolded.

This is all I have to share on the event so far:

Please join us on Monday, December 7th for an inside look at the evolution of Google search. We’ll discuss how search has transformed over the years, and will introduce a few new features that we hope will change how people search in the future. Speakers include Marissa Mayer, VP of Search Products and User Experience; Vic Gundotra, VP of Engineering; and Amit Singhal, Google Fellow. It’s an event you won’t want to miss.

And now, an agenda:

- Introduction: Marissa Mayer, VP, Search Products & User Experience

- Mobile Search: Vic Gundotra, VP, Engineering

- Search: Amit Singhal, Google Fellow

- Closing: Marissa Mayer

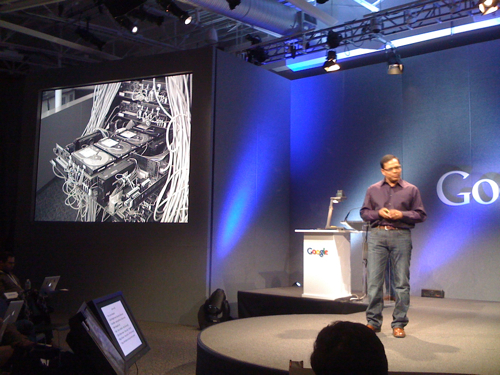

And we start. Marissa (above) is welcoming us as promised. Focus is on the future of search and innovation. Google thinks of four main ways forward:

- Modes

- Media

- Language

- Personalization

Modes: the modalities of how people search. Today, they type keywords into desktop computers, but there will be many more. What about mobile phones? What if you talk to your computer, or give it a picture? Search will grow in many ways.

Media: what appears in the results. Today we have movies, maps and more. Google is constantly looking at how to make its results more comprehensive, providing answers and more than 10 blue links (trademark: former Ask.com CEO Jim Lanzone).

Language: Google can already translate between a lot of language pairs, and it sees a future where you search in any language and get answers in any language.

Personalization: Because search changes so quickly, it’s hard to pinpoint the future, but results will become more personalized, richer and more relevant to users.

Oh, and there’s a fifth component to the future: progress. There will be a lot of progress. Google likes to innovate and iterate often. On the Google Blog, it launched This Week In Search to itemize everything it does each week. Since then, there have been 33 innovations, which is 2 per week, she says.

Next up, Vic, above. What innovation do you think changed mankind? The printing press? The steam engine? Electricity? At their outset, the full impact of those wasn’t understood. Gutenberg went broke, and the papers from his press came years later. Google argues the same thing might be happening in the personal computer space.

In the third decade of the PC revolution, the possibilities might only now really be seen. Google sees three trends:

First trend: Moore’s Law of computing, with processing power continually increasing.

Second trend: Connectivity, the idea that everything will be connected.

Third trend: Powerful clouds (gosh, I thought that was connectivity, but OK).

When you combine these three things, you get a Reese’s peanut butter cup. No, you get something interesting. And here’s a Droid phone in his hand. Camera, GPS, accelerometer. By themselves, the sensors aren’t extraordinary. But add the cloud and connectivity, and the microphone becomes an ear and the camera becomes an eye.

Search by voice (you all remember Microsoft rolled this out in like 2005? Yes? Yes?). Anyway, Vic says Google rolled it out last year, and it has gotten really good since then.

He says “pictures of Barack Obama at something with the French president.” Really long query. It brings back some images. They apparently match, based on the applause (applause?) that broke out from what I’m guessing is a Googler cheering squad mixed among the press and bloggers. I can’t see well myself. Glasses. Forgot. Damn. Old. Getting.

New demo: the Google Mobile app, showing the Mandarin version. He’s going to say the only Mandarin query he’s able to do: McDonald’s in Beijing. Now we get all the McDonald’s in Beijing. And more applause. Japanese is supported as of today. I wonder if they’ve fixed it, though, so that if you’re British and speaking English, the damn thing actually works.

A Japanese query. It works. More applause, which is getting really disturbing. Did I wander into a Google search developers conference by mistake?

Imagine you spoke in one language, got a translation back, and the device would speak for you. You know, in Star Trek, we call this a Universal Translator. He says, “Hi, my name is Vic, can you show me where the nearest hospital is?” Now it’s speaking in Spanish. I guess it was right. There was applause. I wonder if they’ll have a device that translates Google into English. Like you ask them a question, and they give you a straight answer rather than “We’re always thinking and experimenting but don’t reveal that data.”

Research in Japan found that people kept their phones less than a meter away, 24 hours a day. Then Vic realized he does the same. Guys, though, don’t be like the dude who was peeing and texting at the urinal next to me. I know. Gross. But we need limits.

Anyway, with My Location in Google Maps, they save about 9 seconds per user because they know your location and render it. But they want to do more.

How many use Google Suggest? Data shows over 40% of queries result from someone selecting a Google Suggest item. How could that be improved with location? Let’s get two separate iPhones out and find out.

One phone is hardcoded to believe it’s in Boston. Typing “RE” suggests Red Sox. In San Francisco, “RE” suggests REI. At the Daily Telegraph, “RE” suggests we are Google and are editing Google Suggest to make you paranoid.

Now doing a product search. The top two results for a Canon camera have blue dots saying they’re in stock at nearby merchants. Isn’t that great, he asks? Applause.

OK, there’s a Near Me Now feature that shows things that are near you. Can this be ranked better? Sure: you can expand an item and get star ratings that rank things even more.

Also new today is a version of Google Mobile Maps for Android. Go to what you want to look at, “long press,” select What’s Nearby, and that feature is available (don’t have it? Try getting it by going to the Google home page).

How about search by sight? How about Google Goggles, which is out in Google Labs now (you’ll find it here)? OK, a story about a wine someone brought him. While they went to pee, he took a picture of the bottle (as he is doing now). It came back with info about the wine, which he used to impress the person with his knowledge when they came back.

Hmm, what if you see a landmark? He shows a landmark in Japan. He takes a picture of it, and now we get info about the shrine from Google, all from the image scan. And applause, of course.

So in the future, you’ll point your finger at an object and get info.

To wrap up, we’re at the beginning of the beginning. See what happens if you take a sensor rich device and connect it to the cloud (or really, connect it to Google).

OK, Marissa is back. Search engines that understand. Even search engines with eyes. How about media? She’s talking about Universal Search, how Google blends results, and the relevance challenge that poses. And if it’s snowing in Tahoe, there are user reports that might be more accurate than official ones. How do you pull that in?

Amit up (above), going to make a big announcement, one of the most exciting in his career in search (and Amit’s been doing this for ages, so if he’s excited, I’m excited).

How did information evolve? It took time to pass from person to person. Then we had printing, which disseminated info far more widely. And until about 30 years ago, print remained the primary form of information transfer.

Then came the internet. Google used to crawl the web and update its index each month, in what was known as the Google Dance. That wasn’t fast enough, even when updates sped up to every few minutes.

In today’s world, information is coming in all the time, on web pages and in tweets. Information is being created at a pace he’s never seen before, and in this information environment, seconds matter.

Imagine a library with billions of books. Then 100 million new books come in. The librarian finally figures out how to deal with that, and just when that’s mastered, the librarian realizes there are people running around the library constantly adding notes, adding books, marking things up. That’s the information environment today. Now imagine the librarian tracks all this stuff without breaking a sweat. As of today, that’s what Google can do.

We are here today to announce Google Real Time Search.

Here’s Dylan Casey (sp?), who’s going to show what people are looking at. Wow. The latest results for Obama just flowed into the Google search page. Applause, and sure, I’m impressed.

It’s the first time ever that any search engine has integrated real time results into the results page.

Now we’re seeing a dedicated page with results flowing (above, sorry, bad pic). Which I’m pretty sure you could get at Collecta and a few other places, though we’ll see how the quality compares in time.

Getting frustrated I don’t have a URL to share with you all yet. Hang in there.

It covers the whole real time web, we’re told, with tweets and blog posts. Not all of that is really real time, but my What Is Real Time Search? Definitions & Players explains that in more depth.

OK, open the search options in Google, then click Latest to see real time results. At least, that’s what’s supposed to be happening. Right now, I don’t see it.

Also in options panel, there’s a new Updates link that you can use to see real time results.

More demos, this time a search for Google Goggles and how you get tweets in the middle of the Google search results. Or what mostly looks like tweets.

And now a look at how this works on mobile. More applause, and not just from the cheering section. Works for iPhone, Android and anywhere you are, just type in a query on Google and you’ll get the real time web in your palm right away.

Also, Google Trends is now out of beta, and there’s a new Hot Topics area that shows real time query trends. And you can search for real time info from it, also.

How do they do this? Google says it had to develop technologies to take into account query fluctuation, language modeling, quality of author, relevance probability, semantics, real time URL resolution and more.

As so much more information comes in, speed, comprehensiveness and more are important, but not as important as relevancy overall. Relevancy has become the critical factor in building products.

A recap of what was talked about: real time search, a new Latest option in Google search options, a new Updates option in Google search options, new mobile real time search and a new Hot Topics area in Google Trends.

Marissa is back, with two partner announcements. Facebook will be providing Google with a feed from Facebook pages, which will show in real time search. MySpace will provide updates, too. She also thanks Twitter for its previous deal and calls out Biz Stone, who she says is at the event somewhere.

Questions.

How about face recognition? Vic says faces are objects that can be recognized, but Google decided not to do it. It wants to work through issues of user privacy and decided to delay until there are more safeguards.

Ads & real time search? Google is focused on the user side right now, says Amit. People are experimenting with multiple models. Companies like Twitter and others have added tremendous value to the world.

How many real time sources are there? How often are they crawled? How much is pulled in? Amit says Google is crawling a lot of content, a billion pages per day (I think he said). There are many sources out on the web, and Google is crawling all of them. The key is comprehensiveness of info and consolidation with Google’s existing results. It’s taking Twitter now and soon Facebook and MySpace (you know, until Murdoch blocks those, heh).

How do you avoid spam in real time results? Amit says he knows something about spam. “We have the best systems in place to prevent spamming of the system.” He calls out Matt Cutts for leading the team. Google can fight spam almost before it happens.

Can we selectively add in real time sources, like just your own Facebook friends? Amit says he’s excited about what’s happening and not much more.

How will stuff flow out with respect to, say, privacy at Facebook? Apparently if you flag something private, it won’t flow out.

Do you need these partnerships to be comprehensive? Marissa says they really need the world’s information.

Are they paying for this stuff? They won’t confirm or deny. Which still leaves me wondering. Is Murdoch’s MySpace handing over key data to Google for free but he thinks news is something he should be paid for? Or is he getting paid for MySpace data (and if so, why wouldn’t Google pay for news data?).

Will support be reduced for non-Android devices? Vic says absolutely not.

Will real time search be the death of journalism? If knowledge is power, is Google the most powerful company in the world? Amit says journalism will continue to have its role. Knowledge is indeed power, and people in this room are keeping people informed with knowledge. So he doesn’t see how real time and death of journalism can be combined. As for second point, goal of Google has always been to bring information to people.

I missed some questions, sorry; I was trying to actually play with the real time search service. There was a general question about how you find what’s really true. Hard problem.

A question on what this means for PageRank. PageRank is one of many factors Google already uses for regular results, and real time search uses a variety of factors as well. Marissa adds that PageRank is about finding authority, and you can apply some learnings from it to build something like an UpdatesRank or RetweetsRank for measuring real time data.

Can you disable the entire thing, not just pause it? Amit says they’ve experimented tons with this (really? Because it’s never been spotted in the wild).

That’s the conference over.

Contributing authors are invited to create content for Search Engine Land and are chosen for their expertise and contribution to the search community. Our contributors work under the oversight of the editorial staff and contributions are checked for quality and relevance to our readers. Search Engine Land is owned by Semrush. Contributor was not asked to make any direct or indirect mentions of Semrush. The opinions they express are their own.