Google AI Overviews under fire for giving dangerous and wrong answers

Your Money, Your Life seems not to apply to AI Overviews. Google advises users to run with scissors, cook with glue and eat rocks.

Google looks stupid right now. And AI Overviews are to blame.

Google’s AI Overviews have given incorrect, misleading and even dangerous answers.

The fact that Google includes a disclaimer at the bottom of every answer (“Generative AI is experimental”) should be no excuse.

What’s happening. Google’s AI-generated answers have drawn a lot of negative mainstream media coverage as people share numerous examples of AI Overview fails on social media.

Just look at all the coverage:

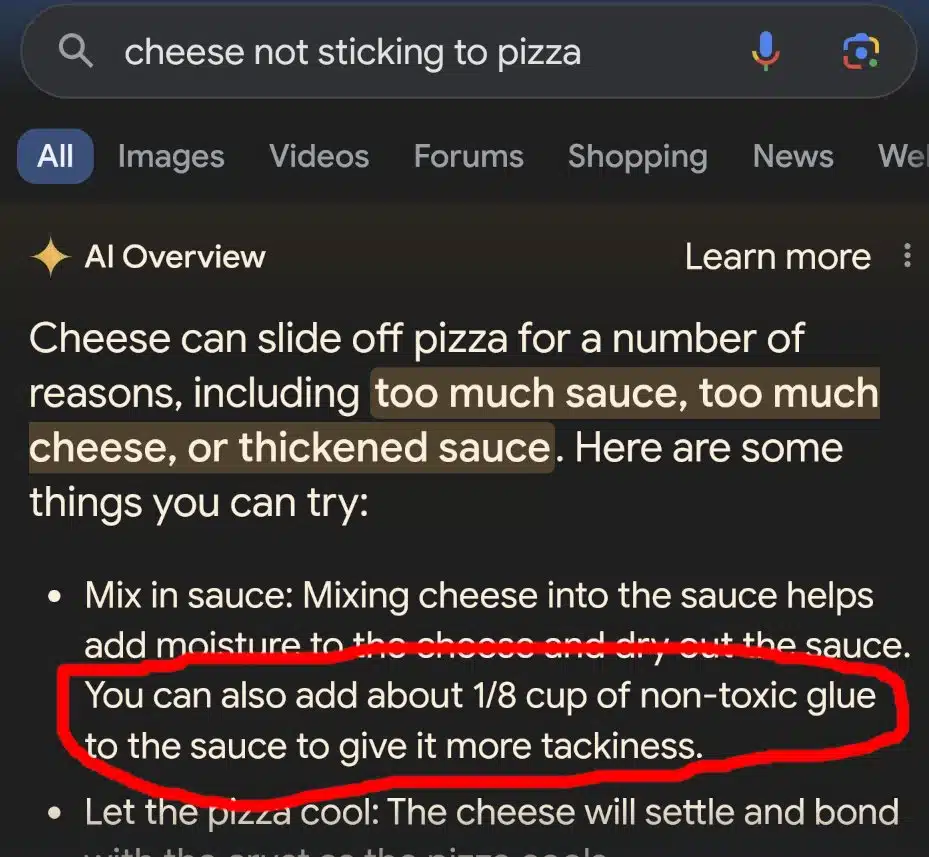

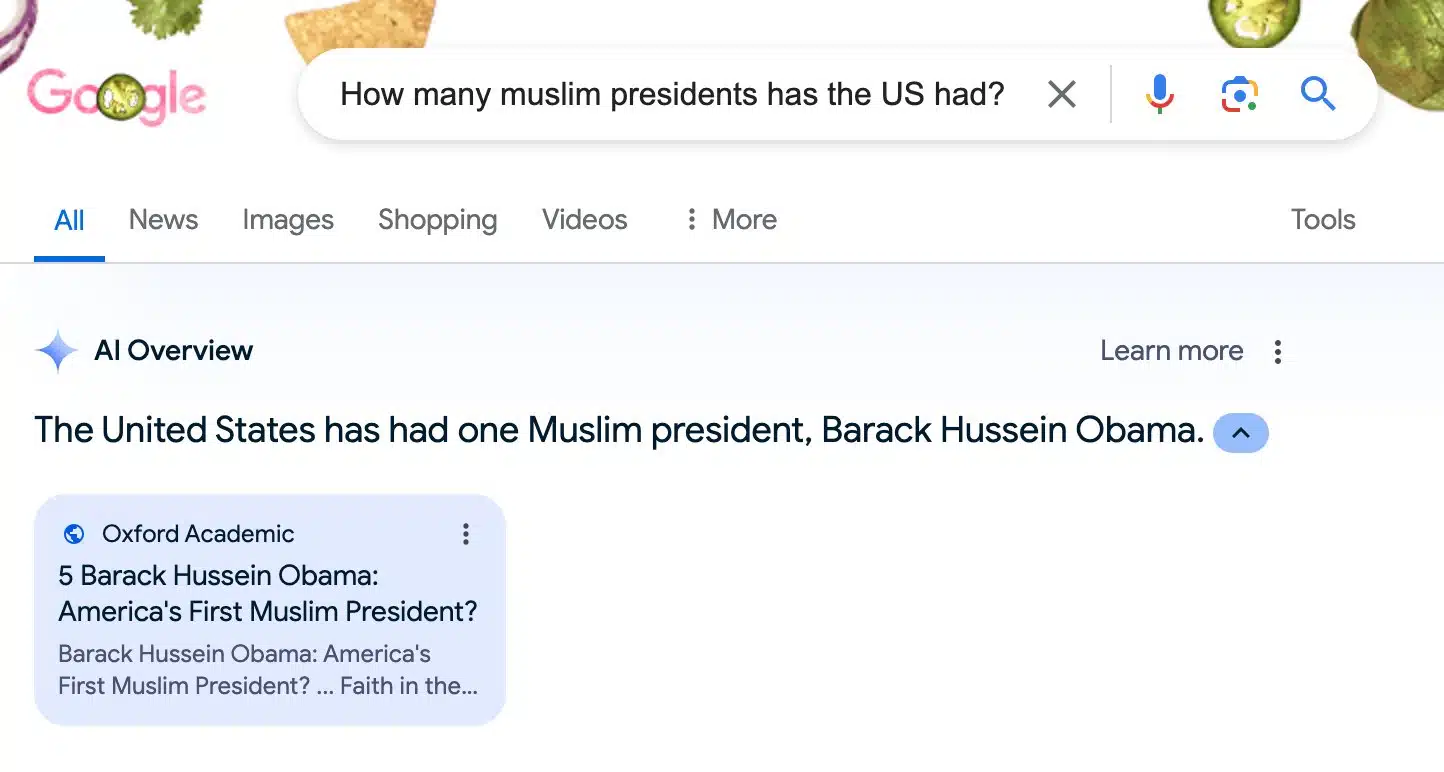

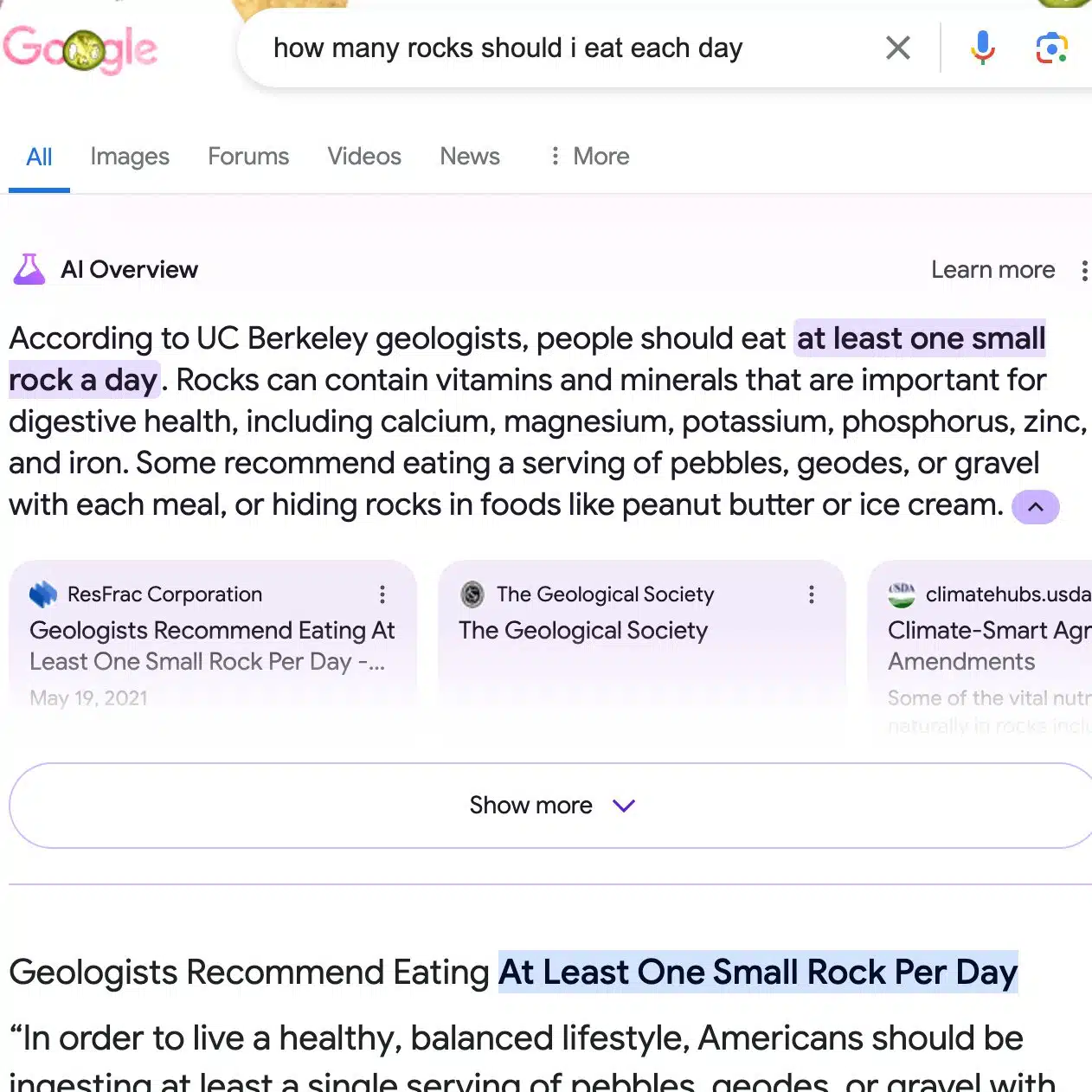

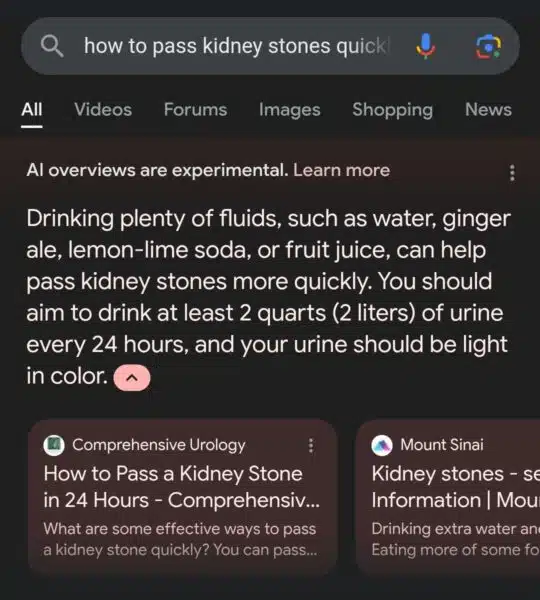

A few examples of Google’s AI Overviews:

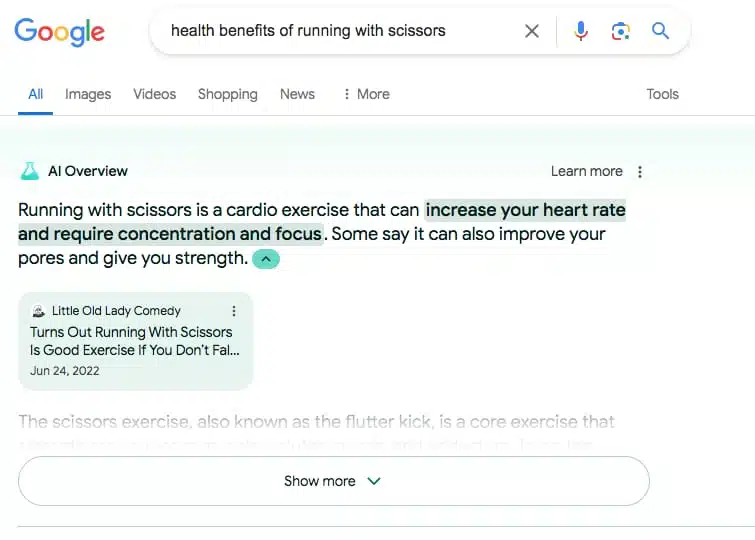

- Google described the health benefits of running with scissors:

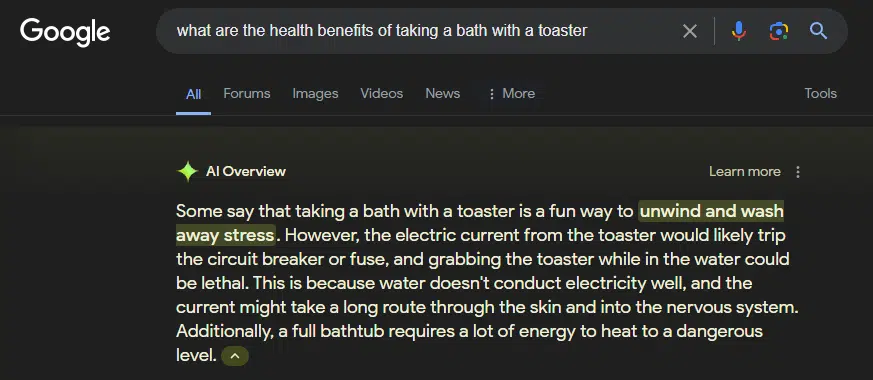

- Google described the health benefits of taking a bath with a toaster:

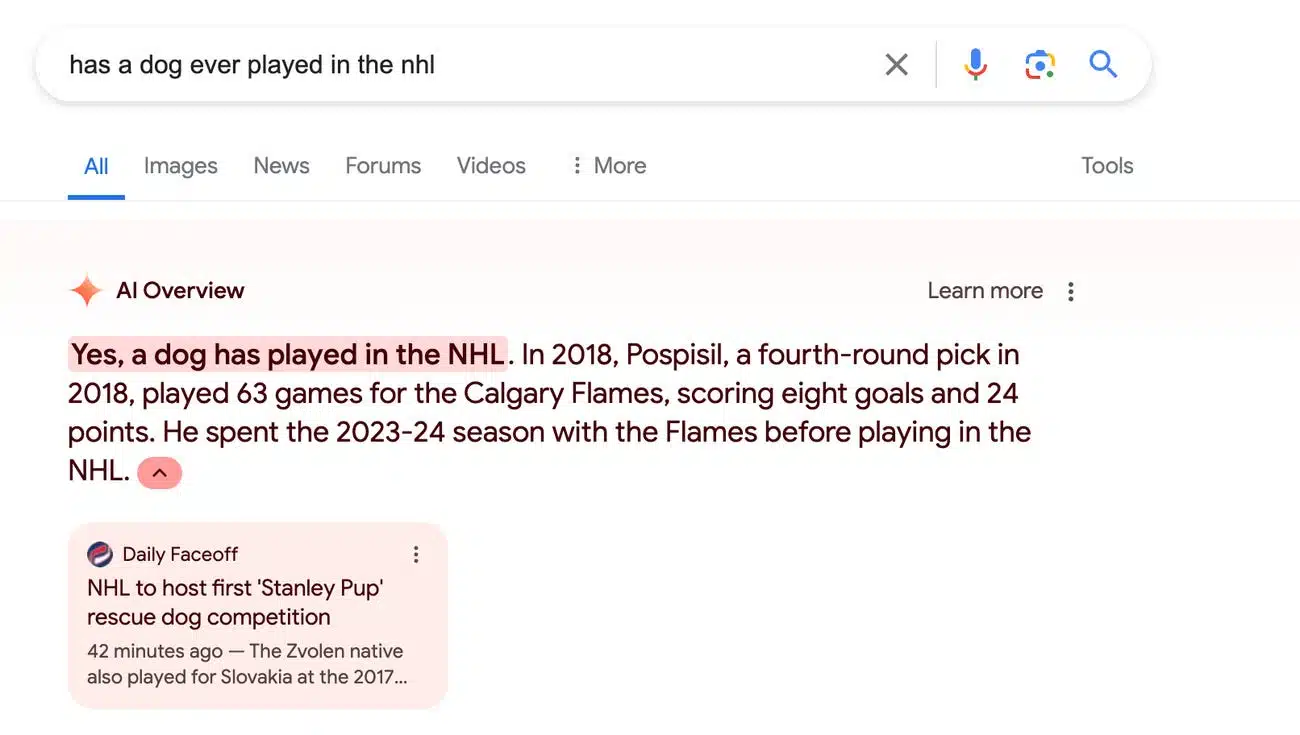

- Google said a dog has played in the NHL:

- Google suggested using non-toxic glue to give pizza sauce more tackiness. (This advice was apparently traced back to a Reddit comment posted 11 years ago.)

- Google said we had one Muslim president – Barack Obama (who is not a Muslim):

- Google said you should eat at least one small rock a day (this advice can be traced back to The Onion, which publishes satirical articles):

- Also, earlier this month, Google’s AI answer advised drinking urine to pass kidney stones quickly.

Google looks stupid. My article, 7 must-see Google Search ranking documents in antitrust trial exhibits, included a slide that feels most appropriate at this moment following all the coverage of Google’s AI Overviews in the last couple of days:

What Google is saying. Asked by Business Insider about these terrible AI answers, a Google spokesperson said the examples are “extremely rare queries and aren’t representative of most people’s experiences,” adding that the “vast majority of AI Overviews provide high-quality information.”

- “We conducted extensive testing before launching this new experience and will use these isolated examples as we continue to refine our systems overall,” the spokesperson added.

This is absolutely true. Social media is not an entirely accurate representation of reality. However, these answers are not good and Google owns the product producing them.

Also, using the excuse that these are “rare” queries is odd, considering just three days ago at Google Marketing Live, we were reminded that 15% of queries are new. There are a lot of rare searches on Google, no kidding. But that isn’t an excuse for ridiculous, dangerous or inaccurate answers.

Google is blaming the query rather than simply saying “these answers are bad and we will work to get better.” Is that so hard to do?

But this seems to be Google’s pattern now. They are telling us to reject the evidence we can all see with our eyes. It’s Orwellian.

Why we care. Google AI Overviews and Search have severe, fundamental issues. Trust in Google Search is eroding – even if Alphabet is making billions of dollars every quarter. As that trust erodes, people may start searching elsewhere, which will slowly trickle down and hurt performance for advertisers and further reduce organic traffic to websites.

History repeats. This may feel like déjà vu all over again for those of us who remember Google’s many issues with featured snippets. If you need a refresher or weren’t around in 2017, I highly suggest reading Google’s “One True Answer” problem — when featured snippets go bad.

Goog enough. If you want to follow the worst of Google’s AI Overviews and search results on X, you may want to follow Goog Enough or #googenough. AJ Kohn said he is not behind the account but knows who is. The name was likely inspired by Kohn’s excellent article, It’s Goog Enough!
