Advanced Link Auditing & Best Practices For Acquiring Authoritative Links

Contributor Chris Silver Smith shares highlights and takeaways from link building experts at SMX Advanced.

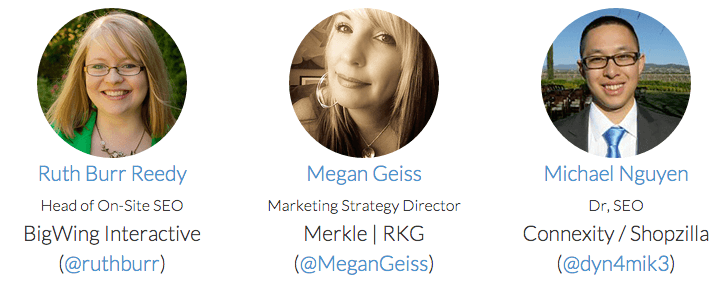

I recently attended the “Advanced Link Auditing & Best Practices For Acquiring Authoritative Links” session at SMX Advanced, moderated by Elisabeth Osmeloski and with presentations provided by Ruth Burr Reedy, Megan Geiss, and Michael Nguyen.

There’s probably no more controversial topic in search engine optimization (SEO) circles than link development. Google’s famous original innovation, PageRank, made obtaining external links a huge necessity for webmasters in order to attain advantageous rankings in search results.

Since then, the major search engines have created and progressively refined methods for identifying artificial link development. As a result, certain types of links have lost value as ranking signals, and search engines have even begun to penalize sites caught trying to manipulate their rankings through links.

Link Building: Then And Now

If you’ve attended SMX since the very beginning, the contrast in how we discuss link building now versus then is quite striking. The increasingly aggressive policing of artificial links has pushed the entire industry from the “wild, wild West” of technical tricks, exploits, and holes in spam policing, to a focus on performing solid content development and more classic-style marketing activities that may indirectly result in naturally obtained links.

Megan Geiss was up first, and she presented tips based on experience with a major financial company that had some domains “in Google jail” — penalized, apparently due to bad links. She walked through some fairly standard steps an SEO analyst needs to perform in evaluating a case of likely penalization (some of which have been fairly standard steps since Google introduced the Penguin Update back in 2012).

For manual penalties, Google will inform you of the penalty via Webmaster Tools. For algorithmic penalties, it’s necessary to review your site’s backlink profile to determine which links may have been considered spammy or unnatural.

Megan described how her agency performs this type of link risk assessment. They examine quality metrics, evaluate the referring domains, check to see how natural the anchor text is, review the geolocation of IPs, and look for sitewide links. She states that the referring domain is the factor they pay most attention to — they look to identify client-owned domains and perform a C-block analysis to see what relationships there may be between the linking domains.
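To make the C-block idea concrete, here is a minimal sketch of how linking domains could be grouped by the first three octets of their IP address. It is not Megan’s agency’s tooling: the function names, the sample domains, and the IP addresses (taken from reserved documentation ranges) are all hypothetical, and it assumes you already have an IPv4 address for each linking domain.

```python
from collections import defaultdict


def c_block(ip: str) -> str:
    """Return the first three octets of an IPv4 address (its "C-block")."""
    octets = ip.split(".")
    if len(octets) != 4:
        raise ValueError(f"not an IPv4 address: {ip!r}")
    return ".".join(octets[:3])


def shared_c_blocks(domain_ips, min_domains=2):
    """Group linking domains by C-block; keep blocks shared by several domains."""
    blocks = defaultdict(list)
    for domain, ip in domain_ips.items():
        blocks[c_block(ip)].append(domain)
    return {
        block: sorted(domains)
        for block, domains in blocks.items()
        if len(domains) >= min_domains
    }


# Hypothetical sample data for illustration only.
sample = {
    "blog-one.example": "203.0.113.10",
    "blog-two.example": "203.0.113.77",
    "news.example": "198.51.100.5",
}
clusters = shared_c_blocks(sample)
print(clusters)
```

Domains that share a C-block are not necessarily related, so a cluster like this is only a prompt for manual review.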

For performing link risk assessment, they depend heavily upon Majestic’s link data. They analyze the total links vs. the number of linking domains, and they look at the data using a pivot table. (Something like a large number of linking IP addresses based out of Malaysia can be a red flag for a website based in the United States.) They also focus very heavily on highlighting low-scoring links using Citation Flow and Trust Flow (they focus on scores of 30 or less).
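The kind of summary described here can be sketched in a few lines of plain Python. The rows and field names below are hypothetical and do not reflect Majestic’s actual export format, and treating a domain as low-scoring when either metric is 30 or less is my assumption, since the session did not spell out how the two scores were combined.

```python
from collections import Counter

# Hypothetical backlink rows; field names are illustrative only.
backlinks = [
    {"domain": "a.example", "country": "US", "citation_flow": 45, "trust_flow": 40},
    {"domain": "a.example", "country": "US", "citation_flow": 45, "trust_flow": 40},
    {"domain": "b.example", "country": "MY", "citation_flow": 12, "trust_flow": 8},
    {"domain": "c.example", "country": "MY", "citation_flow": 28, "trust_flow": 35},
]

THRESHOLD = 30  # "scores of 30 or less", per the session


def summarize(rows, threshold=THRESHOLD):
    """Compare total links with linking domains and flag low-scoring domains."""
    domains = {row["domain"] for row in rows}
    # Assumption: a domain is flagged if EITHER score is at or below the threshold.
    low = sorted(
        {
            row["domain"]
            for row in rows
            if row["citation_flow"] <= threshold or row["trust_flow"] <= threshold
        }
    )
    return {
        "total_links": len(rows),
        "linking_domains": len(domains),
        "links_per_domain": len(rows) / len(domains) if domains else 0.0,
        # A simple stand-in for the pivot table: link counts per country.
        "links_by_country": dict(Counter(row["country"] for row in rows)),
        "low_score_domains": low,
    }


report = summarize(backlinks)
print(report)
```

A high links-per-domain figure can hint at sitewide links, and the per-country counts make geographic outliers easier to spot.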

They also use Moz Open Site Explorer to look at page-level link data since it’s possible that a penalty is not necessarily sitewide (something I’ve seen with my clients as well). In addition, they use links reported by Google Webmaster Tools.

Megan’s 7-Step Process For Getting Out of Google Jail

- Gather all backlinks. Get a list of all your inbound links (from Google Webmaster Tools, Majestic, etc.) to create a comprehensive inventory.

- Rank links by quality. Consolidate and de-dupe your list, filter by Citation Flow, look for IP patterns or trends within C-blocks, etc., to assess good links vs. bad.

- Analyze. Analyze and evaluate the filtered master list; determine if a backlink or domain is outside of Google’s quality guidelines or falls within an identified link scheme; manually review domains.

- Outreach for removal. Gather contact info of the linking websites and reach out to them to request link removal (minimum of two outreach attempts). Document this for Google.

- Follow up. Typical responses to a link removal request: the link is removed and you are notified, the link is removed and you are not notified, the webmaster requests payment to remove the link, or you receive no response whatsoever.

- Use the disavow tool. Create the disavow file and upload it to Google Webmaster Tools. Avoid common mistakes, such as disavowing “just enough” to get by, or disavowing everything, or uploading a new list that overwrites an old disavow file.

- Submit a reconsideration request. Admit the mistake, and show them your documented efforts to clean up your backlink profile. Follow up with the creation of new, quality backlinks.

For building these new, quality backlinks, she suggests doing new social media work, promotion campaigns, social mentions — gaining Likes, Shares, Tweets, etc.
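For the disavow step in the process above, the file itself is plain text: one entry per line, with whole domains prefixed by `domain:` and lines starting with `#` treated as comments. The sketch below shows one way to avoid the overwrite mistake by merging previously disavowed entries into the new file. The function name, its parameters, and the sample domains are hypothetical and were not part of the presentation.

```python
def build_disavow(domains, urls=(), previous_entries=(), note=""):
    """Return disavow-file text that merges old entries with new ones."""
    entries = set(previous_entries)  # carry over what was already disavowed
    entries.update(f"domain:{d.strip().lower()}" for d in domains)
    entries.update(u.strip() for u in urls)
    lines = [f"# {note}"] if note else []
    # Domain entries first, then individual URLs, each group sorted.
    lines += sorted(entries, key=lambda e: (not e.startswith("domain:"), e))
    return "\n".join(lines) + "\n"


# Hypothetical example: one entry from an older file plus newly reviewed links.
old_entries = ["domain:spam-network.example"]
text = build_disavow(
    domains=["Link-Farm.example", "spam-network.example"],
    urls=["http://forum.example/profile?id=123"],
    previous_entries=old_entries,
    note="Owners contacted twice; no response",
)
print(text)
```

Because the set removes duplicates, re-running this with an older file’s entries keeps the earlier work while adding the new domains.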

https://www.slideshare.net/slideshow/embed_code/key/HRec9yVquKW9Yu

“How To Build The Links You Actually Want”

Ruth Burr Reedy spoke next on the topic. She argued that the best links are the ones that drive traffic, not links for links’ sake. Not only does Google like real links that drive actual human traffic, but these links also bring you business in addition to ranking value.

Ruth suggests building a content calendar for link outreach. She endorsed a formula from Ronell Smith’s article, “How to Create Boring-Industry Content that Gets Shared” — one month, one theme, four pieces of blog content. These pieces of content should include one strong piece of local content, two pieces of evergreen content, and one link-worthy asset.

Ruth also suggests identifying people interested in content you create as a means of developing a sort of relationship with them, increasing the chances that they might promote your work. To do this, you must first identify people that may have affinities for your topic areas.

You can use tools like Followerwonk to find people with affinities useful to you — use it to search Twitter profiles for those keywords found in bios only, and locate those who associate with keywords that align with your affinity topics. You can then use TagCrowd to create a word cloud (ignore “twitter,” “followers”). Identify the keywords that resonate the most with the crowd of people who like your concept.

When it comes to evaluating link prospects, she uses Majestic to assess which Twitter users have websites/blogs that would be most beneficial to obtain links from. She’ll use this once a month or once a quarter to determine the best targets based on Trust Flow, Citation Flow, external backlinks, referring domains, etc.

Once you have a list of Twitter users who align with your affinity topics and have valuable sites for links, Ruth then suggests a few outreach strategies that work: try not to be weird; be natural; establish a relationship (good for long-term prospects). Share stuff the influencers in your list share. Only spend minor amounts of time talking about yourself. The formula she follows for Twitter outreach in just fifteen minutes a day is approximately 1/3 replying, 1/3 sharing, and 1/3 talking.

Then, using your content calendar, start establishing relationships with influencers first, about two months out. When you later introduce content that matches their interests, they may be more open to sharing and linking to it.

“How to Manage Link Risk In-House”

The above was the title of Michael Nguyen’s presentation. He described a situation he and his team at Shopzilla / Connexity had dealt with wherein their site had received a penalty that heavily impacted traffic. He explained what they did to diagnose and correct it, then discussed their process to reduce and avoid the risk of link penalties in the future. (Similar to Megan’s story, they first identified what they thought were bad links, and then worked to get them removed.)

I found some of the aspects that Michael covered particularly interesting from the viewpoint of large corporation/brand website marketing. Some of the bad links they identified were apparently coming from their own affiliates. This isn’t a surprise, nor does it particularly indicate low quality or spammy techniques on the part of the affiliates. Google has long taken a more skeptical view towards affiliate sites that may have thinner content — and affiliate links may be considered a form of paid link (which Google frowns upon) if not clearly labeled.

The takeaway for larger e-commerce websites is that bad links may very well include your own links and those of your partners. To address this, they updated their terms of service for affiliates, presumably dictating how the links are created and requiring them to be clearly labeled as affiliate links (we can assume they required the “rel=nofollow” attribute to be added to all affiliate links as well).
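If affiliate terms do require nofollow, compliance can be spot-checked automatically. The sketch below is purely illustrative and is not something Michael described: it parses a page’s HTML with Python’s standard library and lists links to a given host whose `rel` attribute lacks `nofollow`. The class name, the host `shop.example`, and the sample markup are hypothetical.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class NofollowAudit(HTMLParser):
    """Collect links to target_host whose rel attribute lacks 'nofollow'."""

    def __init__(self, target_host):
        super().__init__()
        self.target_host = target_host
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attributes = dict(attrs)
        href = attributes.get("href") or ""
        rel_tokens = (attributes.get("rel") or "").lower().split()
        points_at_target = urlparse(href).hostname == self.target_host
        if points_at_target and "nofollow" not in rel_tokens:
            self.missing.append(href)


# Hypothetical affiliate page markup.
page = """
<a href="https://shop.example/item?aff=1" rel="nofollow">Deal</a>
<a href="https://shop.example/item?aff=2">Another deal</a>
<a href="https://other.example/">Unrelated</a>
"""
audit = NofollowAudit("shop.example")
audit.feed(page)
print(audit.missing)
```

Any URLs left in `audit.missing` would be candidates for a follow-up with the affiliate.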

In some cases, they also leveraged their legal department to send DMCA takedown notices to spammers. (I’d assume, in this case, that these spammers included content scrapers.) This is a great strategy, in my opinion, because sites and ISPs in the U.S. may be legally compelled to remove content based on copyright infringement.

They also used the straightforward approach of contacting webmasters and requesting removals, even following up with phone calls in some cases.

As many of us have encountered in cleaning up a site’s bad links, some webmasters demand payment to remove links. Just for the sake of speed, they opted to pay some of these, but he also recommended disclosing to Google which sites demanded payment.

The downside of engaging the legal department to handle some takedowns was that at least one of their innocent partners or bloggers got caught up in the list of sites that received removal demands. The webmaster in that case was outraged, but they made it right with them by sending them Shopzilla wine and candy!

This actually resulted in more positive postings from the grateful webmaster, gaining them yet another beneficial link!

After going through the whole effort to get their site out of the penalty box, Michael stated that he “NEVER WANTED TO DO THAT AGAIN!” This is a sentiment that any of us who’ve dealt with these issues can relate to!

Very interestingly, Michael described a new process they introduced afterwards for evaluating the quality of a linking site prior to exposing their main site to the link and any risks that might accompany it. He described how they used a sort of siloed domain that new links would point to, and after a period of time, they’d assess whether the linking site was penalized or low quality. If it was not, they’d then redirect the link to the main site, where it could then pass PageRank.

https://www.slideshare.net/slideshow/embed_code/key/hqn7yXLxeex8hQ

Overall, the session reflected what are now the best practices for evaluating the quality of a site’s external links in order to eliminate the bad links that cause penalties. The session also described a few best practice methods for obtaining new links: engage with influencers, build quality content that can attract links, and then engage in social media to promote that content.

While the “meat” of the session revolved around already-established practices, I appreciated some of the nuances and creativity in the ways the contributors responded to and resolved their issues. With few exceptions, advanced link building practices are now primarily confined to cleverness in content development and social outreach promotion work.

Contributing authors are invited to create content for Search Engine Land and are chosen for their expertise and contribution to the search community. Our contributors work under the oversight of the editorial staff and contributions are checked for quality and relevance to our readers. The opinions they express are their own.
