Hermit Crab SEO

You've heard of Barnacle SEO, but what about Hermit Crab SEO? Columnist Bryson Meunier sheds some light on this troubling black hat practice and urges search engines to do something about it.

You may remember the hermit crab as the animal that uses the shells of other animals for its own survival. Because hermit crabs require larger and larger shells as they grow, they compete with other hermit crabs for discarded shells.

This is what I see happening in search results lately in my own niche.

Hermit Crab SEO is similar to Barnacle SEO — a term coined by Search Influence’s Will Scott to describe using the rankings of a larger object to help your site’s search visibility. But it’s different in that it is not using a larger site’s rankings, but using a defunct larger site’s authority (the shell) and creating rankings for your content (the crab) by adopting the larger site as a home — making their authoritative site relevant to what you’re trying to sell.

I’m not involved in any of these Hermit Crab SEO relationships, but there are likely variations on how it occurs — the smaller site may buy the one with more authority and move in, or the larger site may sell space to the smaller one, renting it a room in its shell.

Instinctively, this strikes me as somewhat unfair, as it amounts to “pay for play” and allows smaller brands to get traffic from search results without actually earning links, but rather by paying for someone else’s links to boost their own visibility.

It’s similar to what black hats do with private blog networks (PBNs), buying up once popular domains in order to link to content they want to rank. This is a clearly black hat practice that I wouldn’t recommend to marketers looking for sustainable traffic, because Google is aware of a lot of PBNs and has disabled them and spoken publicly against the practice.

What’s different here is that I reported it to Google months ago at the highest levels, and the sites in question still rank. Until they stop ranking or Google speaks out against the practice publicly, some may consider that a tacit approval of the practice and recreate it for themselves. The rankings of the example site below have only improved over the past two years, even though none of the links to the site are for the content that’s currently hosted there.

The tale of Excite.com

Remember Excite.com? Excite launched a search engine in October 1995, three years before Google. This got them a lot of attention and links at the time, so much so that even today, in 2016, they can boast a Domain Authority of 89, according to Moz. (They’re most famous for refusing to buy Google for $750,000 back in 1999.)

If you go to the site today, you’ll find a similar collection of links on the home page to what was there back in 1998, with two notable exceptions.

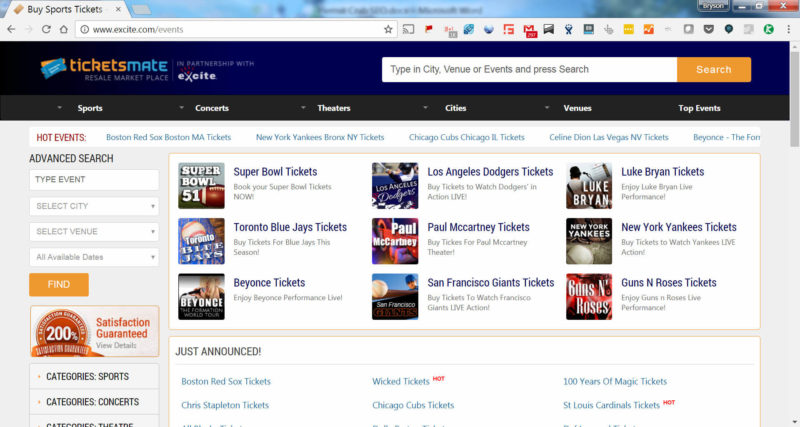

Six years ago, in March of 2010, they copied a small ticket company called TicketsMate and hosted its content on the excite.com domain. (Disclosure: my employer, VividSeats, is a competitor to TicketsMate.)

Figure 1: TicketsMate site on TicketsMate.com

Figure 2: TicketsMate.com copied on Excite.com

Clearly, there’s no duplicate content “penalty” at work here.

In May of 2010, they launched the Excite Education Portal, where they use the site’s domain authority to rank well for online education terms and drive leads to online schools.

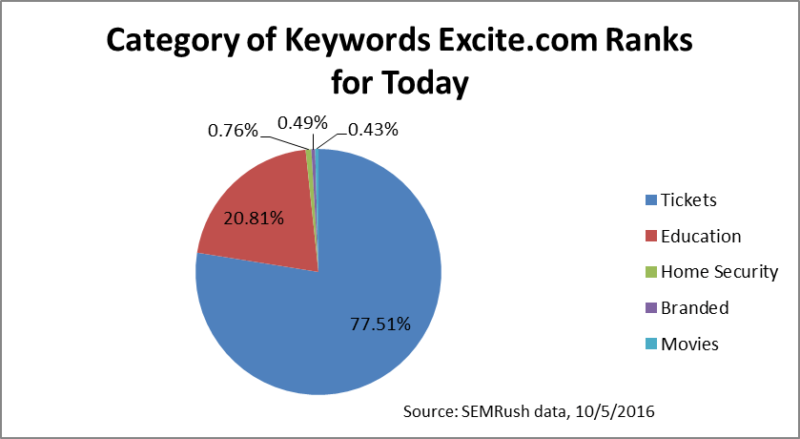

Today, 97 percent of the keywords that Excite.com ranks for organically in Google are related to either tickets (77 percent) or online education (20 percent), according to SEMRush data. Fewer than one percent of the keywords they rank for are navigational, meaning searches by people looking to go to Excite.com.

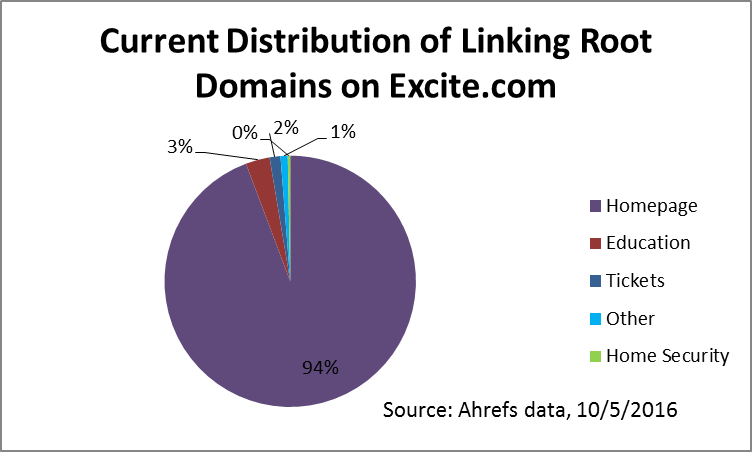

By contrast, very few of their links point to tickets or education pages, and 94 percent of their links point to their home page.

Furthermore, most of the links to their ticket and education pages fall in one of three categories:

- They were acquired in late 2014, when many sites in the ticket industry were hit by an Xrumer comment spam attack.

- They come from low-quality sites that scrape Google’s results, the kind of links that every site gets.

- They are low-quality directory links or links from low-authority sites, some of which have anchor text that doesn’t match the content. One example: the seminal 2014 article “Questioning About Soccer? Go by means of These Valuable Guidelines” links to their Super Bowl page with the anchor text “obama student loan forgiveness after 10 years.”

Very few of Excite’s inbound links come from legitimate sites that point to them as a good site to buy tickets or get education information from.

And yet they rank very well for these things. If you have ever doubted that the concept of site authority is important for SEO, this should convince you otherwise. At least in this niche (as well as online education and home security), the total number of quality links to the site, rather than to the pages, seems to determine whether the pages rank.

Is this a legitimate SEO practice that you should employ? Google didn’t respond to our request for comment at press time, but if it’s not outright spam, it does seem to fly in the face of the concept of creating valuable content. According to Google’s help section,

“The key to creating a great website is to create the best possible experience for your audience with original and high quality content. If people find your site useful and unique, they may come back again or link to your content on their own websites. This can help attract more people to your site over time.”

But why go through the difficult process of creating valuable content that organically attracts links when you can, in fact, use someone else’s site authority to rank content people don’t otherwise care about, and will not search for or link to?

After all, it’s much easier (as long as Google makes it possible) to visit the Internet graveyard and find a once-great partner with old links and high domain authority that you can make rank for competitive content that your own domain would have no hope of ranking for.

What can Google do?

If Google wanted to defuse this, it would be easy enough to do. It becomes pretty clear which sites are legitimate authorities in an industry when you measure the percentage of linking root domains that point to their home page relative to other pages on the site. When I looked at a sample of sites in the ticket industry, the top three sites had an average of 37 percent of their links pointing to their home page, and they also attracted searches that combine their brand terms with the content they want to rank for (e.g., “stubhub red sox tickets”). Google even has patents for the last part of this, but they’re clearly not using it to determine site quality here.
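As a rough illustration of the home-page measurement described above, here is a minimal Python sketch. It assumes you have a backlink export as pairs of (linking root domain, target URL); that input shape, the function names, the sample domains and the 0.9 cutoff are all hypothetical choices made for this example, not anything Google or a link tool is known to use.

```python
from urllib.parse import urlsplit


def home_page_link_share(links):
    """Fraction of distinct linking root domains that link to the home page.

    `links` is an iterable of (linking_root_domain, target_url) pairs,
    a hypothetical shape for a backlink export.
    """
    home_domains = set()
    all_domains = set()
    for domain, target_url in links:
        all_domains.add(domain)
        parts = urlsplit(target_url)
        # Treat an empty path or "/" with no query string as the home page.
        if parts.path in ("", "/") and not parts.query:
            home_domains.add(domain)
    if not all_domains:
        return 0.0
    # A domain that links to both the home page and an inner page
    # still counts as a home-page linker here.
    return len(home_domains) / len(all_domains)


def looks_like_borrowed_shell(links, threshold=0.9):
    # The threshold is an illustrative cutoff, not a figure from any search engine.
    return home_page_link_share(links) >= threshold


# Made-up sample data for a fictional site.
sample_links = [
    ("a.example", "http://old-portal.example/"),
    ("b.example", "http://old-portal.example"),
    ("c.example", "http://old-portal.example/tickets/super-bowl"),
    ("a.example", "http://old-portal.example/education"),
]
share = home_page_link_share(sample_links)
```

A site whose share sits far above that of the established players in its niche would be a candidate for a closer look. The share alone proves nothing, since plenty of legitimate brands earn most of their links to the home page.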

I’m not pretending I don’t know how difficult it is to weed out spam in an industry where paid links, or links due to paid partnerships, would exist even without search engines. I know it’s tough. I’m also not blaming Excite or TicketsMate, as they’re just making money on a strategy that clearly works for them.

But any company with machine learning, self-driving cars and the smartest engineers on the planet should be able to figure out that a site with an extremely high percentage of links to its home page probably doesn’t deserve to rank where it does. Especially if they keep telling us that creating good content that generates organic links is the best SEO.

But until they do, grab a discarded shell of a website, and walk like a crab to the bank.

Contributing authors are invited to create content for Search Engine Land and are chosen for their expertise and contribution to the search community. Our contributors work under the oversight of the editorial staff and contributions are checked for quality and relevance to our readers. The opinions they express are their own.
