Why links are still the core authority signal in Google’s algorithm

Link metrics have been the foundation of Google’s ranking algorithm since the beginning, but could anything ever surpass links as a ranking signal? Columnist Jayson DeMers speculates.

It’s almost impossible to see any meaningful search engine optimization (SEO) results without spending some time building and honing your inbound link profile.

Of the two main deciding factors for site rankings, relevance and authority, the latter depends largely on the quantity and quality of links pointing to a given page or domain.

As most people know, Google has undergone some major overhauls in the past decade, changing its SERP layout, offering advanced voice-search functionality and significantly revising its ranking processes. But even though its evaluation of link quality has changed, links have been the main basis for determining authority for most of Google’s existence.

Why is Google so dependent on link metrics for its ranking calculations, and how much longer will links be so important?

The concept of PageRank

To understand the motivation here, we have to look back at the first iteration of PageRank, the signature algorithm of Google Search, named after co-founder Larry Page. It uses the presence and quality of links pointing to a site to gauge that site’s authoritativeness.

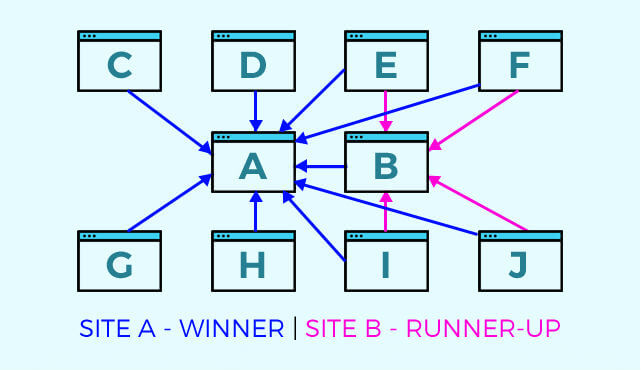

Let’s say there are 10 sites, labeled A through J. Every site links to site A, and most sites link to site B, but the other sites don’t have any links pointing to them. In this simple model, site A would be far likelier to rank for a relevant query than any other site, with site B as a runner-up.
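As a rough sketch of this simple model, the snippet below just counts inbound links for each site. The specific edges in `links` are assumptions chosen to fit the description (the text only says that every site links to A and most link to B), and counting links is a simplification of what PageRank actually does.

```python
from collections import Counter

# Hypothetical link graph: each key links out to the sites in its list.
# Every other site links to A; seven of the ten sites also link to B.
links = {
    "A": [],
    "B": ["A"],
    "C": ["A", "B"],
    "D": ["A", "B"],
    "E": ["A", "B"],
    "F": ["A", "B"],
    "G": ["A", "B"],
    "H": ["A", "B"],
    "I": ["A", "B"],
    "J": ["A"],
}

# Count how many links point at each site.
inbound = Counter(target for targets in links.values() for target in targets)

# Order sites by inbound link count, highest first.
ranking = sorted(links, key=lambda site: inbound[site], reverse=True)

for site in ranking:
    print(site, inbound[site])
```

Under these assumed edges, site A collects the most inbound links and site B the second most, while the remaining sites have none.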

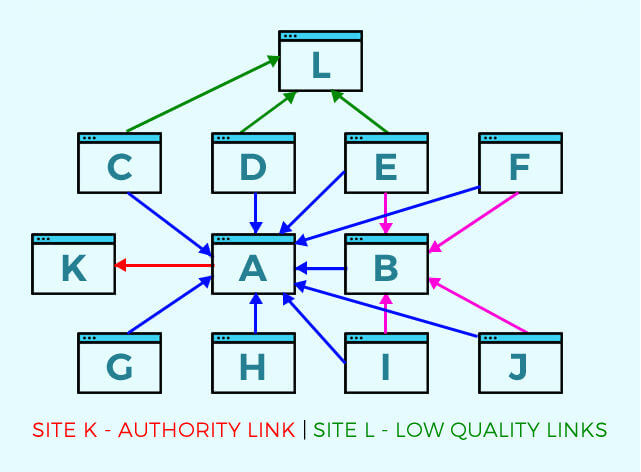

But let’s say two more sites enter the fray, sites K and L. Sites C, D and E, which don’t have much authority, link to site L, while site A, which has lots of authority, links to site K. Even though site K has fewer links, the higher-authority link matters more, and it might propel site K to a position similar to that of site A or B.
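To illustrate how a single high-authority link can outweigh several low-authority ones, here is a minimal sketch of the textbook power-iteration form of PageRank. It is not Google’s production algorithm. The edges in `links`, the function name `pagerank`, the damping factor of 0.85 and the choice to spread the score of sites with no outbound links evenly across all sites are all assumptions made for the example.

```python
# Hypothetical link graph for sites A through L (assumed edges).
# A links only to K; C, D and E link to L; K and L link to nothing.
links = {
    "A": ["K"],
    "B": ["A"],
    "C": ["A", "B", "L"],
    "D": ["A", "B", "L"],
    "E": ["A", "B", "L"],
    "F": ["A", "B"],
    "G": ["A", "B"],
    "H": ["A", "B"],
    "I": ["A", "B"],
    "J": ["A"],
    "K": [],
    "L": [],
}


def pagerank(links, damping=0.85, iterations=100):
    """Simplified PageRank via power iteration over a dict of outbound links."""
    sites = list(links)
    n = len(sites)
    ranks = {site: 1.0 / n for site in sites}  # start with equal scores

    for _ in range(iterations):
        # Score held by sites with no outbound links is shared among all sites.
        dangling = sum(ranks[site] for site in sites if not links[site])
        base = (1.0 - damping) / n + damping * dangling / n
        new_ranks = {site: base for site in sites}

        # Each site passes its score, split evenly, to the sites it links to.
        for source, targets in links.items():
            for target in targets:
                new_ranks[target] += damping * ranks[source] / len(targets)

        ranks = new_ranks

    return ranks


ranks = pagerank(links)
for site, score in sorted(ranks.items(), key=lambda item: item[1], reverse=True):
    print(f"{site}: {score:.4f}")
```

With these assumed edges, site K ends up scoring far above site L despite having one inbound link to L’s three, because K’s single link comes from the highest-scoring site.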

The big flaw

PageRank was designed to be a natural way to gauge authority based on what neutral third parties think of various sites; over time, in a closed system, the most authoritative and trustworthy sites would rise to the top.

The big flaw is that this isn’t a closed system; as soon as webmasters learned about PageRank, they began cooking up schemes to manipulate their own site authority, such as creating link wheels and developing software that could automatically acquire links on hundreds or thousands of unsuspecting websites at the push of a button. This undermined Google’s intentions and forced them to develop a series of checks and balances.

Increasing phases of sophistication

Over the years, Google has cracked down hard on such rank manipulators, first punishing the most egregious offenders by blacklisting or penalizing anyone participating in a known link scheme. From there, it moved on to subtler changes that refined how it evaluates link-based authority in the first place.

One of the most significant developments was Google Penguin, which overhauled the quality standards Google set for links. Using more advanced judgments, Google could now determine whether a link appeared “natural” or “manipulative,” forcing link-building tactics to shift while not really overhauling the fundamental idea behind PageRank.

Other indications of authority

Of course, links aren’t the only factor responsible for determining a domain or page’s overall authority. Google also takes the quality of on-site content into consideration, thanks in part to the sophisticated Panda update that rewards sites with “high-quality” (well-researched, articulate, valuable) content.

The functionality of your site, including its mobile-friendliness and the availability of content to different devices and browsers, can also affect your rankings. But it’s all these factors together that determine your authority, and links are still a big part of the overall mix.

Modern link building and the state of the web

Today, link building must prioritize the perception of “naturalness” and value to the users encountering those links. That’s why link building largely exists in two forms: link attraction and manual link building.

Link attraction is the process of creating and promoting valuable content in the hope that readers will naturally link to it on their own, while manual link building is the process of placing links on high-authority sources. Even though marketers are, by definition, manipulating their rankings whenever they do anything known to improve their rankings, there are still checks and balances in place that keep these tactics in line with Google’s Webmaster Guidelines.

Link attraction tactics won’t attract any links unless the content is worthy of those links, and manual link-building tactics won’t result in any links unless the content is good enough to pass a third-party editorial review.

The only sustainable, ongoing manual link-building strategy I recommend is guest blogging, the process by which marketers develop relationships with editors of external publications, pitch stories to them, and then submit those stories in the hope of having them published. Once published, these stories achieve myriad benefits for the marketer, along with (usually) a link.

Could something (such as social signals) replace links?

Link significance and PageRank have been the foundation for Google’s evaluation of authority for most of Google’s existence, so the big question is: could anything ever replace these evaluation metrics?

More user-centric factors, such as traffic numbers or engagement rates, could be a hypothetical replacement, but user behavior is too variable and may be a poor indication of true authority. Relying on it would also eliminate the relative weighting that link evaluation currently provides: a link from an authoritative site counts for more than a link from an obscure one, whereas no user’s visit would count for more than another’s.

Peripheral factors like content quality and site performance could also grow in their significance to overtake links as a primary indicator. The challenge here is determining algorithmically whether content is high-quality or not without using links as a factor in that calculation.

Four years ago, Matt Cutts squelched the notion that links could be replaced, stating at SMX Advanced 2012, “I wouldn’t write the epitaph for links just yet.” Two years later, in a Google Webmaster video from February 2014, a user asked if there was a version of Google that excludes backlinks as a ranking factor. Cutts responded:

“We have run experiments like that internally, and the quality looks much, much worse. It turns out backlinks, even though there’s some noise and certainly a lot of spam, for the most part, are still a really, really big win in terms of quality of our search results. So we’ve played around with the idea of turning off backlink relevance, and at least for now, backlink relevance still really helps in terms of making sure that we return the best, most relevant, most topical set of search results.”

The safe bet is that links aren’t going anywhere anytime soon. They’re too integrated into the web and too important to Google’s current ranking algorithm to be dropped in a major overhaul. Their role may evolve over the next several years, but if so, the change will almost certainly be gradual, so keep link building as a central component of your SEO and content marketing strategy.

Contributing authors are invited to create content for Search Engine Land and are chosen for their expertise and contribution to the search community. Our contributors work under the oversight of the editorial staff and contributions are checked for quality and relevance to our readers. The opinions they express are their own.
