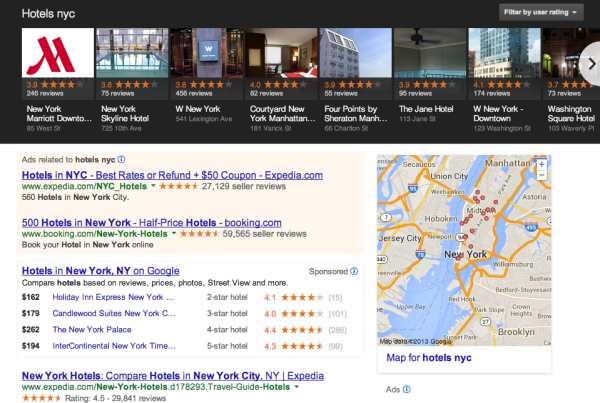

Study: Google Reviews Determine Local Carousel Rankings


Since the launch of the Google “Local Carousel” in June, SEOs and marketers have been trying to reverse engineer the ranking variables that elevate listings into that hallowed ground. Mike Blumenthal offered a good early roundup of stories and analysis.

Now Digital Marketing Works (DMW) has conducted an extensive study of what might be called “Carousel results” and concluded that the quality and quantity of Google reviews, taken together, are the single most important variable determining inclusion and ranking.

The agency examined more than 4,500 search results in the hotels category across 47 US cities, and each SERP featured a Carousel result. Here’s more on the study’s methodology, in DMW’s own words:

For the top 10 hotels of each search, we collected the hotel’s name, rating, quantity of reviews, and rank (as displayed in Carousel). We also recorded the travel time and distance from each hotel to Google’s definition of the given city . . .

Our research yielded approximately 42,000 data points, including data on approximately 1,900 distinct hotels . . .

Our study look[ed] for correlations between a hotel’s rank in a search result with each of the following: 1) Google review rating (out of five stars), 2) quantity of Google reviews, 3) travel time from the hotel to the searched city, and 4) driving distance from the hotel to the searched city. We can look for these correlations within all combinations of query type (like “best hotels in…”) and market tier. For example, we were not surprised to see a strong correlation between rank and travel time/distance: closer hotels ranked higher.
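The article doesn’t say which correlation statistic DMW used. To illustrate what “correlation with rank” means, the Python sketch below computes Spearman’s rank correlation. Spearman is our assumption, chosen because Carousel position is ordinal. It is applied to one hypothetical five-hotel result. The function names and every number are made up for illustration and are not DMW’s data. Because position 1 is the top slot, a negative value means more reviews go with better placement, and a positive value for travel time means closer hotels sit higher.

```python
# Illustrative sketch only: Spearman rank correlation between Carousel position
# and a hotel attribute. Function names and data are HYPOTHETICAL, not DMW's.

def average_ranks(values):
    """Return 1-based ranks; tied values (common with star ratings) share the average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j across a run of tied values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def pearson(x, y):
    """Plain Pearson correlation coefficient."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / (vx * vy) ** 0.5


def spearman(x, y):
    """Spearman's rho: the Pearson correlation of the rank-transformed data."""
    return pearson(average_ranks(x), average_ranks(y))


# One hypothetical Carousel result (position 1 = leftmost/top slot).
carousel_position = [1, 2, 3, 4, 5]
review_count = [850, 620, 640, 300, 120]   # made-up Google review counts
travel_minutes = [4, 6, 9, 12, 15]         # made-up travel time to city center

print(round(spearman(carousel_position, review_count), 2))    # -0.9
print(round(spearman(carousel_position, travel_minutes), 2))  # 1.0
```

A real analysis along these lines would repeat the calculation for each SERP (or pool them) and then compare coefficients across query types and market tiers, as the methodology describes.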

There were four main findings from the study:

- Review quality and volume: “Carousel rank correlates highly with Google review ratings… Our study also showed an equally strong correlation for a hotel’s quantity of Google reviews”

- Distance and travel time are ranking variables

- Google appears to be weighting results based on a more nuanced understanding of queries and inferred user intent

- The findings and ranking variables held true in both large and small markets

According to DMW, third-party reviews (e.g., Yelp) don’t appear to factor into Carousel rankings.

The agency goes into considerable additional detail about its methodology and scoring, as well as practical recommendations and takeaways from its findings. It’s worth taking a closer look.

Contributing authors are invited to create content for Search Engine Land and are chosen for their expertise and contribution to the search community. Our contributors work under the oversight of the editorial staff and contributions are checked for quality and relevance to our readers. The opinions they express are their own.
