With Fix In Place, Wolfram Alpha Explains How Siri “Recommended” The Lumia By Mistake

It wasn’t Siri that was recommending the Lumia as the best smartphone to some users last week; it was Wolfram Alpha. That won’t happen again, now that Wolfram Alpha has made changes to fix problems it had dealing with customer reviews.

Reviews Weren’t Weighted To Account For Number

When I looked into the Siri-Lumia issue last week, I speculated that Wolfram Alpha wasn’t doing any type of weighting of its results:

The bottom line is that Wolfram has ratings from Best Buy, and it’s not trying to weight those in any particular fashion such as number of reviews or number of purchases.

The Lumia rates tops on Wolfram because four people gave it 5 stars, versus 86 people who give the AT&T 16GB version of the iPhone 4S an overall rating of 4.7. The Lumia is batting 1.000 after being up to bat only 4 times. The iPhone is batting .940 after 47 at bats.

What I suspected turns out to be the case. Jean Buck, director of content development for Wolfram Alpha, emailed me a long explanation of what was happening:

You’re pretty much right on with your analysis of what was going on with Wolfram|Alpha. We do use Best Buy as our source for electronic product information. And we do match the customer rating information we get from them to the meaning and ranking of “best” products. I think this is pretty reasonable.

The problem comes in with this particular type of data. Normally, Wolfram|Alpha deals with precise numerical data; usually finding a superlative item is easy (e.g., highest mountain, most populous country, etc.). But Best Buy’s customer ratings are different in a couple of ways.

For one, it suffers from being “crowdsourced.” It’s not uniformly available for all products. And secondly, it’s not really numeric; there are only values between 1 and 5 (I believe). So our normal framework for dealing with ranked ordinal information isn’t really applicable. There’s great possibility for “ties” in values. We overlooked this in applying our ranking framework.

To summarize, Wolfram Alpha gets customer ratings only for phones that Best Buy carries (if I understand correctly), and it can’t even depend on getting a fair sample of ratings for all of these.

In A 27-Way Tie, Pick One

The bigger issue is that if several phones are all tied with the same score, Wolfram Alpha wasn’t able to express that they were tied. Someone ended up ranking lower. Continuing, her email explained:

In actuality, for the query “best smartphones” (pluralized), we do return a pure ranked list. I believe we were actually showing 27 products with a rating of 5, the iPhone 4s being one of them. But for “best smartphone,” we returned only the product that appeared at the top. Clearly, if 27 phones returned the same value, we should have listed all of them.

In other words, the Lumia wasn’t the best-rated smartphone. It was one of 27 products tied with the same score (some of these weren’t even phones). But if someone searched for “best smartphone,” Wolfram picked only one of the 27, the Lumia, to rank tops.
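
To make the failure mode concrete, here is a minimal Python sketch of how taking only the first top-rated row can crown an arbitrary winner. The product names, the `Product` type and both helper functions are hypothetical illustrations, not Wolfram Alpha’s actual code or data.

```python
from typing import NamedTuple


class Product(NamedTuple):
    name: str      # hypothetical product name
    rating: float  # average star rating, 1 to 5
    reviews: int   # number of customer reviews


def top_single(products):
    # max() returns the first maximal item it encounters, so the
    # "winner" among tied products depends only on input order.
    return max(products, key=lambda p: p.rating)


def top_all_tied(products):
    # Return every product that shares the highest rating.
    best = max(p.rating for p in products)
    return [p for p in products if p.rating == best]


catalog = [
    Product("Phone A", 5.0, 4),
    Product("Phone B", 5.0, 12),
    Product("Phone C", 4.7, 86),
]

print(top_single(catalog).name)                  # depends on row order
print([p.name for p in top_all_tied(catalog)])   # lists every tied product
```

Reversing the order of `catalog` changes what `top_single` returns, while `top_all_tied` returns the same set of products either way.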

New Weighting In Place

So what’s been changed? Buck’s email continues:

To rectify the lack of sophistication in our ordinal framework, we need to do a couple of things. The first two fixes have already taken place.

We’ve weighted the actual ratings by the number of reviews. And if we have “ties” in the data, we no longer return only the item that appears at the top of the list (which is purely an artifact of having the first item returned by the database that matches the criteria at the top of the list.) You’ll see the difference if you query for “best smartphone” now.

Weighting the results makes sense. It’s a good improvement that keeps a phone with only a few ratings from winning top honors. And if there are ties, no one phone will “win” simply because it was the first in the database.
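
Buck’s email doesn’t say which formula Wolfram Alpha uses to weight ratings by review count. One common approach is a Bayesian-style average that pulls a product’s rating toward a baseline until it has enough reviews. The sketch below assumes that approach; the function name and the `prior_mean` and `prior_weight` values are hypothetical choices for illustration only.

```python
def weighted_rating(rating, reviews, prior_mean=4.0, prior_weight=10):
    """Shrink an average rating toward prior_mean.

    prior_mean and prior_weight are assumed values, not anything
    Wolfram Alpha has published. prior_weight acts like a number of
    "phantom" reviews at the baseline rating.
    """
    return (reviews * rating + prior_weight * prior_mean) / (reviews + prior_weight)


# Figures from the article: 4 reviews at 5 stars versus 86 reviews at 4.7.
lumia = weighted_rating(5.0, 4)
iphone = weighted_rating(4.7, 86)

print(f"Lumia:  {lumia:.2f}")
print(f"iPhone: {iphone:.2f}")
print("iPhone ranks higher" if iphone > lumia else "Lumia ranks higher")
```

Under these assumed settings, four perfect reviews no longer outrank 86 reviews averaging 4.7, which is the behavior the fix is meant to produce.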

Buck said that Wolfram Alpha is now clarifying that the ratings are based on Best Buy user reviews and weighted by number of reviews. As for additional changes, she said:

We still have to add a bit more sophistication so that complete “ties” don’t return different ordinal rankings (the first column in our table); sometimes it’s possible that there really isn’t a unique “top” ordinal value.

If two smartphones both have perfect 5’s based on the same number of reviews, we shouldn’t list one as 1st and the other as 2nd simply based on how our database query returns them. But this will take more time, since it could potentially affect everything that we can possibly rank.
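
The remaining fix Buck describes, giving complete ties the same ordinal, could look something like the following Python sketch. It uses standard competition ranking (1, 1, 3), which is my assumption; the email doesn’t say which tie convention Wolfram Alpha plans to adopt, and the function and sample data are hypothetical.

```python
def rank_with_ties(items):
    """Assign ordinals so that complete ties share a rank.

    items is a list of (name, score, reviews) tuples. Two items are a
    complete tie when both score and review count match.
    """
    # Highest score first, then most reviews; sorted() is stable, so
    # fully tied items keep their input order.
    ordered = sorted(items, key=lambda it: (-it[1], -it[2]))

    ranked = []
    prev_key, prev_rank = None, 0
    for position, (name, score, reviews) in enumerate(ordered, start=1):
        key = (score, reviews)
        # Reuse the previous rank for a complete tie; otherwise the
        # rank is the item's 1-based position in the ordered list.
        rank = prev_rank if key == prev_key else position
        ranked.append((rank, name))
        prev_key, prev_rank = key, rank
    return ranked


phones = [
    ("Phone A", 5.0, 10),
    ("Phone B", 5.0, 10),
    ("Phone C", 4.8, 50),
]

for rank, name in rank_with_ties(phones):
    print(rank, name)
```

Here the two phones with identical scores and review counts are both listed as 1st, and the next phone is listed as 3rd rather than 2nd.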

How’s It Look?

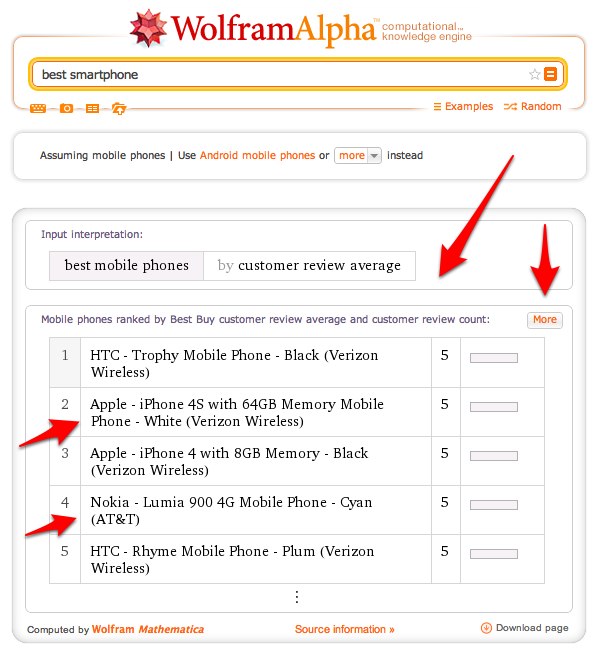

Now let’s look at the current ratings:

Keep doing that, and eventually you’ll see the 30th-ranked phone finally have a score less than 5. So are all those with a 5 tied? Buck told me, in a follow-up shortly after I posted this article:

These are real ties. Rounding does happen, but only at the fifth digits. If there is a tie, we use a secondary criteria to resolve the tie.

So while it looks like the iPhone beats the Lumia, and the HTC Trophy Android phone beats them both, apparently they’re all tied for first.

Of course, you won’t be getting these results on Siri. Whatever caused Siri to send searches on this topic to Wolfram Alpha, apparently for only a small number of people, seems to have stopped.

As for the jokey responses that people get, like this:

Some have assumed Apple just started doing this because of the Lumia situation. That’s not the case. These existed way back when Siri first launched on the iPhone 4s, as I covered in my earlier story: Apple Siri’s Recommending Nokia? Then Nokia’s Recommending Android & iPhone, I Guess.

Finally, Buck said that Best Buy data is updated at Wolfram Alpha every two weeks, so there will be a slight lag behind Best Buy itself, at times.

