Mastering NLP for modern SEO: Techniques, tools and strategies

Learn how modern search engines like Google use advanced NLP to understand searches, match queries to content, and rank results.

SEO has come a long way from the days of keyword stuffing. Modern search engines like Google now rely on advanced natural language processing (NLP) to understand searches and match them to relevant content.

This article will explain key NLP concepts shaping modern SEO so you can better optimize your content. We’ll cover:

- How machines process human language as signals and noise, not words and concepts.

- The limitations of outdated latent semantic indexing (LSI) techniques.

- The growing role of entities – specifically named entity recognition – in search.

- How emerging NLP methods like neural matching and BERT go beyond keywords to understand user intent.

- New frontiers like large language models (LLMs) and retrieval-augmented generation (RAG).

How do machines understand language?

It helps to start with how and why machines analyze the text they receive as input.

When you press the “E” key on your keyboard, your computer doesn’t directly understand what “E” means. Instead, it sends a message to a low-level program, which instructs the computer on how to manipulate and process electrical signals coming from the keyboard.

This program then translates the signal into actions the computer can understand, like displaying the letter “E” on the screen or performing other tasks related to that input.

This simplified explanation illustrates that computers work with numbers and signals, not with concepts like letters and words.

When it comes to NLP, the challenge is teaching these machines to understand, interpret, and generate human language, which is inherently nuanced and complex.

Foundational techniques allow computers to start “understanding” text by recognizing patterns and relationships between these numerical representations of words. They include:

- Tokenization, where text is broken down into constituent parts (like words or phrases).

- Vectorization, where words are converted into numerical values.
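To make the two steps above concrete, here is a minimal sketch using only Python's standard library. The function names (`tokenize`, `build_vocabulary`, `vectorize`) and the two sample sentences are made up for illustration, and the simple word-count vectors shown here are far cruder than what a search engine would use.

```python
import re
from collections import Counter

def tokenize(text):
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z0-9']+", text.lower())

def build_vocabulary(token_lists):
    """Map every distinct token to a column index, in sorted order."""
    vocab = sorted({tok for tokens in token_lists for tok in tokens})
    return {tok: i for i, tok in enumerate(vocab)}

def vectorize(tokens, vocabulary):
    """Turn a token list into a count vector (a bag of words)."""
    counts = Counter(tokens)
    return [counts.get(tok, 0) for tok in vocabulary]

# Illustrative sample sentences (not from any real dataset)
docs = ["The cat sat on the mat.", "The dog sat on the log."]
token_lists = [tokenize(d) for d in docs]
vocabulary = build_vocabulary(token_lists)
vectors = [vectorize(t, vocabulary) for t in token_lists]

print(vocabulary)
print(vectors)
```

Each sentence ends up as a list of numbers, one slot per known word. From here on, the machine compares lists of numbers and never sees "cat" or "dog" as ideas.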

The point is that algorithms, even highly advanced ones, don’t perceive words as concepts or language; they see them as signals and noise. Essentially, we’re changing the electronic charge of very expensive sand.

LSI keywords: Myths and realities

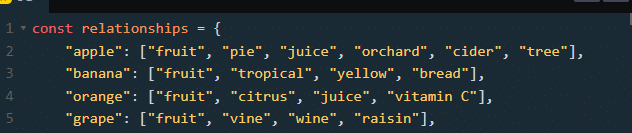

Latent semantic indexing (LSI) is a term thrown around a lot in SEO circles. The idea is that certain keywords or phrases are conceptually related to your main keyword, and including them in your content helps search engines understand your page better.

Simply put, LSI works like a library sorting system for text. Developed in the 1980s, it assists computers in grasping the connections between words and concepts across a bunch of documents.

But the “bunch of documents” is not Google’s entire index. LSI was a technique designed to find similarities in a small group of documents that are similar to each other.

Here’s how it works: Let’s say you’re researching “climate change.” A basic keyword search might give you documents with “climate change” mentioned explicitly.

But what about those valuable pieces discussing “global warming,” “carbon footprint,” or “greenhouse gases”?

That’s where LSI comes in handy. It identifies those semantically related terms, ensuring you don’t miss out on relevant information even if the exact phrase isn’t used.

The thing is, Google isn’t using a 1980s library technique to rank content. They have more expensive equipment than that.

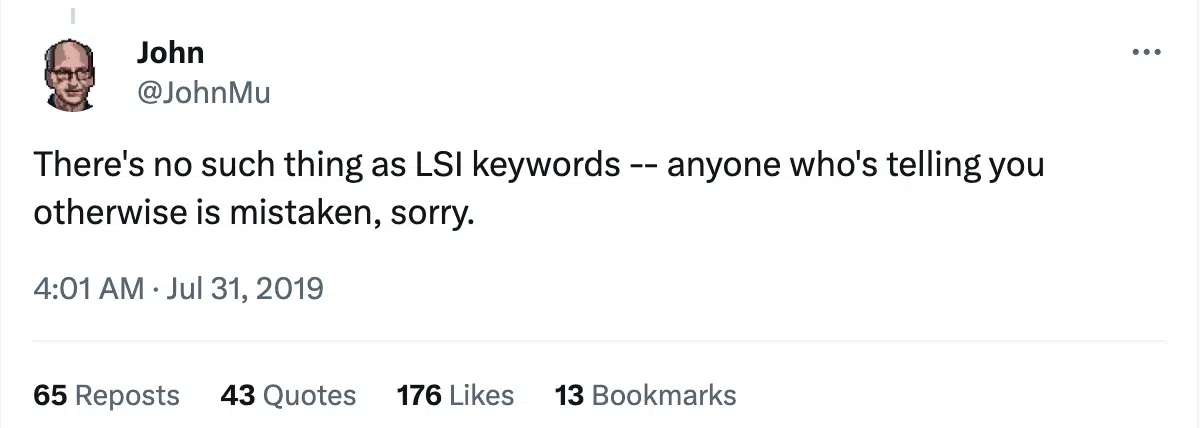

Despite the common misconception, LSI keywords aren’t directly used in modern SEO or by search engines like Google. LSI is an outdated term, and Google doesn’t use something like a semantic index.

However, semantic understanding and other machine learning techniques can be useful. The shift away from approaches like LSI has paved the way for the more advanced NLP techniques at the core of how search engines analyze and interpret web content today.

So, let’s go beyond just keywords. We have machines that interpret language in peculiar ways, and we know Google uses techniques to align content with user queries. But what comes after the basic keyword match?

That’s where entities, neural matching, and advanced NLP techniques in today’s search engines come into play.


The role of entities in search

Entities are a cornerstone of NLP and a key focus for SEO. Google uses entities in two main ways:

- Knowledge graph entities: These are well-defined entities, like famous authors, historical events, landmarks, etc., that exist within Google’s Knowledge Graph. They’re easily identifiable and often come up in search results with rich snippets or knowledge panels.

- Lower-case entities: These are recognized by Google but aren’t prominent enough to have a dedicated spot in the Knowledge Graph. Google’s algorithms can still identify these entities, such as lesser-known names or specific concepts related to your content.

Understanding the “web of entities” is crucial. It helps us craft content that aligns with user goals and queries, making it more likely for our content to be deemed relevant by search engines.


Understanding named entity recognition

Named entity recognition (NER) is an NLP technique that automatically identifies named entities in text and classifies them into predefined categories, such as names of people, organizations, and locations.

Let’s take the example: “Sara bought the Torment Vortex Corp. in 2016.”

A human effortlessly recognizes:

- “Sara” as a person.

- “Torment Vortex Corp.” as a company.

- “2016” as a time.

NER is a way to get systems to understand that context.

There are different algorithms used in NER:

- Rule-based systems: Rely on handcrafted rules to identify entities based on patterns. If it looks like a date, it’s a date. If it looks like money, it’s money.

- Statistical models: These learn from a labeled dataset. Someone goes through and labels all of the Saras, Torment Vortex Corps, and 2016s as their respective entity types. When new text shows up, other names, companies, and dates that fit similar patterns will hopefully be labeled correctly. Examples include Hidden Markov Models, Maximum Entropy Models, and Conditional Random Fields.

- Deep learning models: Recurrent neural networks, long short-term memory networks, and transformers have all been used for NER to capture complex patterns in text data.

Large, fast-moving search engines like Google likely use a combination of the above, letting them react to new entities as they enter the internet ecosystem.
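The rule-based approach from the list above can be sketched with a few regular expressions. The patterns and labels below are hypothetical and deliberately tiny, and the example sentence is extended with a made-up price so the money rule has something to match. Treat it as a rough illustration of "if it looks like a date, it's a date," not a production system.

```python
import re

# Hypothetical hand-written patterns; real systems use far larger rule sets.
RULES = [
    ("MONEY", re.compile(r"\$\d[\d,]*(?:\.\d+)?")),
    ("DATE", re.compile(r"\b(?:19|20)\d{2}\b")),
    ("ORG", re.compile(r"\b(?:[A-Z][a-z]+ )+(?:Corp|Inc|Ltd|LLC)\b\.?")),
]

def find_entities(text):
    """Return (start, label, matched_text) tuples for every rule that fires, in text order."""
    found = []
    for label, pattern in RULES:
        for match in pattern.finditer(text):
            found.append((match.start(), label, match.group()))
    return sorted(found)

# The price at the end is invented for illustration.
sentence = "Sara bought the Torment Vortex Corp. in 2016 for $5,000,000."
for _, label, text in find_entities(sentence):
    print(label, "->", text)
```

Notice that "Sara" goes unlabeled: no simple pattern separates a person's name from any other capitalized word. That gap is one reason statistical and deep learning models took over.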

Here’s a simplified example using Python’s NLTK library, whose built-in chunker is a pre-trained statistical (maximum entropy) model:

import nltk

from nltk import ne_chunk, pos_tag

from nltk.tokenize import word_tokenize

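# Download the tokenizer, tagger, and chunker data (resource names can differ between NLTK versions; if a LookupError appears, download the resource it names)

nltk.download('punkt')

nltk.download('averaged_perceptron_tagger')

nltk.download('maxent_ne_chunker')

nltk.download('words')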

sentence = "Albert Einstein was born in Ulm, Germany in 1879."

# Tokenize and part-of-speech tagging

tokens = word_tokenize(sentence)

tags = pos_tag(tokens)

# Named entity recognition

entities = ne_chunk(tags)

print(entities)

For a more advanced approach using pre-trained models, you might turn to spaCy:

import spacy

# Load the pre-trained model (install it first with: python -m spacy download en_core_web_sm)

nlp = spacy.load("en_core_web_sm")

sentence = "Albert Einstein was born in Ulm, Germany in 1879."

# Process the text

doc = nlp(sentence)

# Iterate over the detected entities

for ent in doc.ents:

    print(ent.text, ent.label_)

These examples illustrate the basic and more advanced approaches to NER.

Starting with simple rule-based or statistical models can provide foundational insights, while pre-trained deep learning models offer a path to more sophisticated and accurate entity recognition.

Entities in NLP, entities in SEO, and named entities in SEO

“Entity” is an NLP term, and Google uses entities in Search in two ways:

- Some entities exist in the Knowledge Graph (for example, see authors).

- There are lower-case entities recognized by Google but not yet given that distinction. (Google can tell names, even if they’re not famous people.)

Understanding this web of entities can help us understand user goals with our content.

Neural matching, BERT, and other NLP techniques from Google

Google’s quest to understand the nuance of human language has led it to adopt several cutting-edge NLP techniques.

Two of the most talked-about in recent years are neural matching and BERT. Let’s dive into what these are and how they revolutionize search.

Neural matching: Understanding beyond keywords

Imagine looking for “places to chill on a sunny day.”

The old Google might have homed in on “places” and “sunny day,” possibly returning results for weather websites or outdoor gear shops.

Enter neural matching – it’s like Google’s attempt to read between the lines, understanding that you’re probably looking for a park or a beach rather than today’s UV index.

BERT: Breaking down complex queries

BERT (Bidirectional Encoder Representations from Transformers) is another leap forward. If neural matching helps Google read between the lines, BERT helps it understand the whole story.

BERT can process one word in relation to all the other words in a sentence rather than one by one in order. This means it can grasp each word’s context more accurately. The relationships and their order matter.

“Best hotels with pools” and “great pools at hotels” might have subtle semantic differences: think about “Only he drove her to school today” vs. “he drove only her to school today.”
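A quick way to see why order matters is to compare the two "only" sentences with an order-blind word count and with adjacent word pairs. This sketch is not how BERT works internally. It only shows, with hypothetical helper names (`bag_of_words`, `bigrams`), what a pure keyword view throws away.

```python
from collections import Counter

def bag_of_words(sentence):
    """Count each word, ignoring case and order."""
    return Counter(sentence.lower().split())

def bigrams(sentence):
    """Return adjacent word pairs, which preserve local word order."""
    words = sentence.lower().split()
    return list(zip(words, words[1:]))

a = "Only he drove her to school today"
b = "he drove only her to school today"

# Same words, same counts: a keyword view cannot tell the sentences apart.
print(bag_of_words(a) == bag_of_words(b))

# The neighboring-word pairs differ, so the order information is recoverable.
print(set(bigrams(a)) == set(bigrams(b)))
print(set(bigrams(a)) - set(bigrams(b)))
```

To a keyword counter the two sentences are identical, even though they mean different things. Models that look at each word in relation to its neighbors can keep that difference.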

So, let’s think about this with regard to our previous, more primitive systems.

Machine learning works by taking large amounts of data, usually represented by tokens and vectors (numbers and relationships between those numbers), and iterating on that data to learn patterns.

With techniques like neural matching and BERT, Google is no longer just looking at the direct match between the search query and keywords found on web pages.

It’s trying to understand the intent behind the query and how different words relate to each other to provide results that truly meet the user’s needs.

For example, given a search for “cold head remedies,” Google can understand that the user is seeking treatment for the symptoms of a cold rather than content about literal “cold” or “head” topics.

The context in which words are used and their relation to the topic matter significantly. This doesn’t necessarily mean keyword stuffing is dead, but the types of keywords to stuff are different.

You shouldn’t just look at what is ranking, but related ideas, queries, and questions for completeness. Content that answers the query in a comprehensive, contextually relevant manner is favored.

Understanding the user’s intent behind queries is more crucial than ever. Google’s advanced NLP techniques match content with the user’s intent, whether informational, navigational, transactional, or commercial.

Optimizing content to meet these intents – by answering questions and providing guides, reviews, or product pages as appropriate – can improve search performance.

But also understand how and why your niche would rank for that query intent.

A user looking for comparisons of cars is unlikely to want a biased view, but if you are willing to share information from users and be critical and honest, you’re more likely to take that spot.

Large language models (LLMs) and retrieval-augmented generation (RAG)

Moving beyond traditional NLP techniques, the digital landscape is now embracing large language models (LLMs) like GPT (Generative Pre-trained Transformer) and innovative approaches like retrieval-augmented generation (RAG).

These technologies are setting new benchmarks in how machines understand and generate human language.

LLMs: Beyond basic understanding

LLMs like GPT are trained on vast datasets, encompassing a wide range of internet text. Their strength lies in their ability to predict the next word in a sentence based on the context provided by the words that precede it. This ability makes them incredibly versatile for generating human-like text across various topics and styles.

However, it’s crucial to remember that LLMs are not all-knowing oracles. They don’t access live internet data or possess an inherent understanding of facts. Instead, they generate responses based on patterns learned during training.

So, while they can produce remarkably coherent and contextually appropriate text, their outputs must be fact-checked, especially for accuracy and timeliness.
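The "predict the next word" idea can be shown at toy scale with a bigram counter. This is a deliberately simple stand-in: real LLMs use transformer networks over far longer contexts. The tiny corpus and the function names (`train_bigram_model`, `predict_next`) are invented for illustration.

```python
from collections import Counter, defaultdict

def train_bigram_model(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following

def predict_next(model, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = model.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]

# Made-up training text; a real model sees billions of words.
corpus = (
    "search engines rank pages . "
    "search engines crawl pages . "
    "search engines rank results ."
)
model = train_bigram_model(corpus)
print(predict_next(model, "engines"))
print(predict_next(model, "search"))
print(predict_next(model, "python"))
```

The model can only repeat patterns it saw during training and has nothing to say about a word it never met. That is the same limitation, in miniature, that makes fact-checking LLM output necessary.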

RAG: Enhancing accuracy with retrieval

This is where retrieval-augmented generation (RAG) comes into play. RAG combines the generative capabilities of LLMs with the precision of information retrieval.

In a typical RAG setup, the system first fetches relevant information from a database or the internet and supplies it to the LLM alongside the prompt, so the generated text can draw on that material. This makes the final output more likely to be accurate and informed by reliable data, though it still depends on the quality of what is retrieved.
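Here is a minimal sketch of the retrieval half of that idea, assuming one common arrangement in which relevant text is fetched first and placed ahead of the question. The three-sentence "knowledge base," the word-overlap scoring, and the prompt wording are all hypothetical. The call to an actual language model is left out entirely.

```python
import re

# Hypothetical mini knowledge base standing in for a search index or database.
DOCUMENTS = [
    "BERT stands for Bidirectional Encoder Representations from Transformers.",
    "Latent semantic indexing was developed in the 1980s.",
    "Named entity recognition classifies names of people, organizations, and locations.",
]

def words(text):
    """Lowercase a string and return its set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, documents, top_k=1):
    """Rank documents by how many words they share with the question."""
    q = words(question)
    ranked = sorted(documents, key=lambda d: len(q & words(d)), reverse=True)
    return ranked[:top_k]

def build_prompt(question, documents):
    """Place the retrieved text ahead of the question for a model to use."""
    context = "\n".join(retrieve(question, documents))
    return f"Context:\n{context}\n\nQuestion: {question}\nAnswer using only the context."

print(build_prompt("When was latent semantic indexing developed?", DOCUMENTS))
```

Real systems usually swap the word-overlap score for vector similarity, but the shape is the same: find supporting text, then let the model write with it in view.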

Applications in SEO

Understanding and leveraging these technologies can open up new avenues for content creation and optimization.

- With LLMs, you can generate diverse and engaging content that resonates with readers and addresses their queries comprehensively.

- RAG can further enhance this content by ensuring its factual accuracy and improving its credibility and value to the audience.

This is also what Search Generative Experience (SGE) is: RAG and LLMs together. It’s why “generated” results often skew close to ranking text and why SGE results may seem odd or cobbled together.

All this leads to content that tends toward mediocrity and reinforces biases and stereotypes. LLMs, trained on internet data, produce the median output of that data and then retrieve similarly generated data. This is what they call “enshittification.”

4 ways to use NLP techniques on your own content

Using NLP techniques on your own content involves leveraging the power of machine understanding to enhance your SEO strategy. Here’s how you can get started.

1. Identify key entities in your content

Utilize NLP tools to detect named entities within your content. This could include names of people, organizations, places, dates, and more.

Understanding the entities present can help you ensure your content is rich and informative, addressing the topics your audience cares about. This can help you include rich contextual links in your content.

2. Analyze user intent

Use NLP to classify the intent behind searches related to your content.

Are users looking for information, aiming to make a purchase, or seeking a specific service? Tailoring your content to match these intents can significantly boost your SEO performance.
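As a rough starting point, intent can be approximated with cue words before reaching for a trained classifier. The cue lists and the `classify_intent` function below are hypothetical. In practice you would build the lists from your own query data.

```python
# Hypothetical cue words per intent; a real list would come from your own query data.
INTENT_CUES = {
    "transactional": {"buy", "order", "price", "cheap", "discount", "coupon"},
    "commercial": {"best", "review", "reviews", "vs", "compare", "top"},
    "navigational": {"login", "website", "homepage", "contact"},
    "informational": {"how", "what", "why", "guide", "tutorial"},
}

def classify_intent(query):
    """Return the intent whose cue words overlap most with the query."""
    tokens = set(query.lower().replace("?", "").split())
    scores = {intent: len(tokens & cues) for intent, cues in INTENT_CUES.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else "unknown"

for q in ["how do i start with python programming?",
          "buy running shoes cheap",
          "best hotels with pools"]:
    print(q, "->", classify_intent(q))
```

A keyword heuristic like this will misfire on ambiguous queries, so it works best as a first pass for grouping queries before a manual review.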

3. Improve readability and engagement

NLP tools can assess the readability of your content, suggesting optimizations to make it more accessible and engaging to your audience.

Simple language, clear structure, and focused messaging, informed by NLP analysis, can increase time spent on your site and reduce bounce rates. One option is the readability library, which you can install with pip.

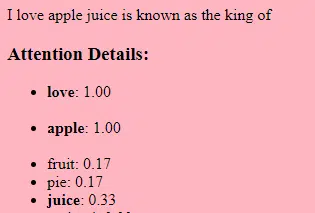
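If you want to see what a readability score measures, the widely used Flesch Reading Ease formula is short enough to write by hand. The sketch below does not use the readability library's API. The syllable counter is a crude vowel-group heuristic (it overcounts silent letters), and the two sample texts are invented for illustration.

```python
import re

def count_syllables(word):
    """Rough heuristic: count groups of consecutive vowels, minimum one."""
    groups = re.findall(r"[aeiouy]+", word.lower())
    return max(1, len(groups))

def flesch_reading_ease(text):
    """Flesch Reading Ease: higher scores mean easier text."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one word")
    syllables = sum(count_syllables(w) for w in words)
    return (206.835
            - 1.015 * (len(words) / len(sentences))
            - 84.6 * (syllables / len(words)))

# Made-up sample texts for comparison
simple = "The cat sat on the mat. It was a good day."
dense = "Comprehensive organizational considerations necessitate interdisciplinary collaboration."
print(round(flesch_reading_ease(simple), 1))
print(round(flesch_reading_ease(dense), 1))
```

Short sentences and short words push the score up. Long, multi-syllable sentences push it down, sometimes below zero.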

4. Semantic analysis for content expansion

Beyond keyword density, semantic analysis can uncover related concepts and topics that you may not have included in your original content.

Integrating these related topics can make your content more comprehensive and improve its relevance to various search queries. You can use techniques like TF-IDF and LDA, with libraries such as NLTK, spaCy, and Gensim.
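To show what TF-IDF actually computes, here is a small standard-library sketch. It uses the plain textbook weighting (term frequency times the log of inverse document frequency); libraries often apply smoothed variants, so their numbers will differ. The three short "documents" are invented, and the code assumes none of them is empty.

```python
import math
import re
from collections import Counter

def tokenize(text):
    """Lowercase the text and keep alphabetic word tokens."""
    return re.findall(r"[a-z]+", text.lower())

def tf_idf(documents):
    """Return one {term: score} dict per document."""
    token_lists = [tokenize(d) for d in documents]
    n_docs = len(token_lists)
    # Document frequency: in how many documents each term appears.
    doc_freq = Counter(term for tokens in token_lists for term in set(tokens))
    scores = []
    for tokens in token_lists:
        counts = Counter(tokens)
        scores.append({
            term: (count / len(tokens)) * math.log(n_docs / doc_freq[term])
            for term, count in counts.items()
        })
    return scores

# Invented sample documents on one shared topic
docs = [
    "climate change and global warming",
    "climate policy and carbon footprint",
    "climate models and greenhouse gases",
]
for doc_scores in tf_idf(docs):
    top = sorted(doc_scores, key=doc_scores.get, reverse=True)[:2]
    print(top)
```

Words that appear in every document, like "climate" here, score zero, while terms unique to one document rise to the top. Those distinctive terms are often the related concepts worth checking your own content against.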

Below are some scripts to get started:

Keyword and entity extraction with Python’s NLTK

import nltk

from nltk.tokenize import word_tokenize

from nltk.tag import pos_tag

from nltk.chunk import ne_chunk

nltk.download('punkt')

nltk.download('averaged_perceptron_tagger')

nltk.download('maxent_ne_chunker')

nltk.download('words')

sentence = "Google's AI algorithm BERT helps understand complex search queries."

# Tokenize and part-of-speech tagging

tokens = word_tokenize(sentence)

tags = pos_tag(tokens)

# Named entity recognition

entities = ne_chunk(tags)

print(entities)

Understanding user intent with spaCy

import spacy

# Load the small English pipeline (tokenizer, tagger, parser, and NER)

nlp = spacy.load("en_core_web_sm")

text = "How do I start with Python programming?"

# Process the text

doc = nlp(text)

# Entity recognition for quick topic identification

for entity in doc.ents:

    print(entity.text, entity.label_)

# Leveraging verbs and nouns to understand user intent

verbs = [token.lemma_ for token in doc if token.pos_ == "VERB"]

nouns = [token.lemma_ for token in doc if token.pos_ == "NOUN"]

print("Verbs:", verbs)

print("Nouns:", nouns)