Do You Hate “Not Provided”? Not So Fast… It May Be a Blessing In Disguise!

Things have certainly changed since Google took away keyword referral data, but columnist Stephan Spencer argues that these changes were for the better.

It’s no surprise to us SEOs that Google has been obscuring referring keywords from webmasters (a.k.a. “not provided”). Indeed, it has been the talk of SEO since before Google’s big switch to secure search, because it changes so much of how we approach keyword research and analysis.

One of my favorite tools for uncovering keywords in this brave new world of “not provided” is Searchmetrics, a search analytics platform that won “Best SEO software” in October at the U.S. Search Awards.

I recently had the opportunity to interview Searchmetrics’ founder, Marcus Tober, about the implications of “not provided,” potential workarounds and the future of keyword analysis and SEO in general.

Tober had a surprisingly user-centered mentality about the “not provided” issue, in contrast to most of the discussion, which has usually been all about the marketer’s woes. He encourages marketers not just to seek a direct solution to “not provided” by simply finding keyword data (although Searchmetrics does indeed supply it), but also to let it be a catalyst for reassessing our sites’ relationship to keywords.

Tober pointed out that, to the searcher, “not provided” isn’t an issue at all. There have been a lot of questions and “answers” circulating about why Google would enact secure search. Perhaps it is because, ultimately, targeting one specific keyword on a page does not result in the best possible user experience and, instead, tends to produce ugly, unhelpful keyword stuffing.

Rather, getting rid of the ability to target one specific keyword from query to conversion may encourage sites to move toward a content strategy that provides the most helpful information possible on every page.

Instead of targeting one keyword, pursue a breadth of information that makes the page optimized for the searcher, not the search engine. Searchmetrics does, indeed, provide ways to get most of the data that became unavailable. However, that should not be the end-all be-all of your keyword action plan.

First (because you know you want it), let’s go over how you can indeed get your data “back.” Then (because you need it), let’s go over how you should actually be using this data.

Keyword Data, Revealed!

Searchmetrics is capable of analyzing keyword reach by accessing its enormous library of keywords (over 600 million), which is the biggest in the biz.

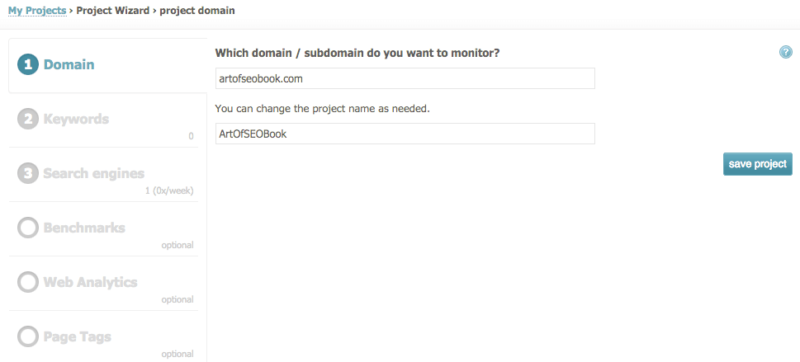

When you begin a campaign, you can actually just input a URL, a domain or subdomain, and Searchmetrics gives you the option of putting in your own keywords for the domain you want to track, or it will scrape the page for keywords that are relevant and that it is ranking for. You then can monitor these keywords and create campaign goals.

“Not provided” took away the ability to evaluate query-to-conversion calculations; however, it did not take away the ability to scrape the browser and take snapshots of the SERP. Through a series of parameters that evaluate the SERP as a whole, Searchmetrics comes up with a dynamic click calculation along the lines of “there are x users clicking on yourpage.com based on the site.”

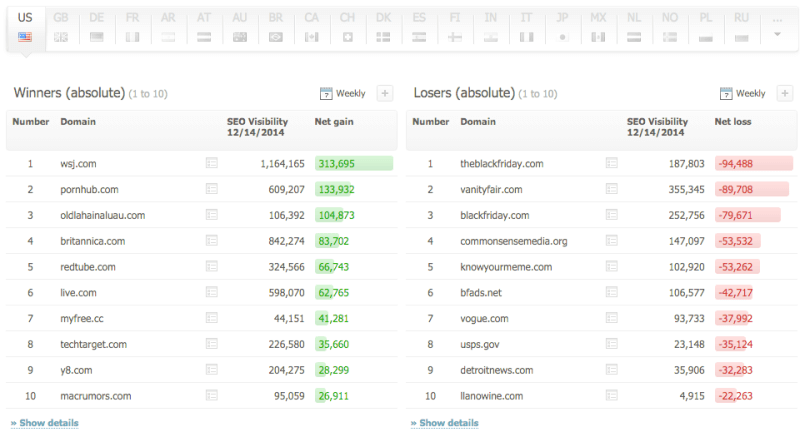

This is also how SEO Visibility is calculated. SEO Visibility is the Searchmetrics metric that combines a large number of search ranking calculations into one “visibility” factor. SEO Visibility is now virtually accepted as an industry standard for calculating relative SEO winners and losers.
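To make the idea concrete, here is a minimal sketch of how a click estimate and a visibility-style score could be derived from ranking snapshots. This is not Searchmetrics’ actual formula, which isn’t public in this article; the click-through rates by position, the `Ranking` type and both function names are assumptions invented purely for illustration.

```python
from dataclasses import dataclass

# Hypothetical click-through rates by organic position.
# These numbers are illustrative assumptions, not measured data.
ASSUMED_CTR_BY_POSITION = {
    1: 0.30, 2: 0.15, 3: 0.10, 4: 0.07, 5: 0.05,
    6: 0.04, 7: 0.03, 8: 0.02, 9: 0.02, 10: 0.01,
}


@dataclass
class Ranking:
    keyword: str
    monthly_searches: int  # assumed search volume for the keyword
    position: int          # organic position observed in a SERP snapshot


def estimated_clicks(ranking: Ranking) -> float:
    """Estimate monthly clicks as search volume times the assumed CTR."""
    ctr = ASSUMED_CTR_BY_POSITION.get(ranking.position, 0.0)
    return ranking.monthly_searches * ctr


def visibility_score(rankings: list[Ranking]) -> float:
    """Sum the estimated clicks across every tracked keyword."""
    return sum(estimated_clicks(r) for r in rankings)


rankings = [
    Ranking("accounting software", 1000, 1),
    Ranking("small business accounting", 500, 3),
    Ranking("invoice templates", 2000, 15),  # beyond page one: counts as zero
]
print(round(visibility_score(rankings), 1))
```

The point of the sketch is that none of the inputs depend on referral keywords: search volume and position can both be observed from the outside, which is why “not provided” doesn’t block this kind of estimate.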

You can also integrate your campaign with Google Analytics, Adobe Analytics, all major analytics companies, and even Google Webmaster Tools. This feature is totally free, and I absolutely recommend utilizing it, as any keyword conversion info is potential informational gold and allows you to integrate other information like social traffic into the keyword research process. Webmaster Tools will also give you a more in-depth look at data for your past 90 days.

Keyword Optimization 2.0

The old SEO strategy of optimizing one page for a specific keyword and then optimizing another page for another keyword is, frankly, out of style. “That paradigm is gone,” Tober insists. “The old methodology was to put one instance of the keyword in the title tag, once in the H1, a couple in the page copy. And you end up keyword stuffing.”

Tober agrees that this is no longer the methodology — not only because of “style,” but because it is no longer effective in SEO.

When Google released the Hummingbird algorithm, its engineers claimed that Google is now working to understand the meaning of a query, rather than simply matching strings of keywords. If Google is able to understand the meaning of a query, this also means it is able to understand the meaning of content.

This means that keyword stuffing will be unnecessary, as Google will be able to identify synonymous words and phrases. Searchmetrics had thousands of examples of similar keywords for which 9 of the top 10 results were identical after Hummingbird.
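If you want to run this kind of comparison yourself, the check is simple: take the top 10 URLs for two similar queries and count how many appear in both lists. The sketch below assumes you have already collected the result URLs by some means; the function name and the example URLs are hypothetical.

```python
def top10_overlap(results_a: list[str], results_b: list[str]) -> int:
    """Count URLs that appear in the top 10 results of both queries."""
    return len(set(results_a[:10]) & set(results_b[:10]))


# Hypothetical result lists for two near-synonymous queries.
query_one = [f"https://example.com/page-{i}" for i in range(1, 11)]
query_two = query_one[:9] + ["https://example.org/other-page"]

print(top10_overlap(query_one, query_two))
```

A high overlap between two phrasings suggests the engine is treating them as the same intent, so a separate page for each phrasing is unlikely to help.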

Google’s intention is to be able to understand well-built, useful, informative content such that it can determine the entire body of keywords a page should rank for, rather than just the keywords that a page is overtly “optimized” to rank for.

Because of this, Tober has crafted Searchmetrics’ capabilities to address “not provided” further — to give the user supplementary resources in creating related, holistic content pertaining to the site’s topic.

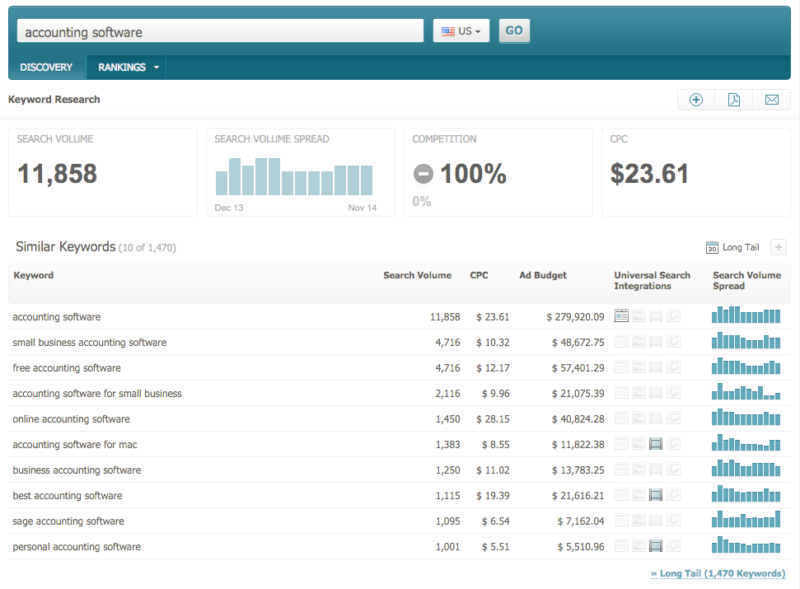

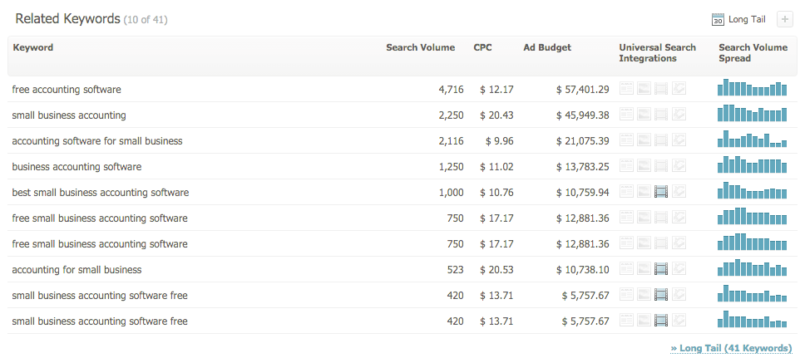

This can be especially effective for optimizing your landing pages. Let’s say your landing page is selling small business accounting software. You simply plug in the keyword at the top of the Searchmetrics interface, and the database searches its 600 million keyword inventory to come up with relevant, related keywords and concepts that you can add to the page to provide better, more user-friendly content.

Tober notes that his product is a vector database; consequently, the more specific you can get with your keyword target or topic, the more confident the software can be that those keywords belong with that page.
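For readers unfamiliar with the vector idea, here is a toy illustration of how related keywords can be ranked by similarity. It is only a sketch of the general technique (cosine similarity between keyword vectors), not a description of Searchmetrics’ internals; the three-dimensional vectors and the `related_keywords` function are made up for the example.

```python
import math

# Hypothetical keyword vectors. Real systems use far more dimensions;
# these values are invented purely to illustrate the technique.
KEYWORD_VECTORS = {
    "small business accounting software": [0.9, 0.8, 0.1],
    "invoicing software": [0.8, 0.7, 0.2],
    "payroll for small business": [0.7, 0.9, 0.1],
    "running shoes": [0.0, 0.1, 0.9],
}


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def related_keywords(seed: str, limit: int = 2) -> list[str]:
    """Return the keywords most similar to the seed, best match first."""
    seed_vector = KEYWORD_VECTORS[seed]
    scored = [
        (cosine_similarity(seed_vector, vector), keyword)
        for keyword, vector in KEYWORD_VECTORS.items()
        if keyword != seed
    ]
    scored.sort(reverse=True)
    return [keyword for _, keyword in scored[:limit]]


print(related_keywords("small business accounting software"))
```

In this toy data the accounting-related phrases score close to 1.0 against the seed while the unrelated phrase scores far lower, which is the intuition behind Tober’s point that a specific seed yields more confident matches.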

However, if you are looking to expand the breadth of your site’s reach, it may be a great idea to put in a more general term to create ideas for new pages, features or blog posts.

What This Means For The Future Of Search

“Not provided” signals a future that may, indeed, already be here. Tober points out the clear winners and clear losers in the past couple of years, with trends that show an obvious shift in SEO from the outright technical to the user experience-oriented.

“Before it was more, ‘How can I create a campaign to get as many links as possible?’ or, ‘How can I cheat the system to get as many good links for cheaply as possible?’

“However, now it is becoming more advanced,” Tober says. “Only the companies that get decent insights, with an algorithmic approach, will be those who are successful. Look at all these retailers (Amazon, Best Buy, Target, eBay, etc.) and you’ll notice they’re competing for the exact same keywords.”

Because there’s so much competition, the “not provided” situation guides us to instead reverse engineer our pages to achieve a better user experience than that of the competition. “The reason Amazon is so successful is that Amazon delivers diversity.”

Tober claims, “It’s not just a landing page that has just the product and nothing else, you have reviews, you have related products, you have really good content. Companies like Best Buy messed up because they didn’t understand how important the diversity of content via subtopics is. Amazon invested in that very early.”

So, if you’re not Amazon and aren’t an incredible fortune-teller of the future of search, or you don’t have the capability to time travel 10 years into the past to invest in user experience, Tober sums up his advice confidently as:

[blockquote]“Your page might have a lot of stuff going for it, but there is more that you should cover… Not just a couple of terms, but many terms that belong together.”[/blockquote]

Keyword research should be about discovering what is working for you, but then using that information further to extrapolate what could work better for you. Think more holistically about each page.

What other information could you provide on a page, or link to from a page, that would make it a more useful resource on the topic it’s focused on? How well is the search engine going to be able to determine exactly what your page is “about”? Is it focused on providing information on a specific topic or product? Does it have information that is “off-topic” and thus may confuse the engines as to the theme of the page?

Pages and sites that can answer these questions and provide true value to users are more likely to rank well in the future than pages that are narrowly optimized to rank for a single, specific keyword phrase.

Contributing authors are invited to create content for Search Engine Land and are chosen for their expertise and contribution to the search community. Our contributors work under the oversight of the editorial staff and contributions are checked for quality and relevance to our readers. The opinions they express are their own.
