Bing explains how AI-powered intelligent answers can show users two points of view for the same query

Multi-perspective answers are another example of how Bing is using artificial intelligence to deliver richer search results.

In December last year, Bing introduced several AI-powered search features, one being intelligent answers. This week, Mir Rosenberg from the Bing search team published more detail on how one type of intelligent answer, called multi-perspective answers, works.

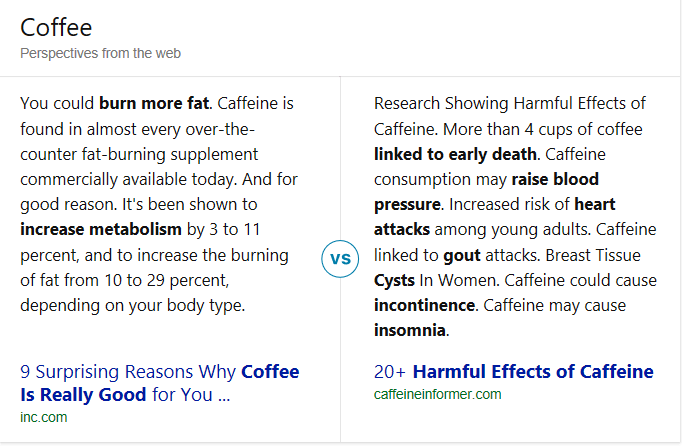

Bing multi-perspective answers provide two different and often opposing perspectives on a topic. “There are many questions where getting just one point of view is not sufficient, convenient or comprehensive,” Rosenberg wrote in the blog post. “We believe that your search engine should inform you when there are different viewpoints to answer a question you have, and it should help you save research time while expanding your knowledge with the rich content available on the Web.”

Below are some examples of Bing multi-perspective answer cards as displayed on desktop. (On mobile, the cards are shown vertically.)

How Bing multi-perspective answers work

Intelligent answers were unveiled at a Microsoft AI event in December, where the company outlined several ways in which artificial intelligence is being infused into the search engine to provide richer results, all under the umbrella of intelligent search. In addition to intelligent answers, which are aimed at answering more complicated questions, Bing introduced intelligent image search, which makes images “shoppable,” and conversational search, in which it suggests query refinements for broad searches.

For multi-perspective answers, Rosenberg explains that Bing uses several learning models to “prioritize reputable content from authoritative, high-quality websites that are relevant to the subject in question, have easily discoverable content and minimal to no distractions on the site.”

When a user asks a question, passages related to the query are pulled from Bing’s Web Search and Question Answering engine. Using deep recurrent neural network (Deep RNN) models, the passages are evaluated to determine similarity and sentiment and clustered accordingly. The most relevant passages from each cluster are then identified based on sentiment analysis and other features and served in the multi-perspective answer cards.
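To make the flow above concrete, here is a minimal Python sketch of the same three steps: score passages, group them by sentiment, then pick the most relevant passage from each group. It is an illustration only, not Bing's implementation. The word lists, the word-overlap relevance score, the `Passage` type and the example sources are all hypothetical stand-ins for the Deep RNN models and ranking features Rosenberg describes.

```python
from dataclasses import dataclass

# Hypothetical toy lexicons: a crude stand-in for the Deep RNN sentiment models.
POSITIVE = {"good", "healthy", "benefits", "safe", "helps"}
NEGATIVE = {"bad", "harmful", "risks", "unsafe", "hurts"}


@dataclass
class Passage:
    source: str  # illustrative site name, not a real result
    text: str


def tokens(text):
    """Lowercase words with surrounding punctuation stripped."""
    return [w.strip(".,!?").lower() for w in text.split()]


def sentiment(passage):
    """Positive-word count minus negative-word count (assumed scoring rule)."""
    words = tokens(passage.text)
    return sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)


def relevance(query, passage):
    """Fraction of query words that appear in the passage (assumed scoring rule)."""
    q = set(tokens(query))
    p = set(tokens(passage.text))
    return len(q & p) / len(q) if q else 0.0


def multi_perspective(query, passages):
    """Cluster passages by sentiment sign, then keep the most relevant per cluster."""
    clusters = {"positive": [], "negative": []}
    for passage in passages:
        score = sentiment(passage)
        if score > 0:
            clusters["positive"].append(passage)
        elif score < 0:
            clusters["negative"].append(passage)
        # Neutral passages (score == 0) are left out of both clusters.
    return {
        label: max(members, key=lambda p: relevance(query, p))
        for label, members in clusters.items()
        if members
    }


query = "Is coffee good for you?"
passages = [
    Passage("a.example", "Coffee is good for you and has health benefits."),
    Passage("b.example", "Too much coffee is bad and carries risks for you."),
    Passage("c.example", "Tea is good."),
    Passage("d.example", "Soda is harmful."),
]
picks = multi_perspective(query, passages)
for label, passage in picks.items():
    print(label, passage.source)
```

With this toy data, the two selected passages come from opposite sentiment clusters, which mirrors the two-card layout. A production system would replace both scoring functions with learned models and would also weigh source quality, as the article notes.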

An illustration of how results are identified and selected for Bing’s multi-perspective answer cards.

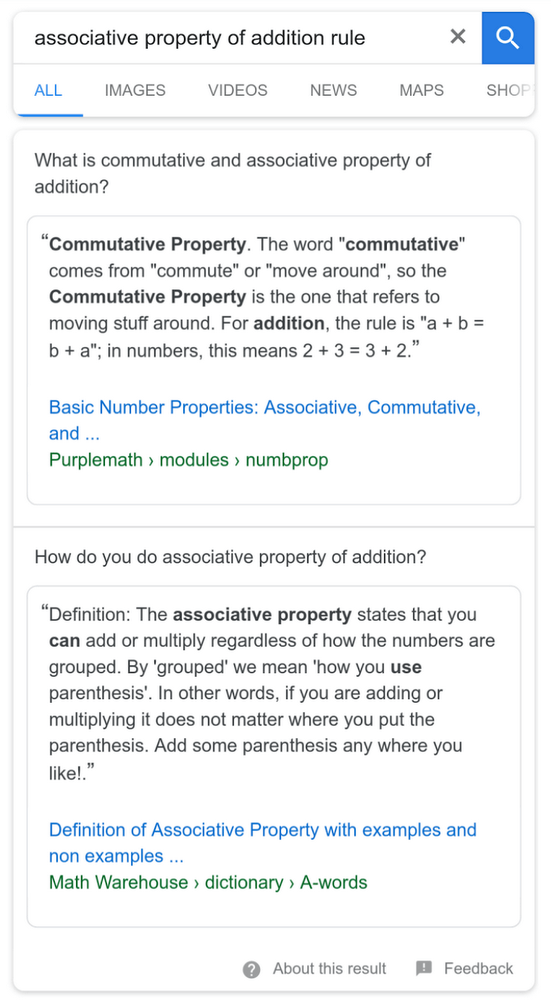

For its part, Google recently mentioned in its guide on featured snippets that it may show multiple responses to a query with separate featured snippets. “There are often legitimate diverse perspectives offered by publishers, and we want to provide users visibility and access into those perspectives from multiple sources,” Matthew Gray, a Google software engineer, explained.

Here is an example of this kind of treatment in Google:

Bing multi-perspective answers are live in the US and will expand to other markets, starting with the United Kingdom, in the next few months.

Search Engine Land is owned by Semrush. We remain committed to providing high-quality coverage of marketing topics. Unless otherwise noted, this page’s content was written by either an employee or a paid contractor of Semrush Inc.