Were You Really Hit By A Search Engine Penalty, Or Is It Something Else?

Often, I’ll speak to prospective clients who think they’ve been hit by an algorithm update or a penalty. They’ve lost a lot of organic traffic, so they immediately think that a search engine change is to blame.

Unfortunately, I’ve also run into many situations where SEOs are using algorithm updates such as Panda or Penguin as explanations for organic traffic drops. Because clients may not understand the technical nature of SEO, vendors can often hide behind excuses of algorithm updates or past penalties as the reasons for traffic drops. So how can you know what the real problem is?

Have You REALLY Been Hit With A Penalty?

The first step in understanding whether your site has been hit by an algorithm update or penalty is to check your organic traffic levels. If an algorithm update or penalty is at work, you will often see organic traffic drop off a cliff. Dig deeper to determine whether the drop comes solely from one search engine. If it doesn’t, an algorithm update or penalty is likely not the cause, since an update or penalty from one search engine would affect only the traffic from that engine.
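If you export daily organic sessions by search engine from your analytics tool, this comparison can be roughed out in a few lines. Here’s a minimal Python sketch, assuming a dictionary that maps each engine to a list of daily session counts and a split point at the day the drop began. The function names, the -30% threshold and the sample numbers are hypothetical placeholders, not values from any particular tool.

```python
from statistics import mean

def drop_by_engine(sessions, split_index):
    """Percentage change in mean daily organic sessions per search engine,
    comparing the days before split_index with the days from split_index on."""
    changes = {}
    for engine, daily in sessions.items():
        before = mean(daily[:split_index])
        after = mean(daily[split_index:])
        # Skip engines with no baseline traffic to avoid dividing by zero.
        if before == 0:
            continue
        changes[engine] = (after - before) / before * 100
    return changes

def engines_with_drop(changes, threshold=-30.0):
    """Engines whose change is at or below threshold percent. A drop at just
    one engine fits that engine's update or penalty; a drop across several
    engines points to another cause."""
    return [engine for engine, pct in changes.items() if pct <= threshold]

if __name__ == "__main__":
    # Illustrative numbers only: three days before and after the drop.
    sessions = {
        "google": [1000, 980, 1010, 400, 390, 410],
        "bing": [200, 210, 190, 205, 198, 202],
    }
    changes = drop_by_engine(sessions, split_index=3)
    print(engines_with_drop(changes))  # ['google']
```

Both sides of the split need at least one day of data, since `statistics.mean` raises an error on an empty list.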

Next, note when the drop began and which search engine it affects, then check Moz’s Google Algorithm Update History page, which lists the dates of recent updates. If the timing of your traffic drop matches the timing of an algorithm update, your site may have been affected by that update.
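To line a drop up against known update dates, a small helper like the one below can flag any updates that fall close to the day traffic fell. This is a sketch that assumes you copy update names and dates from Moz’s history page into a list yourself; the seven-day window and the placeholder entries are illustrative, not real update dates.

```python
from datetime import date, timedelta

def nearby_updates(drop_date, updates, window_days=7):
    """Return (name, date) pairs for updates within window_days of
    drop_date, closest first."""
    window = timedelta(days=window_days)
    matches = [(name, day) for name, day in updates
               if abs(day - drop_date) <= window]
    return sorted(matches, key=lambda item: abs(item[1] - drop_date))

if __name__ == "__main__":
    # Placeholder entries: replace with names and dates from Moz's page.
    updates = [
        ("Update A (placeholder)", date(2024, 3, 8)),
        ("Update B (placeholder)", date(2024, 1, 15)),
    ]
    print(nearby_updates(date(2024, 3, 10), updates))
```

A match only tells you the timing lines up; it doesn’t rule out the other causes covered below.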

To check for penalties, such as the inbound link penalties associated with Penguin, first make sure you have a Google Webmaster Tools account (the service has since been renamed Google Search Console). Check this account regularly so you don’t miss notifications from Google about spammy links it may have identified.

Additionally, if you suspect that your SEO has been building spammy links, review a sample of the backlinks that Google lists in Google Webmaster Tools.

Click on the links themselves and view the pages they come from. What are those pages like? Supreme Court Justice Potter Stewart famously said of identifying hard-core pornography, “I know it when I see it.” The same could be said about spammy links: it can be difficult to define exactly what makes a link or site spammy, but you’ll certainly know one when you see it.
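When the list of backlinks is long, grouping the linking pages by domain can make this manual review more manageable, since you can open one or two sample pages per domain instead of every link. Here’s a minimal Python sketch, assuming you’ve exported the linking page URLs (for example, from Google Webmaster Tools) into a plain list; it only organizes the list, and the judgment about what’s spammy is still yours.

```python
from collections import defaultdict
from urllib.parse import urlsplit

def group_links_by_domain(linking_urls):
    """Group linking page URLs by host (ignoring a leading 'www.'),
    returning (domain, urls) pairs with the most-linking domains first."""
    groups = defaultdict(list)
    for url in linking_urls:
        host = urlsplit(url).hostname or ""
        if host.startswith("www."):
            host = host[4:]
        groups[host].append(url)
    # Sort by link count (descending), then alphabetically for ties.
    return sorted(groups.items(), key=lambda item: (-len(item[1]), item[0]))

if __name__ == "__main__":
    links = [
        "http://www.example.com/a",
        "http://example.com/b",
        "https://blog.example.org/post",
    ]
    for domain, urls in group_links_by_domain(links):
        # Print the link count and one sample page to open in a browser.
        print(domain, len(urls), urls[0])
```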

Other Common Reasons For Major Organic Traffic Drops

Other common reasons for big traffic drops include blocked indexing, missing redirects, market changes and the level of SEO activity.

Blocked Indexing

If traffic is dropping quickly across multiple search engines, technical issues on the site may be preventing search engines from indexing it. Was the robots.txt file from your test server accidentally moved to the live server? If so, it could be blocking search engines from crawling and indexing your pages.

Check your robots.txt file for disallow statements that apply to your whole website or to the specific pages that have lost traffic. If you find any that shouldn’t be there, remove them from robots.txt to begin recovery.
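Python’s standard library includes a robots.txt parser, `urllib.robotparser`, which you can use to test whether your highest-traffic URLs are disallowed. Below is a minimal sketch that parses a robots.txt file’s contents from a string and returns the URLs a given crawler may not fetch; the example URLs are hypothetical. This parser follows the classic robots.txt rules and may not match Google’s handling of wildcards and rule precedence exactly, so treat a clean result as a first pass rather than a guarantee.

```python
from urllib.robotparser import RobotFileParser

def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs in `urls` that robots_txt disallows for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]

if __name__ == "__main__":
    # A typical test-server robots.txt that blocks the entire site.
    staging_robots = "User-agent: *\nDisallow: /\n"
    pages = ["https://www.example.com/", "https://www.example.com/products"]
    # Both URLs are reported as blocked.
    print(blocked_urls(staging_robots, pages))
```

To check your live file, paste its contents in as the `robots_txt` string, along with the URLs of the pages that lost the most traffic.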

Beyond the robots.txt file, there may be other ways that bots are being blocked from indexing content. Was your site redesigned recently, or did the pages with the greatest losses undergo a programming change? Other common issues that can prevent indexing include relying on JavaScript to display key page content, placing forms in front of content (search engine bots cannot fill out forms), and other technical changes.
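One rough way to spot content that only appears after JavaScript runs, or that sits behind a form, is to look at the raw HTML your server returns before any scripts execute. Below is a minimal Python sketch using the standard library’s `html.parser`; it assumes you’ve already fetched a page’s raw HTML (for example with `curl` or `urllib.request`) and chosen a key phrase that should appear in the page’s main content. Google can render JavaScript in many cases, so a missing phrase is a warning sign worth investigating rather than proof of a problem.

```python
from html.parser import HTMLParser

class RawHTMLAudit(HTMLParser):
    """Collect text outside <script>/<style> and count <form> tags."""

    def __init__(self):
        super().__init__()
        self.text_parts = []
        self.form_count = 0
        self._skip_depth = 0  # > 0 while inside <script> or <style>

    def handle_starttag(self, tag, attrs):
        if tag == "form":
            self.form_count += 1
        elif tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Text inside scripts is not visible content, so ignore it.
        if not self._skip_depth:
            self.text_parts.append(data)

def audit_raw_html(html, key_phrase):
    """Return (phrase_found, form_count) for a page's raw, unrendered HTML."""
    parser = RawHTMLAudit()
    parser.feed(html)
    parser.close()
    # Collapse whitespace so line breaks in the markup don't hide a match.
    text = " ".join(" ".join(parser.text_parts).split())
    return key_phrase.lower() in text.lower(), parser.form_count
```

For example, a page whose product description is written into the page by a script would return `False` for a phrase from that description, even though the phrase shows up in the browser.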

Missing Redirects

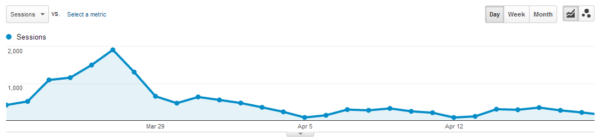

Often overlooked in the website relaunch process, 301 redirects are crucial for search engine robots to find content when the URL and/or domain for a site has been updated, which is common with site relaunches. Here’s an example of traffic drops (across multiple search engines) for a site relaunched without 301 redirects in place:

How can you see whether you’re missing 301 redirects? In analytics, choose a timeframe from before the relaunch and drill down to find a handful of the most visited pages on your website during that period. Enter each of those URLs in your browser’s address bar. If you receive a 404 error, no 301 redirect was created for that page, and the missing redirect is a likely culprit in your organic traffic losses.
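If you have more than a handful of old URLs to check, the browser test can be scripted. Here’s a minimal Python sketch using the standard library’s `http.client`, which doesn’t follow redirects on its own, so you see the status code the old URL actually returns. It sends HEAD requests, which some servers handle differently from normal page requests, so spot-check anything surprising in a browser. The function names are hypothetical.

```python
import http.client
from urllib.parse import urlsplit

def fetch_status(url, timeout=10):
    """Send a HEAD request without following redirects.
    Returns (status_code, Location header or None)."""
    parts = urlsplit(url)
    conn_class = (http.client.HTTPSConnection if parts.scheme == "https"
                  else http.client.HTTPConnection)
    conn = conn_class(parts.netloc, timeout=timeout)
    try:
        path = parts.path or "/"
        if parts.query:
            path += "?" + parts.query
        conn.request("HEAD", path)
        response = conn.getresponse()
        return response.status, response.getheader("Location")
    finally:
        conn.close()

def audit_old_urls(urls, fetch=fetch_status):
    """Map each pre-relaunch URL to a short verdict on its redirect."""
    report = {}
    for url in urls:
        status, location = fetch(url)
        if status == 301:
            report[url] = f"301 -> {location}"
        elif status == 404:
            report[url] = "404: no redirect in place"
        else:
            report[url] = f"{status}: check manually"
    return report
```

Calling `audit_old_urls` with your list of pre-relaunch URLs returns a dictionary mapping each URL to a verdict such as "404: no redirect in place". The `fetch` parameter lets you swap in a different request function, for example one that sends GET requests instead.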

And if your most trafficked pages are missing 301 redirects, it’s likely safe to assume that 301s were not created for any pages on the site, since those pages would normally be the first ones prioritized for redirection.

Market Changes

On occasion, I’ve also found that organic traffic drops because of a decrease in searches in a particular market. A few years back, I was working with a company that sold disk defragmentation software. Even though our rankings for key terms were consistently improving, organic traffic was dropping. Why? Was it a penalty or algorithm update? Neither — it was market conditions.

A good tool to use in these situations is Google Trends, which shows how search interest in a term changes over time (as a value relative to its peak, rather than absolute search volume). Consider the pattern over the past ten years for the term “disk defragmentation.”

Lo and behold, in this instance, the overall search interest in terms like “disk defragmentation” has been on the decline for some time. This isn’t a measurement of SEO, per se, but rather an industry indicator.

Trends data for the company’s brand name, its competitors’ brand names, and other related non-brand terms showed similar results.
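Google Trends lets you download the interest-over-time data behind its charts as a CSV file. If you want a single number describing whether interest in each term is rising or falling, a least-squares slope over that series works as a quick summary. Here’s a minimal Python sketch; it assumes you’ve already read each term’s values into a list of numbers in chronological order, and the terms and values in the example are made up for illustration.

```python
def trend_slope(values):
    """Least-squares slope of values against their position (0, 1, 2, ...),
    in Trends interest points per period. Negative means declining interest."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two data points")
    mean_x = (n - 1) / 2  # mean of positions 0..n-1
    mean_y = sum(values) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(values))
    var = sum((x - mean_x) ** 2 for x in range(n))
    return cov / var

def declining_terms(series_by_term):
    """Return the terms whose interest trended downward, alphabetically."""
    return sorted(term for term, values in series_by_term.items()
                  if trend_slope(values) < 0)

if __name__ == "__main__":
    # Made-up yearly values, for illustration only.
    series = {
        "example brand": [90, 75, 60, 48, 35],
        "example competitor": [70, 62, 50, 41, 30],
        "example rising term": [20, 28, 35, 44, 55],
    }
    print(declining_terms(series))  # ['example brand', 'example competitor']
```

The sign of the slope is the useful part here. Because each Trends download is scaled to its own peak, slope magnitudes from separate downloads aren’t directly comparable.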

Level Of SEO Activity

One last issue I often see is that website owners may treat SEO as a one-time project rather than an ongoing investment. The problem with this approach is that the SEO landscape is always shifting:

- Ranking factors change and evolve over time

- Competitors may be investing more in SEO than you are

- New competitors enter the fray each day

Again, a gradual loss of organic traffic may initially look like an algorithm or penalty issue, but it may simply be that SEO inaction over time has caused the site to lose traction in search.

Final Thoughts

Before jumping to the conclusion that your site has been hit by an algorithm update or penalty, be sure to check all of these factors. While this isn’t a comprehensive list, these are the most common issues I’ve encountered that are mistaken for algorithm updates or penalties.

It’s far better to understand the real problem and face it head on than to blame an algorithm update or penalty. Doing so also puts you one step closer to solving the real issue at hand and regaining organic search traffic.

Opinions expressed in this article are those of the guest author and not necessarily Search Engine Land.
