Google penalties, manual actions and notifications: A complete guide

Learn all about on-page and off-page guideline violations, what Google's messaging means, and how to get a Google manual penalty removed.

Google reserves the right to apply manual actions, which are more often called penalties within SEO, to websites it finds in violation of its Search Essentials (previously known as Google’s Webmaster Guidelines).

The specific reasons and scope of manual actions can be manifold, and the actual impact may range from barely noticeable to utterly disastrous for a website’s presence in organic Google Search results.

This guide describes what types of penalties you can get, demystifies Google’s messaging and explains how to go about successfully getting a Google manual action removed.

We’ll cover the following violations and notifications:

- On-page guideline violations and related notifications

- Off-page guideline violations and related notifications

- Reconsideration requests and related notifications

What is a Google penalty?

A Google penalty is what happens when Google takes manual action on a website for violations of Google Search Essentials. These are spammy techniques spotted by the Google Search Quality team and deemed egregious enough to trigger sanctions.

A Google penalty can result in a website being heavily demoted in rankings – or removed altogether from the search results.

- Dig deeper: Google algorithms are not Google penalties

Since 2012, Google has scaled up its communication efforts via Google Search Console (previously known as Google Webmaster Tools) about website issues that are likely to negatively impact how visible a site ends up being in organic Google search for relevant user queries.

This guide focuses on how you should interpret and respond to these notifications, which Google euphemistically calls “warnings.”

But notifications aren’t solely about using techniques that are in opposition to Google’s guidelines.

We’ll also explore some warnings about issues that could be considered sins of omission, in that the site owner has failed to secure the site – allowed it to host spam or be hacked – or has failed to implement structured data markup correctly.

Google also brings attention to technical issues it identifies. While these may equally impact a site’s visibility in organic Google search, they are not related to any Google guideline violations and will be omitted here.

That said, any piece of information highlighted in Google Search Console should be considered important and be taken seriously.

All sample messages discussed in this guide have been spotted “in the wild” within the last 24 months as of this writing. Older messages that have not been issued in years, such as the warning highlighting hidden content, are not included in the manual action overview.

All sample screenshots have been adapted to highlight the most relevant pieces of information that provide guidance on how to resolve the existing issue.

On-page guideline violations and related notifications

This set of violations and notifications applies to problems that have been identified on a site.

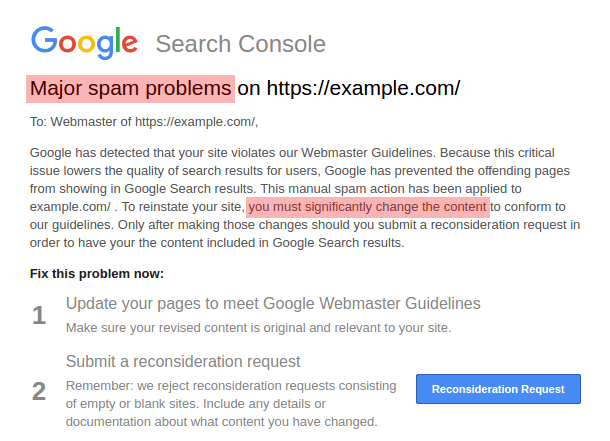

Major and pure spam problems

When site owners receive the manual action notification highlighting major spam problems, Google has identified the page content as entirely spammy, with no value to users.

This message tends to indicate that, at the time the recipient reads it, the most severe consequence has already come into effect and, in the majority of cases, the website is completely gone from organic Google Search. In some instances, however, the removal may affect only a subset of site pages.

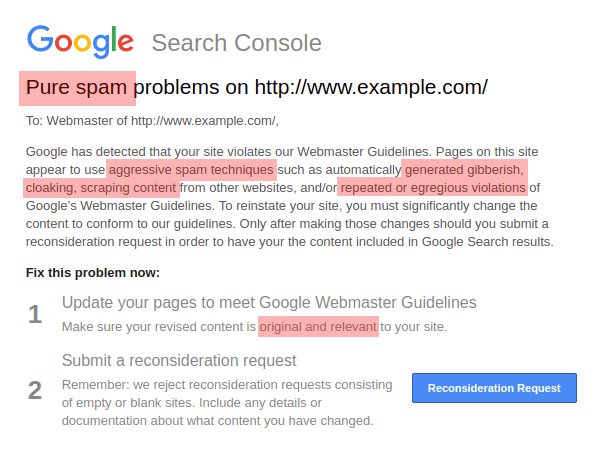

A rare variation of the major spam problems action highlights the gravity of the violation in starker wording, referring to the website as pure spam. That message seems to be on the verge of being deprecated, as in recent months it has been registered only sporadically (in comparison to the major spam penalty, which is very common).

When issued, the pure spam problems penalty seems to be most frequently related to user-agent (or similar) cloaking. Displaying vastly different content to search engine bots and users based on the user-agent has become rare. At the same time, Google has stepped up their detection methods. This is a likely explanation for why this specific penalty has become less frequent.

Both major spam and pure spam problem notifications indicate that the site affected has, at a fundamental level, failed to live up to Google users’ expectations. The major spam penalty is most frequently applied to scraped content and/or gibberish sites.

Spam problems

The message highlighting just spam problems is a toned-down version of the major spam problems notification. The wording is similar, albeit less bleak.

While past variations of this message occasionally mentioned specifically “thin content” and/or “internal doorway pages,” recently a single message applied more generally to “spam problems” has become the standard.

This manual action suggests that, while the website isn’t entirely bad, bits and pieces are not good enough. That could refer to thin content (generally considered spammy), and it may very well refer to doorway pages – which, by definition, must be of low quality, as their primary purpose is to redirect users to another location rather than immediately providing an answer.

Typically, a penalty associated with the spam problems message does not result in complete removal from Google Search. Querying the site: search operator will often confirm that the site is still indexed, though it will likely be much less visible in organic Google Search results.

Google may try to go about the penalty surgically, penalizing only the offending pages and folders, but leaving the rest of the site intact. That tends to be the case if the violation is clearly isolatable, e.g., doorway pages are located exclusively within a directory like example.com/doorways/.

The exact scope of the penalty is usually not revealed to the site owner, and it can, therefore, be difficult to determine the impact the penalty has on site visibility.

How to go about removing the penalty

Removing this penalty will require a thorough site review, especially with regard to content quality. Large sites are better off conducting an all-out audit in order to identify which content is being indexed and which is not.

For the thin content, you will need to decide if (and how) it can be improved to meet standards, or if it is best to utilize noindex to avoid indexing low-quality content (which could possibly improve crawl budget distribution in the long run as well).

The evaluation process must include a landing page engagement assessment and must not be driven by word counts. What matters is not the length of the content but how useful and engaging it is to users.
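As a rough illustration of an engagement-driven review, the sketch below sorts landing pages into buckets using session data rather than word counts. The `PageStats` fields, the `triage` function and both thresholds are assumptions made for this example; they are not Google metrics, and the right cut-offs depend on your own analytics.

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    url: str
    organic_sessions: int   # organic search sessions in the review period
    engaged_sessions: int   # sessions meeting your own definition of "engaged"


def triage(page: PageStats, min_sessions: int = 50,
           min_engagement_rate: float = 0.3) -> str:
    """Classify one landing page for the content audit (hypothetical thresholds)."""
    if page.organic_sessions < min_sessions:
        # Too little data to judge by engagement alone.
        return "review-manually"
    rate = page.engaged_sessions / page.organic_sessions
    return "keep" if rate >= min_engagement_rate else "improve-or-noindex"
```

Pages that land in the `improve-or-noindex` bucket are the candidates to rewrite or to exclude from indexing, for instance with a `<meta name="robots" content="noindex">` tag.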

Before a reconsideration request can be submitted to Google, doorway pages will need to be removed, and the content strategy will require revamping (often shifting toward less content that is more useful and robust).

All these steps are to be properly documented for the reconsideration request in order to put the best foot forward.

Regardless of the penalty applied, under no circumstances should an empty or placeholder page with a bare “Hello world!” message be submitted for reconsideration.

Google clearly states in their notifications that such sites/pages do not qualify for reconsideration and will be rejected due to insufficient efforts made.

Manual penalties in regard to thin content tend to affect large websites with tens of thousands or more crawlable and indexable pages.

A successful reconsideration with the very first attempt is an achievable goal, and a speedy recovery is possible.

Cases of sites actually doing better in organic Google Search after dropping their thin content and focusing on quality landing pages are not uncommon.

Unlike sites hit with major spam or pure spam penalties, sites affected by this manual action are not fundamentally on a collision course with Google Search Essentials. In most cases, improvements are needed rather than a fresh start from square one.

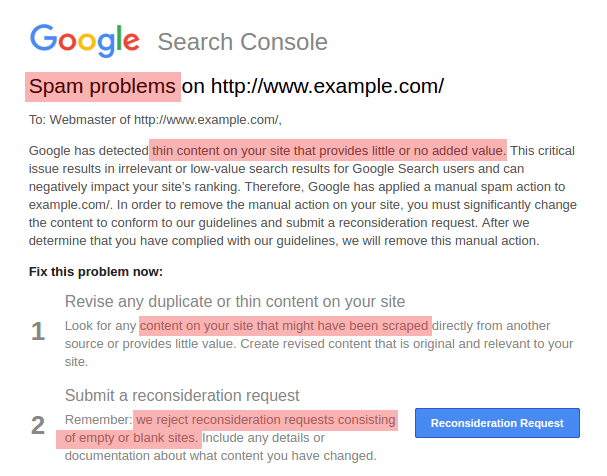

Thin content

The Google thin content warning is triggered in Google Search Console when a website is found to lack substantially in added value.

That is not to say that websites deemed by Google as thin content websites contain few landing pages or too little content. They may contain large amounts of content that is, however, also readily available across many other websites.

This is why the thin content penalty is often applied to heavily monetized affiliate websites with prominently displayed commercial intent, so-called MfA (or “made for AdSense”) websites, and monetized scraped content websites.

Google and other search engines do not generally frown upon affiliation or monetization of websites as long as these add some unique value.

That said, websites that seem to be templated, do not represent a real brand and provide no tangible information about their authors tend to add little to the diversity of search results.

Search engines are interested in providing users diverse results, instead of ranking almost identical websites for a given query.

As a consequence of the thin content penalty, a website can be expected to initially drop in organic search rankings significantly, then continue to slowly decline after the first nosedive.

Convincing Google to lift the thin content penalty imposed, while possible, may require both pruning poor landing pages and enriching existing landing pages with fresh, relevant content.

In many cases, using data in order to provide relevant and unique information is a great way to make the website stand out.

In addition to compelling About us and contact information and consistent, high-quality brand representation, a Google reconsideration request rationale outlining the specific added value the website represents is likely to be required in order to get back into Google’s good graces.

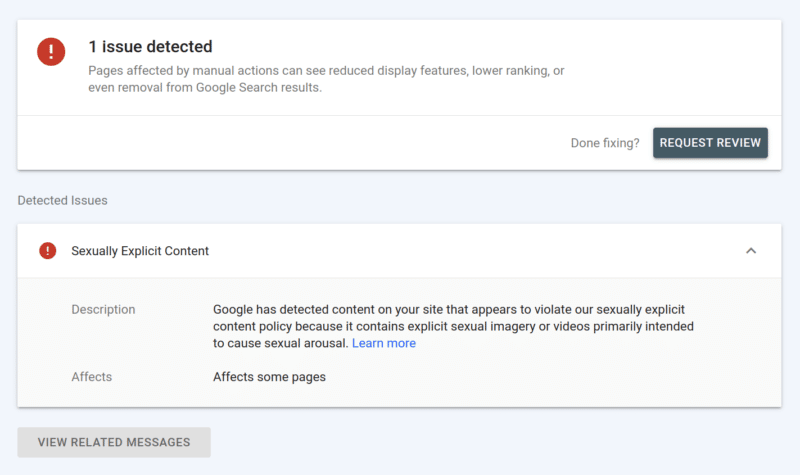

News and Discover

In a drive to combat false information and maintain information quality standards, Google has sharpened their focus on news and media website content quality. In consequence, the public list of News and Discover policy violations has been substantially expanded.

Online news platforms now face more scrutiny with regard to the factual accuracy of published content. At the same time, misleading, antisocial, offensive and explicit content has become less acceptable.

As these policies are being enforced, offending websites are confronted with a number of Google Search Console penalty messages.

The consequence of an imposed News and Discover-related penalty is always a significant (if at times much delayed) drop in organic Google Search results.

If a penalty is left to linger for a prolonged period of time, the entire website’s visibility in SERPs is certain to continuously, slowly decline.

Potential News and Discover violations that may trigger a Google penalty include, among others, explicit content, unqualified medical content and otherwise misleading content.

The confirmed offense is typically accurately described in the Google Search Console message received. Google often chooses to pinpoint specific URL examples representative of the behavior that triggered the penalty.

While never exhaustive, these examples are tremendously helpful in the initial stages of the analysis and penalty recovery effort.

Regaining Google’s confidence with the prospect of improved future rankings depends largely on how thorough the clean-up effort, preceding a reconsideration request submission, really is.

Because Google is unlikely to issue a penalty for relatively minor offenses, any website actually penalized is almost certainly violating Google policies in an excessive way.

For that reason, affected websites face the prospect of editing or purging a substantial amount of the offending content.

However, merely removing problematic landing pages without supplementing the website with quality content is typically not considered a comprehensive effort.

AMP content mismatch

In line with the objective to ensure a fast user experience, Google continues to push AMP as a desirable technical solution. This policy spills over into Google Search Essentials, where content parity for all users is specifically required.

Google strives to keep the user experience as consistent as possible. Therefore, displaying significantly different content or offering a substantially different user experience to mobile and desktop users constitutes a Google Search Essentials violation.

AMP landing pages are not required to match the content on their original, canonical landing pages 100%. But the gist of the content, including the user experience, must be comparable for users regardless of the device used to access it.

This is why Google does not like users to have to navigate from an AMP landing page to another landing page in order to access the actual content. The latter solution in effect makes an AMP page a doorway page, which Google considers an egregious Google Search Essentials violation.

Unlike most Google penalties, the AMP content mismatch warning does not trigger sitewide repercussions. Instead, Google takes a more nuanced approach, dropping only the rankings of a website’s AMP landing pages. All other content on the website remains unaffected.

This may appear as a relatively light penalty. However, no Google penalty should be left to linger for a prolonged period of time.

Google recommends utilizing the URL inspection tool to test the parity of both AMP and canonical landing pages and in doing so ensure a uniform experience for all users.

Once the landing pages can be considered similar enough, a reconsideration request including a compelling rationale briefly outlining the changes introduced should be submitted.

Alternatively, a technological switch can be considered a viable option. With AMP limitations in mind, the Google penalty imposed due to parity issues can be considered a stepping stone toward embracing a more robust technical solution, such as progressive web apps.

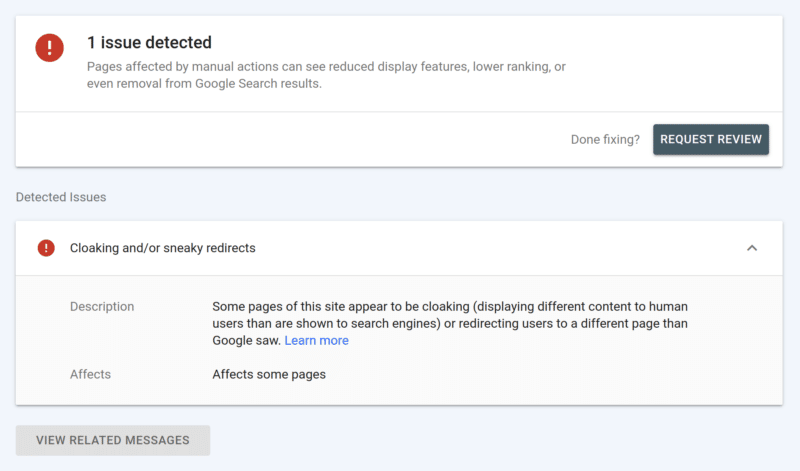

Cloaking and redirects

Search engines, including Google, want to rank ultimate destination landing pages. When a landing page redirects users, it is likely that search engines prefer to rank the final destination page, instead of an intermediary landing page.

There are of course legitimate reasons for maintaining redirects, which do not trigger any search engine sanctions.

However, when Google deems the intention of redirects to be deceptive (e.g., to intentionally redirect unsuspecting users to strongly commercialized landing pages), a manual spam action is likely to be applied to the website.

At the same time, Google considers showing significantly different landing pages to search engine bots and to human users, known as cloaking, to be equally deceptive.

As with deceptive redirects, when cloaking is implemented, search engines can’t trust that users will be presented with the expected content. From a search engine’s perspective, its users’ experience is likely to suffer, which it cannot ignore.

Since both cloaking and sneaky redirects are considered egregious Google Search Essentials violations, websites penalized for these offenses are likely to experience an imminent, steep drop in Google Search rankings.

In rare, extremely egregious instances, websites have even been known to be temporarily removed from Google’s index entirely.

Lifting a penalty applied for cloaking and/or sneaky redirects requires a thorough clean-up and documentation effort first. All deceptive behavior must be discontinued entirely.

Because of the egregiousness of the violation, haphazard changes to the website will only prolong the penalty.

Once all cloaking and/or deceptive redirects have been removed, it is best to run a crawl test before submitting a compelling reconsideration request to Google.
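One way to approach such a crawl test is to request the same URL once with a crawler-style user-agent and once with a browser-style one, then compare the final URLs and the visible text. The sketch below is only an approximation under stated assumptions: the two user-agent strings and all function names are chosen for this example, the tag stripping is deliberately crude, and spoofing a user-agent will not reveal cloaking keyed on IP address.

```python
import difflib
import re
import urllib.request

# Example user-agent strings; adjust to the clients you want to compare.
BOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
BROWSER_UA = ("Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
              "(KHTML, like Gecko) Chrome/120.0 Safari/537.36")


def fetch(url: str, user_agent: str) -> tuple[str, str]:
    """Fetch url with the given User-Agent; return (final URL after redirects, body)."""
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.geturl(), response.read().decode("utf-8", errors="replace")


def visible_text(html: str) -> str:
    """Crudely drop scripts, styles and tags, then collapse whitespace."""
    html = re.sub(r"(?is)<(script|style)\b.*?</\1>", " ", html)
    text = re.sub(r"(?s)<[^>]+>", " ", html)
    return " ".join(text.split())


def similarity(html_a: str, html_b: str) -> float:
    """Similarity of the visible text of two responses, from 0.0 to 1.0."""
    return difflib.SequenceMatcher(None, visible_text(html_a),
                                   visible_text(html_b)).ratio()
```

In use, you would call `fetch(url, BOT_UA)` and `fetch(url, BROWSER_UA)` for each important landing page and flag any page where the final URLs differ or the similarity score is low. What counts as "low" is a judgment call, since personalization and ads can legitimately change parts of a page.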

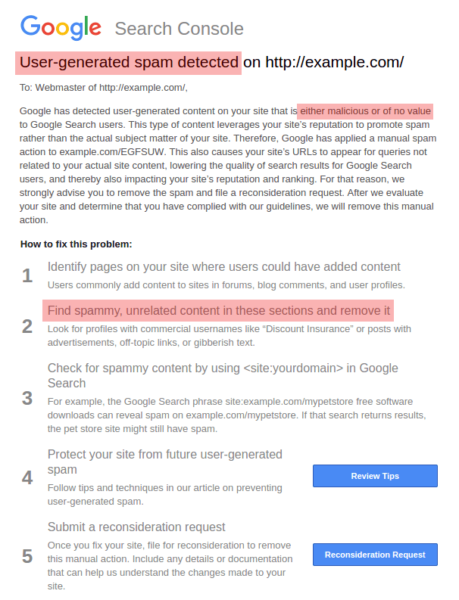

User-generated spam

User-generated spam tends to be an issue for large, user-driven sites. If this penalty is applied, that generally indicates that the affected site is being exploited by spammers.

Here, Google is essentially asking the site owner to get their own house in order – or else.

The message usually includes a sample URL where user-generated spam has been detected. Accordingly, the penalty’s impact (removal from Google Search results) is limited to the exact URL or directory mentioned.

While this may seem like a small price to pay, it is important to see the big picture. Sites affected by user-generated spam often receive a lot of user-generated spam messages, even on pages not identified by Google.

If the vulnerability isn’t swiftly taken care of, chances are there will be thousands of potentially malicious, user-generated pages which Google will remove from their index in order to protect their users.

On several occasions, Google has provided useful and actionable general guidance on how to protect sites from user-generated spam.

Ignoring a specific Google manual action message is never a good idea and a particularly dangerous strategy in the case of user-generated spam.

If you’ve received a message, that indicates Google deems the site useful but neglected. Thus, they raise the issue to help.

Hoping for the best, or for Google to fix the issue at their end, isn’t going to solve the problem.

Solving user-generated spam is mostly a technical challenge and rather simple when compared with the previously discussed penalties applied for spam content.

The following security measures should be considered and implemented as necessary:

- Make sure your forum or discussion software is up to date, especially with regard to any security patches that have been issued.

- Use moderation capabilities for the following:

  - Blacklist obviously spammy words or inappropriate words (like pharma-related) and continue to add to your blacklists based on spam that you’re seeing.

  - Identify and review content when a single account or IP address accounts for a large volume of posts in a short time.

  - Subject posts from new members or new posters to editorial review before they are published, lifting the restriction once they have established themselves as trustworthy.

- Limit users’ ability to link.

  - Consider disallowing links entirely or allowing only trusted contributors with spotless track records to link to other sites.

  - If you allow links, you may nofollow them to remove some of the incentives to link externally.

- Close comments or discussions on threads after a reasonable period of time, as they’ll often collect spam after real users have ceased to engage with them.
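Several of the moderation measures above can be sketched in a few lines. The example below is a minimal illustration, not a drop-in moderation system: the `Post` structure, the starter blocklist, the ten-minute window and the five-post limit are all assumptions made for this example, and plain substring matching will produce false positives on real content.

```python
from dataclasses import dataclass


@dataclass
class Post:
    author: str
    timestamp: float   # seconds since epoch
    text: str


# Hypothetical starter list; extend it based on the spam you actually see.
BLOCKLIST = {"viagra", "casino", "payday loan"}


def contains_blocked_term(text: str) -> bool:
    """True if any blocklisted term appears anywhere in the text."""
    lowered = text.lower()
    return any(term in lowered for term in BLOCKLIST)


def high_volume_authors(posts: list[Post], window_seconds: float = 600,
                        max_posts: int = 5) -> set[str]:
    """Authors with more than max_posts posts inside any sliding time window."""
    by_author: dict[str, list[float]] = {}
    for post in posts:
        by_author.setdefault(post.author, []).append(post.timestamp)
    flagged: set[str] = set()
    for author, times in by_author.items():
        times.sort()
        start = 0
        for end, current in enumerate(times):
            # Shrink the window until it spans at most window_seconds.
            while current - times[start] > window_seconds:
                start += 1
            if end - start + 1 > max_posts:
                flagged.add(author)
                break
    return flagged


def needs_review(post: Post, flagged: set[str], trusted: set[str]) -> bool:
    """Hold a post for editorial review before it is published."""
    return (post.author not in trusted
            or post.author in flagged
            or contains_blocked_term(post.text))
```

Here `trusted` stands in for members who have already established themselves, so posts from everyone else are held for review by default.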

Beyond getting you back in Google’s good graces, all of these steps are in the best interests of your business anyway, as they’ll help protect your brand integrity and your relationship with your user base.

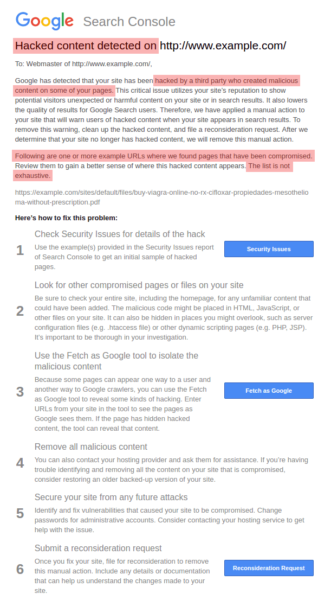

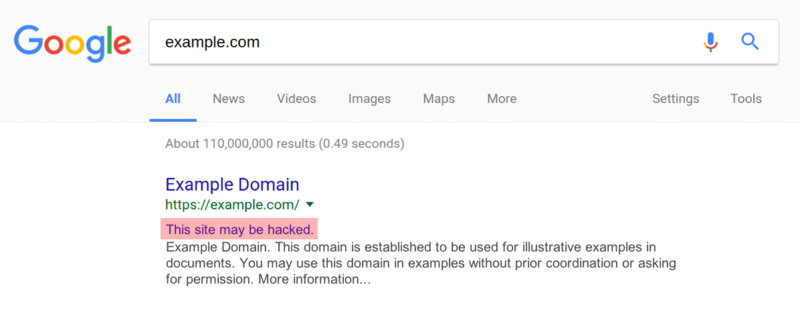

Hacked content spam

The hacked content spam penalty resembles user-generated spam for one reason: It is a case of a compromised website that is being abused by spammers to inject malicious and/or irrelevant content without the site owner’s consent.

Again, Google’s message includes a sample URL, which provides a clue to where to start the investigation and what type of content to look for while cleansing the site of spam.

There are, however, two important differences between hacked content spam and user-generated spam.

- First, the hacked content spam penalty is applied to sites that are not user-driven and where the vulnerability isn’t caused by poor quality enforcement, but rather insufficient security.

- Second, the consequence of a hacked content spam action is a prominent label in SERPs, warning users of the possible threat if they dare to open the website. This means imminent and potentially lasting loss of user traffic coming from organic Google Search.

In this instance, Google provides some assistance. But ultimately, you need to identify a permanent solution.

A simple clean-up and malicious content removal alone are not likely to have the desired effect unless the underlying vulnerability is identified and patched.

As in all previous cases, submitting a compelling reconsideration request is the final step toward resolving the problem and removing the “hacked” SERP label.

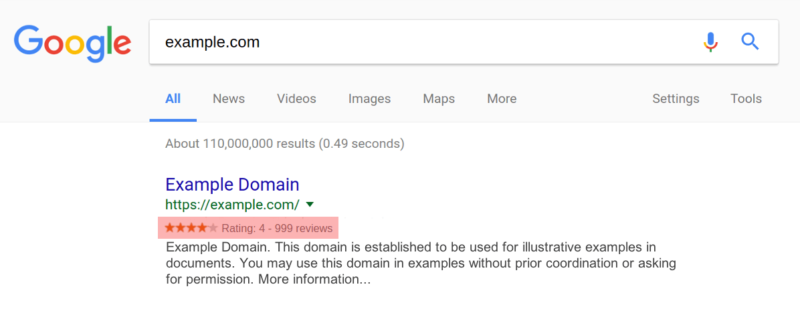

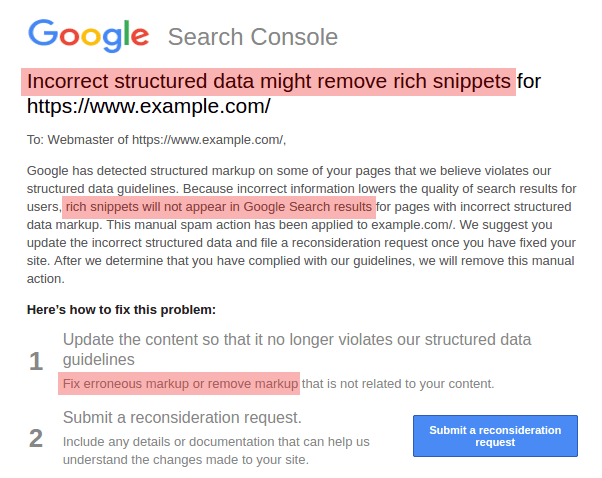

Incorrect structured data

Structured data is as much a means of making the content and context of a website better understood by search engines as it is a method to claim more SERP real estate.

When properly implemented, structured data can enhance your SERP listing with an eye-catching display known as a “rich snippet.”

The image below depicts the commonly seen Review rich snippet.

The prospect of obtaining a rich snippet is enticing, especially in competitive verticals, which is why attempts to game the system using inflated or deceptive structured data are very much on Google’s radar.

If spotted, a notification highlighting incorrect structured data is the consequence, and your rich snippets will no longer appear in search results.

The recovery and reconsideration process is similar to what you would do for other types of manual penalties.

House cleaning, spotless implementation and documentation are key. In practical terms, once the confidence in the accuracy of structured data is lost, fully regaining it is rare.

Gaming structured data is, therefore, a risky business. It is not only not recommended, but it is also likely to indefinitely impact the look and feel of a site in Google SERPs.
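One way to keep review markup accurate is to generate it from the ratings you actually hold rather than hard-coding values. The sketch below builds schema.org `Product` markup with an `AggregateRating` in JSON-LD. The function name and the 1-to-5 rating scale are assumptions for this example, and whether a rich snippet is shown remains entirely Google’s decision.

```python
import json
from typing import Optional


def review_markup(product_name: str, ratings: list[int]) -> Optional[str]:
    """Build Product JSON-LD whose AggregateRating is computed from real ratings.

    Returns None when there are no ratings, so that no markup is emitted at all.
    """
    if not ratings:
        return None
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": product_name,
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": round(sum(ratings) / len(ratings), 1),
            "ratingCount": len(ratings),
            "bestRating": 5,    # assumed 1-to-5 scale
            "worstRating": 1,
        },
    }
    return json.dumps(data, indent=2)
```

The returned string would go inside a `<script type="application/ld+json">` element on the page that visibly shows the same ratings to users.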

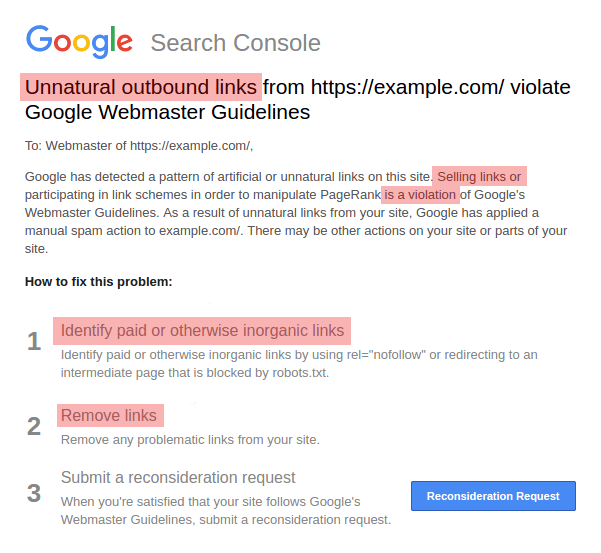

Unnatural outbound links

Selling links for the purpose of manipulating PageRank is yet another Google Search Essentials violation that triggers a corresponding manual action and a message highlighting the issue.

Unlike in the past, however, the associated penalty does not necessarily cause a dramatic loss of site visibility in organic Google Search nowadays.

That said, the penalty is not to be taken lightly. Given the site owner’s total control over their own website, narrowing down the problem and applying a suitable solution, such as no-following questionable links, is easy and requires comparatively few resources.
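To narrow down the problem, it can help to list every external link on a page that does not yet carry a qualifying `rel` value. The sketch below uses Python’s standard HTML parser to do so. The class and function names are invented for this example, and treating `nofollow`, `sponsored` and `ugc` as the qualifying values is this sketch’s reading of Google’s link attribute guidance.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class OutboundLinkAuditor(HTMLParser):
    """Collect external links whose rel attribute has no qualifying value."""

    QUALIFIERS = {"nofollow", "sponsored", "ugc"}

    def __init__(self, own_host: str):
        super().__init__()
        self.own_host = own_host.lower()
        self.followed_external: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attributes = dict(attrs)
        href = attributes.get("href") or ""
        host = (urlparse(href).hostname or "").lower()
        if not host or host == self.own_host or host.endswith("." + self.own_host):
            return  # relative link, or a link to our own host or subdomain
        rel_tokens = set((attributes.get("rel") or "").lower().split())
        if not rel_tokens & self.QUALIFIERS:
            self.followed_external.append(href)


def followed_external_links(html: str, own_host: str) -> list[str]:
    """Return hrefs of external links that still pass ranking signals."""
    auditor = OutboundLinkAuditor(own_host)
    auditor.feed(html)
    return auditor.followed_external
```

Each link in the result still needs a human decision: editorial links can stay as they are, while paid or otherwise questionable ones are the candidates for no-following.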

Spammy free host

When free hosting providers receive a Google Search Console penalty notification highlighting a spammy free host, Google has deemed an overwhelming majority of the user content hosted to be either spammy or otherwise of no value to internet users.

The specific Google Search Console message notifies the recipient and free host operator of the impending impact on the entire service’s visibility in Google Search results. In most cases, the service remains indexed by Google and can be found when users apply an exact, navigational query, e.g., site:example.ai.

However, the service is most likely to temporarily lose nearly all SERP visibility, even for what may be considered relevant queries. Because the entire website is affected, rather than only granular parts of the service, the spammy free host manual spam action is considered a harsh penalty.

Historically, Google seems to have been reluctant to take these wide-reaching measures against free hosting platforms, except in the most egregious cases.

Confirmed past cases indicate that only free hosting services with approximately 99% of their indexable user-generated content abused have been penalized as spammy free hosts.

Despite the excessive scale of the spam problem highlighted, Google still refrains from applying the ultimate penalty, comparable to pure spam, to remove the entire free hosting service from the index.

It is worth noting that the Google Search Console notification is brought exclusively to the free hosting service operators’ attention. Its legitimate users remain unaware of the impact on their content’s visibility in organic Google Search results.

This reflects the fact that only the service operator is in a position to impose some level of quality control and spam abuse prevention, which is a necessary step to build a comprehensive case before submitting a Google reconsideration request.

Off-page guideline violations and related notifications

While a site owner may theoretically be unable to control what other places link to the site, risky practices like buying links or spamming other sites have led Google to be concerned with off-page issues as well.

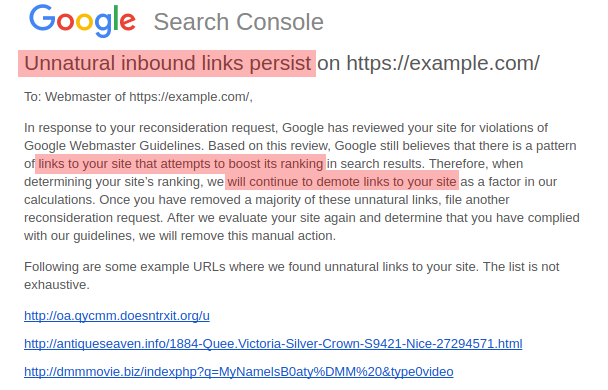

Unnatural inbound links

The most frequently experienced Google manual action by far is the one applied as a consequence of unnatural inbound links to a website.

Affected sites are deemed to be engaging in link schemes (link building intended to manipulate Google rankings), which Google considers a major violation. The penalty’s impact can be partial or affect the entire domain.

The consequent loss in visibility in Google ranges anywhere from barely noticeable to a steady decline over time to a dramatic total loss of visibility overnight. The vertical and brand prominence seem to play no role – large, reputable sites have been affected by this penalty.

Very rarely, we’ve seen sample URLs (even as many as five) listed on the unnatural inbound links message, which gives the site owner a starting point for their investigation. These samples may be of great use if they can indicate the pattern that raised a red flag – for example, if they all show low-quality PR services that scrape and reproduce pieces that include commercial anchor text links.

Whether examples are provided or not, it is vital to conduct a full backlink audit. Based on that evaluation – which, depending on the backlink data volume, could take up to several weeks – all questionable backlinks are to be noted in a separate file.

It is important to bear in mind that, for large websites with millions of backlinks, samples provided in Google Search Console will likely be too fragmented and offer insufficient data for a successful reconsideration request. Therefore, you must identify all of the questionable links yourself before asking to be reconsidered.

To boost the prospects for a favorable result with the very first reconsideration request, it is crucial to consider not only backlink volumes and distribution, but also the linking site’s quality and its general approach to linking out.

A solid backlink risk assessment is inclusive of all historic linking liabilities, without neglecting any legacy link building remnants, like directory entries of a long-gone SEO era.
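As a first pass over a large backlink export, you might group links by referring host and surface those with commercial anchor text for manual review. The sketch below is a deliberately simple heuristic: the `MONEY_TERMS` list, the function name and the `(source_url, anchor_text)` input shape are assumptions for this example, and it does not replace judging each linking site’s quality.

```python
from collections import defaultdict
from urllib.parse import urlparse

# Hypothetical commercial terms; tailor these to your own vertical.
MONEY_TERMS = {"buy", "cheap", "best price", "discount"}


def questionable_hosts(backlinks: list[tuple[str, str]]) -> dict[str, list[str]]:
    """Map each referring host to the commercial anchor texts found on it.

    backlinks holds (source_url, anchor_text) pairs from whatever export you use.
    """
    flagged: dict[str, list[str]] = defaultdict(list)
    for source_url, anchor in backlinks:
        text = anchor.lower()
        if any(term in text for term in MONEY_TERMS):
            host = urlparse(source_url).hostname or source_url
            flagged[host].append(anchor)
    return dict(flagged)
```

Hosts that show up with many commercial anchors are a reasonable place to start the manual review and to begin building the file of questionable backlinks.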

Once you’ve created a file containing all your questionable or spammy backlinks, it’s time to work on getting those links removed from the web. This may require reaching out and asking other websites to take down links to your site or have them marked as “nofollow.”

Any spammy backlinks that you are not able to get removed, despite your best efforts, should be isolated and included in a .txt file called a disavow file.

Once the review is finalized and a disavow file created, it is recommended to upload the disavow file first (and receive confirmation of the change) before submitting documentation outlining all relevant steps taken to resolve the issues Google has highlighted.
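A disavow file is a plain text file with one entry per line: either a full URL or a whole domain prefixed with `domain:`, with lines starting with `#` treated as comments. A minimal sketch for generating one from your audit results follows; the function name and its parameters are assumptions for this example.

```python
def build_disavow(domains: list[str], urls: list[str], note: str = "") -> str:
    """Return disavow file content: optional comment, then domains, then URLs."""
    lines: list[str] = []
    if note:
        lines.append(f"# {note}")
    # Lowercase before deduplicating so "Spam.example.net" is not listed twice.
    lines += [f"domain:{d}" for d in sorted({d.lower() for d in domains})]
    lines += sorted(set(urls))
    return "\n".join(lines) + "\n"
```

Disavowing at the domain level is usually the more robust choice when an entire site is spammy, since individual URLs on such sites tend to multiply.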

Reconsideration requests and related notifications

Any Google penalty can be lifted and the website will not be held back because of past transgressions.

If you’ve received a manual action and have made a good faith effort to fix the issues that triggered it, you can request for Google to review your site so that you can have the penalty lifted.

This is what is known as filing a reconsideration request.

Any time you receive a manual action notification, it should outline all the steps you should take to rectify the problem. These steps will vary depending on the specific penalty that has been issued.

Once you’ve satisfied all of the requirements outlined by Google, the final step should contain a “Reconsideration Request” button that will initiate the process once clicked.

As part of the reconsideration request process, you may need to provide the Google team with documentation outlining the steps you have taken to bring the site into compliance with Google Search Essentials. This will help build a case for why the manual action should be lifted.

Once you’ve fixed all your website issues and submitted a reconsideration request, you may receive one of the following notifications in Google Search Console.

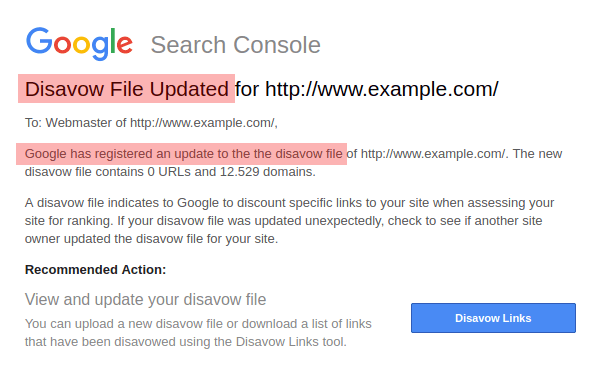

Disavow file updated notification

Any promising attempt to resolve a manual action related to unnatural backlinks will involve submitting a disavow file, which contains all the spammy backlinks to your site that you were unable to remove.

Submitting your disavow file will trigger a message confirming that a disavow file has been uploaded. It will specify the number of URLs and domains submitted.

Please note that while updating an existing disavow file, previously submitted URLs will be overwritten unless they are expressly included in a new file that is about to be submitted. Google’s Disavow Links Tool does not, as of this writing, support incremental updates.
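Because each upload replaces the previous file, it is safest to merge new findings into the existing file before submitting it. The sketch below keeps every existing entry, appends only those new entries that are not already present, and preserves comment lines. The function name is an assumption for this example.

```python
def merge_disavow(existing_text: str, new_entries: list[str]) -> str:
    """Return a full replacement file: all old entries plus any new ones."""
    merged: list[str] = []
    seen: set[str] = set()
    for line in existing_text.splitlines() + list(new_entries):
        entry = line.strip()
        if not entry:
            continue  # skip blank lines
        # Comments are always kept; other entries are kept once.
        if entry.startswith("#") or entry not in seen:
            merged.append(entry)
            seen.add(entry)
    return "\n".join(merged) + "\n"
```

Keeping the merged file under version control also gives you a record of what was disavowed and when, which is useful documentation for the reconsideration request.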

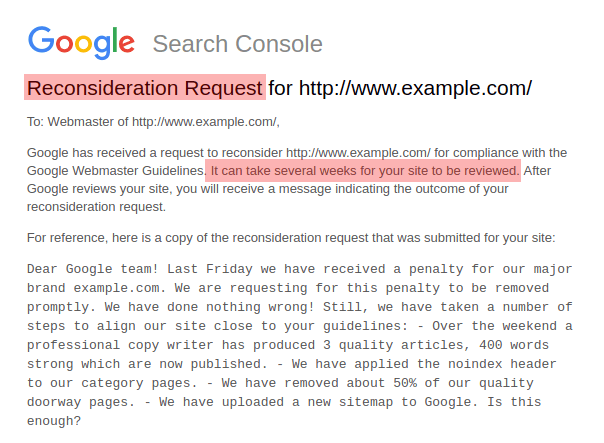

Reconsideration request (submission confirmed)


After you submit your site for reconsideration, Google will confirm receiving a reconsideration request in the same way they acknowledge receiving a disavow file. The specific text seems to vary, often to manage expectations regarding the expected processing time.

Currently, there’s no officially guaranteed turnaround time for reconsideration requests to be processed. Experience shows that this process can take anywhere from a few hours (rare) to a few days (more common) to several weeks (worst-case scenario).

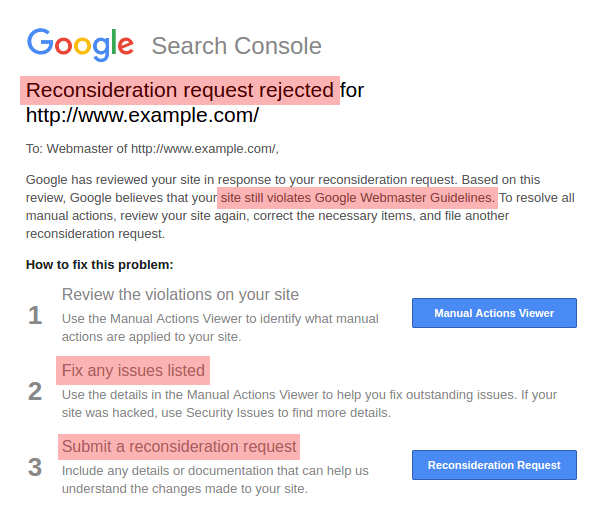

Reconsideration request rejected

If the efforts made to get back into Google’s good graces are deemed insufficient, a reconsideration request rejected message is forthcoming. That message seems to be uniform and merely states the active status of the penalty.

There are occasionally variations of the message which go beyond notifying the site owner and offer a little guidance regarding the persistent violation.

Those messages, which seem to be most common in the case of unnatural inbound link penalties, are customized and include specific violation examples.

Any spam backlink samples that are explicitly mentioned in such a message must be disavowed. However, merely disavowing the links Google highlighted in its notification is insufficient, and a complete backlink audit is in order.

Regardless of the type of penalty applied, repeated reconsideration request attempts are possible. Currently, Google seems to have no cap on the total number of requests that are allowed.

However, every new request must reflect an increased effort to align the website with Google Search Essentials, or there’s little chance of eventual success.

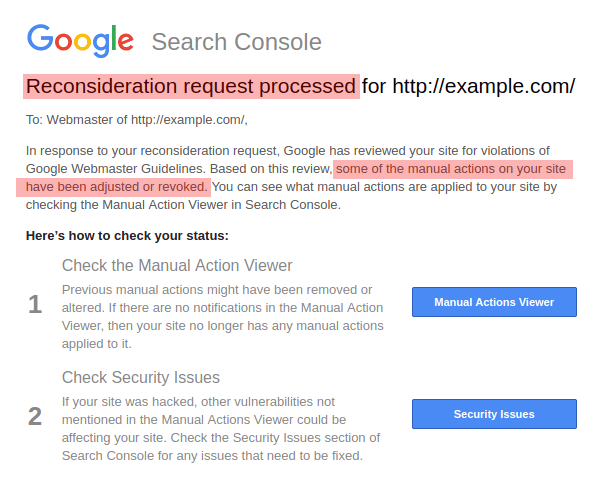

Reconsideration request processed

The majority of all reconsideration requests submitted to Google are processed and either approved or rejected.

However, there are rare exceptions in a small number of edge cases where a clear-cut decision does not seem to be possible. These cases are met with a Reconsideration request processed notification.

Judging by the wording, this seems to indicate that while a worthwhile effort was recognized, there’s more than just one type of violation present.

This may explain why it implies that one penalty may have been lifted while another one was refined.

Without a doubt, this message does not mean good news for the site’s owner – or the site’s visibility. Consequently, more investigative work is required.

There is a silver lining, however.

Most reconsideration request processed notifications come with a specific “note from your reviewer.” This is a manually edited message from a sympathetic Googler trying to help – an encouraging signal for the site’s future prospects.

Reconsideration request approved

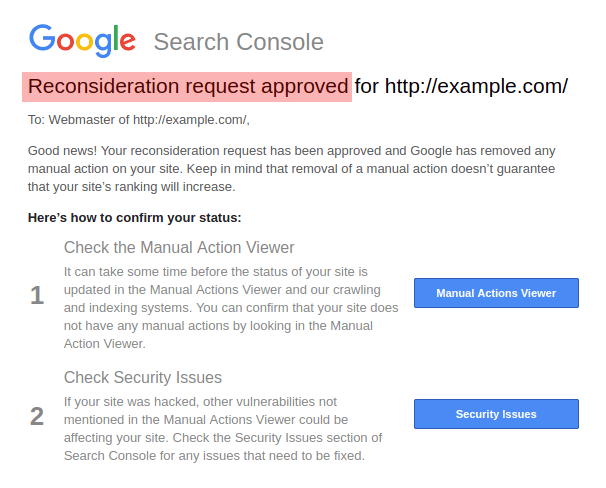

Google’s manual action removal triggers a short, uniform reconsideration request approved notification.

Aside from mentioning that the penalty has been lifted, this notification makes it clear that coming back into compliance with Google Search Essentials does not necessarily mean that your site will experience improved rankings in organic Google Search. The site may grow in visibility, stagnate or drop.

There’s no further action required once this message is received, other than focusing on users and the core of the website activity again. It is the best possible outcome to be expected.

Any questions about Google’s manual penalties not addressed sufficiently in this article are welcome and appreciated. All reader input received will be considered to keep this ultimate guide covering manual penalties up to date.

Opinions expressed in this article are those of the guest author and not necessarily Search Engine Land. Staff authors are listed here.
