How Content Quality Analysis Works With SEO


The Periodic Table of SEO Ranking Factors panel at the recent SMX Advanced show stimulated some fresh thinking for me on the role of content analysis and SEO.

In particular, Marcus Tober of Searchmetrics shared some interesting data from one of their latest studies.

What I’d like to discuss here is the notion of co-occurrence analysis (which is available in Searchmetrics Suite as Content Optimization).

What Is Co-Occurrence Analysis?

In keyword research, this refers to an analysis of which words appear most commonly on a page. Imagine you create a page on women’s shoes. You can then analyze the content on the page to see which words are most common. For example, the results of the analysis for your page might look like this table:

The “Occurrences” column shows how many times the word in the “Word” column to its left appears on the page.

Notice that you don’t see any instances of words like “the,” “and,” and “it” in the list. These are examples of what we call “stop words.” These words are usually of no value to this kind of analysis, since search engines ignore them in most cases.

There are rare exceptions, such as proper names like “The Office” (a TV show) and “The Home Depot” (the company’s full proper name), but that is not a factor in this analysis.
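The counting step described above is simple to sketch. The example below is a minimal illustration that assumes a plain-text page and a small, hand-picked stop-word list. The `STOP_WORDS` set and the `count_occurrences` function are hypothetical and not part of any Searchmetrics tool. The sketch also ignores exceptions such as “The Office.”

```python
import re
from collections import Counter

# Hypothetical, deliberately small stop-word list; real tools use much larger ones.
STOP_WORDS = {"the", "and", "it", "a", "an", "of", "to", "in", "for", "on", "is", "with", "our"}


def count_occurrences(text: str, stop_words: set[str] = STOP_WORDS) -> Counter:
    """Count how often each non-stop word appears in the page text."""
    # Lowercase, then keep runs of letters, digits, and apostrophes as words.
    words = re.findall(r"[a-z0-9']+", text.lower())
    return Counter(w for w in words if w not in stop_words)


if __name__ == "__main__":
    page = "Shop women's shoes on sale. View the shoes in our cart and save on shipping."
    # Print the most frequent words, like the "Word" / "Occurrences" table.
    for word, n in count_occurrences(page).most_common(3):
        print(word, n)
```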

The Searchmetrics Study

As a next step, you can run the same analysis across all of the pages in the top 10 (or more) search results for a given query to see what types of words they use. Searchmetrics did something similar in their recent study: they looked at 15,000 queries involving more than 350,000 URLs. Let’s look at the results of the test:

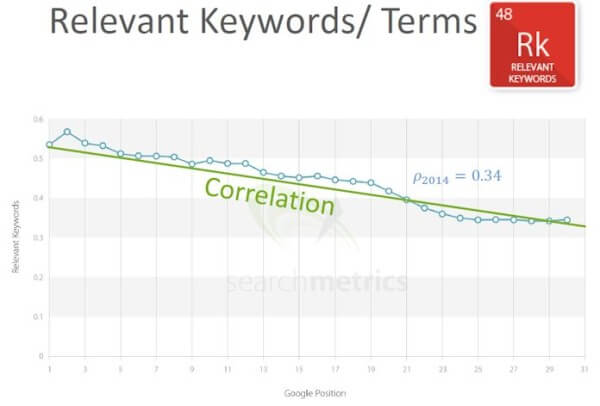

The Y-axis shows a score for the usage of the relevant keywords. The X-axis shows the ranking position in search engine results pages (SERPs), ranging from position 1 to position 30.

Remember, this was run across 15,000 different SERPs. Based on this, the data shows a strong correlation between higher usage of the relevant terms and better ranking positions, with a correlation score of 0.34.
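Here is a minimal sketch that makes a correlation between term usage and ranking position concrete. The article does not say which statistic Searchmetrics used. This example assumes a Spearman rank correlation, which suits ordinal data such as ranking positions. Because position 1 is the best result, the positions are negated, so a positive value means higher scores tend to appear higher in the results. The sample scores are made up for illustration.

```python
def average_ranks(values: list[float]) -> list[float]:
    """Rank values from 1..n (smallest = 1), giving ties the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j to the end of a run of tied values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average = (i + j) / 2 + 1  # 1-based average rank for the tied run
        for k in range(i, j + 1):
            ranks[order[k]] = average
        i = j + 1
    return ranks


def pearson(x: list[float], y: list[float]) -> float:
    """Pearson correlation; assumes neither input is constant."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = sum((a - mean_x) ** 2 for a in x) ** 0.5
    sd_y = sum((b - mean_y) ** 2 for b in y) ** 0.5
    return cov / (sd_x * sd_y)


def spearman(x: list[float], y: list[float]) -> float:
    """Spearman rank correlation: the Pearson correlation of the ranks."""
    return pearson(average_ranks(x), average_ranks(y))


def ranking_correlation(positions: list[int], scores: list[float]) -> float:
    """Positive when higher scores sit at better (numerically smaller) positions."""
    # Negate positions so that position 1 becomes the "largest" value.
    return spearman([-p for p in positions], scores)


if __name__ == "__main__":
    # Hypothetical keyword-usage scores for one SERP; these are not Searchmetrics data.
    positions = [1, 2, 3, 4, 5, 6]
    scores = [0.82, 0.79, 0.81, 0.70, 0.66, 0.60]
    print(round(ranking_correlation(positions, scores), 2))
```

A study across many queries would presumably compute a value like this for each SERP and then average the results. That aggregation step is an assumption and is left out here.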

Are We Really Back To Keyword Density?

No, not at all. This is quite different: it is not about one single phrase being repeated over and over on the web page. It is much more sophisticated than that.

In the early days of search engines, this type of analysis was done in a very simplistic way. Simply repeating a single keyword phrase over and over was all it took to get a page to rank highly for that phrase. This led to a lot of keyword stuffing by spammers.
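To make the contrast concrete, here is a minimal sketch of the kind of simplistic metric described above. Keyword density is the share of a page’s words taken up by one repeated phrase. It looks at a single phrase and ignores everything else on the page, which is why it was so easy to game. The `keyword_density` function is a hypothetical illustration.

```python
import re


def keyword_density(text: str, phrase: str) -> float:
    """Fraction of the page's words covered by occurrences of `phrase`."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    target = re.findall(r"[a-z0-9']+", phrase.lower())
    if not words or not target:
        return 0.0
    n = len(target)
    # Count every position where the phrase appears as a contiguous word sequence.
    hits = sum(1 for i in range(len(words) - n + 1) if words[i:i + n] == target)
    return hits * n / len(words)


if __name__ == "__main__":
    stuffed = "cheap shoes cheap shoes cheap shoes buy cheap shoes"
    print(keyword_density(stuffed, "cheap shoes"))
```

Overlapping matches are counted separately, so a phrase such as “shoes shoes” can count the same word twice. For this crude metric, that approximation is acceptable.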

Here is a partial snapshot extracted from Searchmetrics Content Optimization tools that shows the kinds of words found in a co-occurrence analysis:

You can see words like “view” and “sale” in this partial screenshot, and the rest of the report (not shown here) includes other words such as “price,” “cart” and “shipping.” If an e-commerce page does not contain such words, that may suggest the page being analyzed is not actually a place where you can buy women’s shoes.
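The check described above can be sketched as a simple coverage score: does a page contain the kinds of words you would expect on a shopping page? The expected terms below are the ones mentioned in this article. In practice they would come from an analysis of top-ranking pages. The `term_coverage` function is a hypothetical illustration, not how Searchmetrics or any search engine scores pages.

```python
import re


def term_coverage(text: str, expected_terms: set[str]) -> tuple[float, set[str]]:
    """Return the fraction of expected terms present on the page, plus the missing ones."""
    words = set(re.findall(r"[a-z0-9']+", text.lower()))
    present = expected_terms & words
    missing = expected_terms - words
    coverage = len(present) / len(expected_terms) if expected_terms else 0.0
    return coverage, missing


if __name__ == "__main__":
    # Terms named in the article's example report.
    expected = {"view", "sale", "price", "cart", "shipping"}
    page = "Women's shoes on sale. View our styles and add them to your cart."
    print(term_coverage(page, expected))
```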

Pages with an unnatural mix of words may be detected using this type of analysis and judged to be of lower quality or relevance. Searchmetrics also tested the correlation of keyword relevance in 2013, and it appeared to be a factor in that test as well, though not as strongly as in this 2014 test.
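One simple way to flag an “unnatural mix of words” is to compare a page’s term distribution with an aggregate profile built from the pages that rank for the same query. That profile is the cross-SERP analysis described earlier. The sketch below uses cosine similarity over raw term frequencies. We chose that measure for illustration, and it does not describe how Searchmetrics or any search engine computes relevance. The page snippets are hypothetical, and stop-word removal is omitted for brevity.

```python
import math
import re
from collections import Counter


def term_vector(text: str) -> Counter:
    """Raw term frequencies for one page (stop-word removal omitted for brevity)."""
    return Counter(re.findall(r"[a-z0-9']+", text.lower()))


def aggregate_profile(pages: list[str]) -> Counter:
    """Average the length-normalized term frequencies of several top-ranking pages."""
    profile: Counter = Counter()
    for page in pages:
        counts = term_vector(page)
        total = sum(counts.values())
        if total == 0:
            continue  # skip empty pages
        for term, n in counts.items():
            profile[term] += n / total / len(pages)
    return profile


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine of the angle between two sparse term vectors (0.0 if either is empty)."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


if __name__ == "__main__":
    # Hypothetical snippets standing in for top-ranking pages.
    top_pages = [
        "women's shoes on sale view price add to cart free shipping",
        "shop women's shoes view price cart shipping returns",
        "women's shoes sale price cart shipping sizes",
    ]
    profile = aggregate_profile(top_pages)
    natural = "women's shoes view price and shipping details add to cart"
    unnatural = "casino casino shoes casino loans casino"
    print(cosine_similarity(term_vector(natural), profile))
    print(cosine_similarity(term_vector(unnatural), profile))
```

A lower similarity for the second page is the kind of signal that could mark it as an unnatural mix. Any cutoff for “too low” would be a further assumption.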

A search engine will probably perform this analysis at a much more sophisticated level than shown here. Below are a few things it might do differently:

- Extend the analysis from single words to key phrases.

- Lower a page’s quality and relevance scores if it does not include a reasonable mix of synonyms. This would be one way to identify pages that are mass-produced or machine-generated.

- Include the anchor text of incoming links in the analysis. A similar analysis could be used to detect a poor mix of anchor text in the links pointing to a web page. Most web pages may not have enough links for this type of analysis, but the home pages of many sites might.

- Flag too high a concentration of “the right words or phrases” as a problem.

- Strengthen the analysis further by using a hand-curated set of trusted seed sites. Since these are known to be quality sites, they can be given more weight in the analysis to reduce the chances of bad results.
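Two of the ideas in this list can be sketched together: extending the analysis from single words to phrases, and giving hand-curated seed sites more weight. The function names, the choice of two-word phrases (bigrams), and the `seed_weight` value below are hypothetical illustrations. Nothing here describes how any search engine actually implements these ideas, and the `.example` URLs are placeholders.

```python
import re
from collections import Counter


def phrases(text: str, n: int = 2) -> list[str]:
    """Return the n-word phrases (n-grams) in the text, e.g. bigrams for n=2."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return [" ".join(words[i:i + n]) for i in range(len(words) - n + 1)]


def weighted_phrase_profile(pages: dict[str, str], trusted_urls: set[str],
                            seed_weight: float = 3.0) -> Counter:
    """Count phrases across pages, weighting pages from trusted seed URLs more heavily.

    `pages` maps a URL to its text; `seed_weight` is an arbitrary illustrative value.
    """
    profile: Counter = Counter()
    for url, text in pages.items():
        weight = seed_weight if url in trusted_urls else 1.0
        for phrase in phrases(text):
            profile[phrase] += weight
    return profile


if __name__ == "__main__":
    pages = {
        "https://seed.example/a": "running shoes for women",
        "https://other.example/b": "running shoes on sale",
    }
    profile = weighted_phrase_profile(pages, trusted_urls={"https://seed.example/a"})
    print(profile.most_common(3))
```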

What Does This Mean?

The goal of the search engines is to recognize well-designed web pages that offer a high-quality user experience. That should be your first goal as well. However, this is a difficult art to learn, so expect to invest some time in getting it right. Start by recruiting talented writers to create content that matches user intent.

Then study the sites that rank well for the terms that matter to you, and learn from their approach. You can learn about page structure, key elements to include on the page, and how to provide a user experience that works.
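Part of this competitive review can be automated. The sketch below is a hypothetical illustration, not the Searchmetrics report. It lists terms that appear on at least half of the top-ranking pages you supply but are missing from your own page. The `min_share` threshold is an arbitrary assumption. Treat the output as prompts for a writer, not a checklist to stuff into the page.

```python
import re
from collections import Counter


def recommend_terms(competitor_pages: list[str], my_page: str,
                    min_share: float = 0.5,
                    stop_words: frozenset[str] = frozenset()) -> list[str]:
    """Suggest terms found on at least `min_share` of competitor pages but missing from `my_page`."""

    def words(text: str) -> set[str]:
        # Unique, lowercased words on a page, minus any stop words.
        return {w for w in re.findall(r"[a-z0-9']+", text.lower()) if w not in stop_words}

    mine = words(my_page)
    doc_freq: Counter = Counter()
    for page in competitor_pages:
        doc_freq.update(words(page))  # each term counts once per page
    threshold = min_share * len(competitor_pages)
    candidates = [t for t, df in doc_freq.items() if df >= threshold and t not in mine]
    # Most widely used terms first; ties broken alphabetically.
    return sorted(candidates, key=lambda t: (-doc_freq[t], t))


if __name__ == "__main__":
    # Hypothetical snippets from top-ranking competitor pages.
    competitors = [
        "women's shoes price cart shipping",
        "women's shoes price shipping returns",
        "shoes sale price",
    ]
    print(recommend_terms(competitors, "women's shoes for sale"))
```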

Searchmetrics can do this automatically for your keywords, delivering a report of terms and phrases that should definitely be used as well as phrases that are highly relevant to your topic. And if you can, take advantage of user testing services.

You can try a service like Usertesting.com, or just ask some people you know to take an impartial look at your pages and critique them. The most important lesson from this is that you need to be very holistic in your approach to putting content on your pages and create the best possible experience there. This will be an investment of time, but it will help you both with conversion and user satisfaction.

We can’t say with any certainty that it’s an SEO ranking factor, but the Searchmetrics data shows a very strong correlation between this type of thinking and higher rankings, and that’s good enough for me to know it’s important, whether or not it’s a ranking factor!

Opinions expressed in this article are those of the guest author and not necessarily those of Search Engine Land.
